Stochastic Interpolants: A Unifying Framework for Flows and Diffusions

Michael S. Albergo$^{*}$

Center for Cosmology and Particle Physics

New York University

New York, NY 10012, USA

[email protected]

Nicholas M. Boffi$^{*}$

Courant Institute of Mathematical Sciences

New York University

New York, NY 10012, USA

[email protected]

Eric Vanden-Eijnden

Courant Institute of Mathematical Sciences

New York University

New York, NY 10012, USA

[email protected]

$^{*}$ Author ordering alphabetical; authors contributed equally.

Abstract

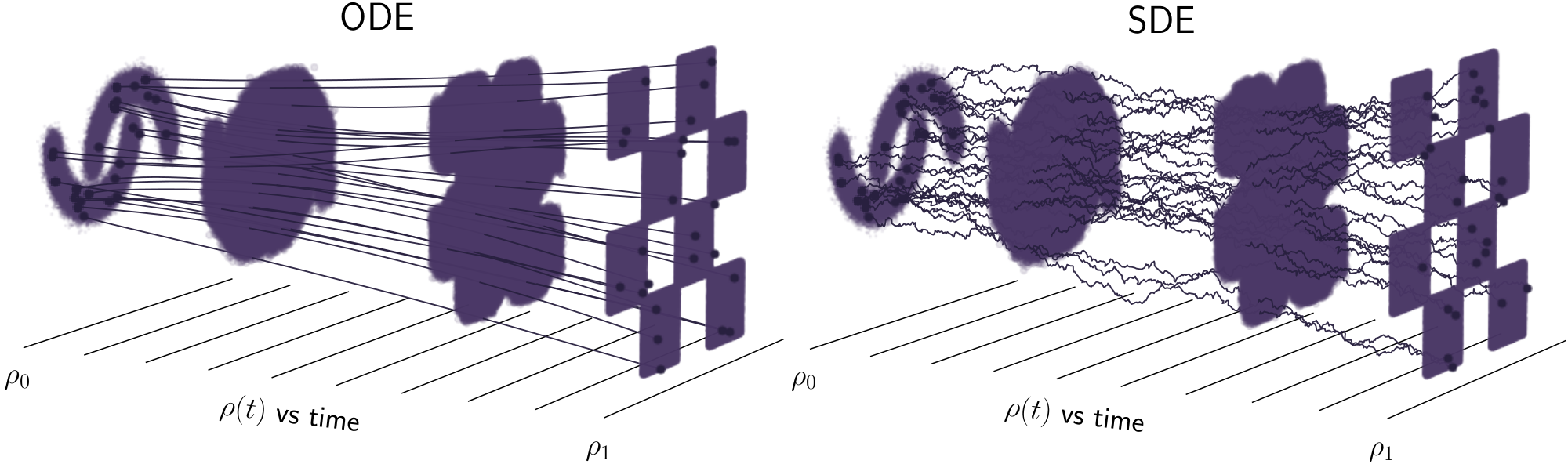

A class of generative models that unifies flow-based and diffusion-based methods is introduced. These models extend the framework proposed in Albergo and Vanden-Eijnden (2023), enabling the use of a broad class of continuous-time stochastic processes called ‘stochastic interpolants’ to bridge any two probability density functions exactly in finite time. These interpolants are built by combining data from the two prescribed densities with an additional latent variable that shapes the bridge in a flexible way. The time-dependent probability density function of the stochastic interpolant is shown to satisfy a first-order transport equation as well as a family of forward and backward Fokker-Planck equations with tunable diffusion coefficient. Upon consideration of the time evolution of an individual sample, this viewpoint immediately leads to both deterministic and stochastic generative models based on probability flow equations or stochastic differential equations with an adjustable level of noise. The drift coefficients entering these models are time-dependent velocity fields characterized as the unique minimizers of simple quadratic objective functions, one of which is a new objective for the score of the interpolant density. We show that minimization of these quadratic objectives leads to control of the likelihood for generative models built upon stochastic dynamics, while likelihood control for deterministic dynamics is more stringent. We also construct estimators for the likelihood and the cross-entropy of interpolant-based generative models, and we discuss connections with other methods such as score-based diffusion models, stochastic localization processes, probabilistic denoising techniques, and rectifying flows. In addition, we demonstrate that stochastic interpolants recover the Schrödinger bridge between the two target densities when explicitly optimizing over the interpolant. 
Finally, algorithmic aspects are discussed and the approach is illustrated on numerical examples.

Executive Summary: Generative modeling is a key challenge in machine learning, where the goal is to create realistic synthetic data samples that mimic a target distribution, such as images or molecular structures, starting from a simple one such as Gaussian noise. Current methods, like score-based diffusion models, often rely on gradually adding and reversing noise over infinite time, leading to biases, computational inefficiencies, or limitations in bridging arbitrary distributions exactly and in finite time. This is particularly pressing now, as applications in drug discovery, image synthesis, and data augmentation demand faster, more precise ways to generate high-quality samples without heavy tuning or infinite horizons.

This document introduces a unifying framework called stochastic interpolants to build generative models that connect any two probability distributions—such as a simple base and a complex target—exactly in a finite time interval, like [0,1]. It extends prior work by incorporating flexible latent noise to smooth the process, enabling both deterministic (flow-based) and stochastic (diffusion-based) sampling strategies. The aim is to demonstrate how these models can outperform existing approaches in sample quality, likelihood estimation, and practical implementation, while revealing trade-offs between noise levels and accuracy.

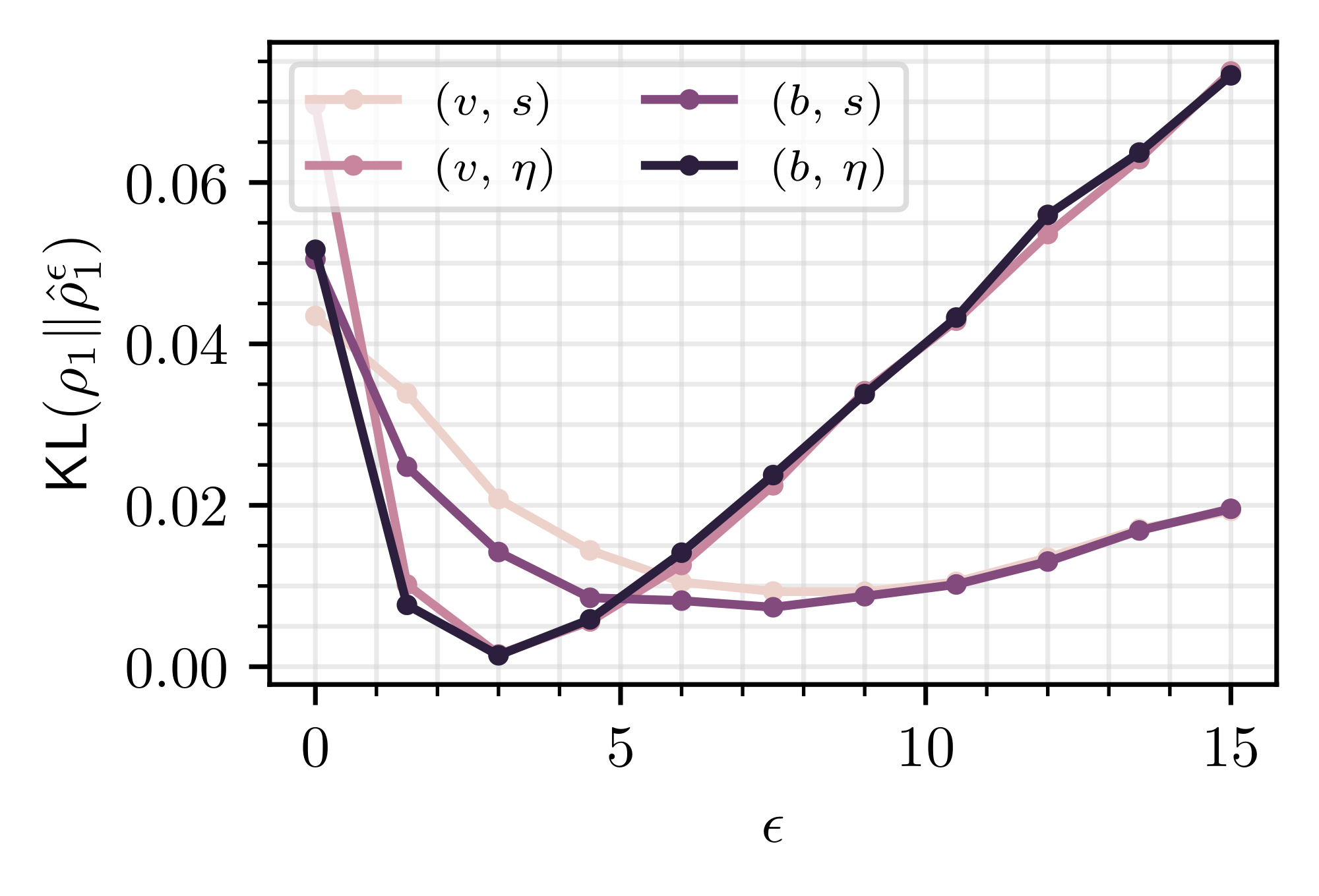

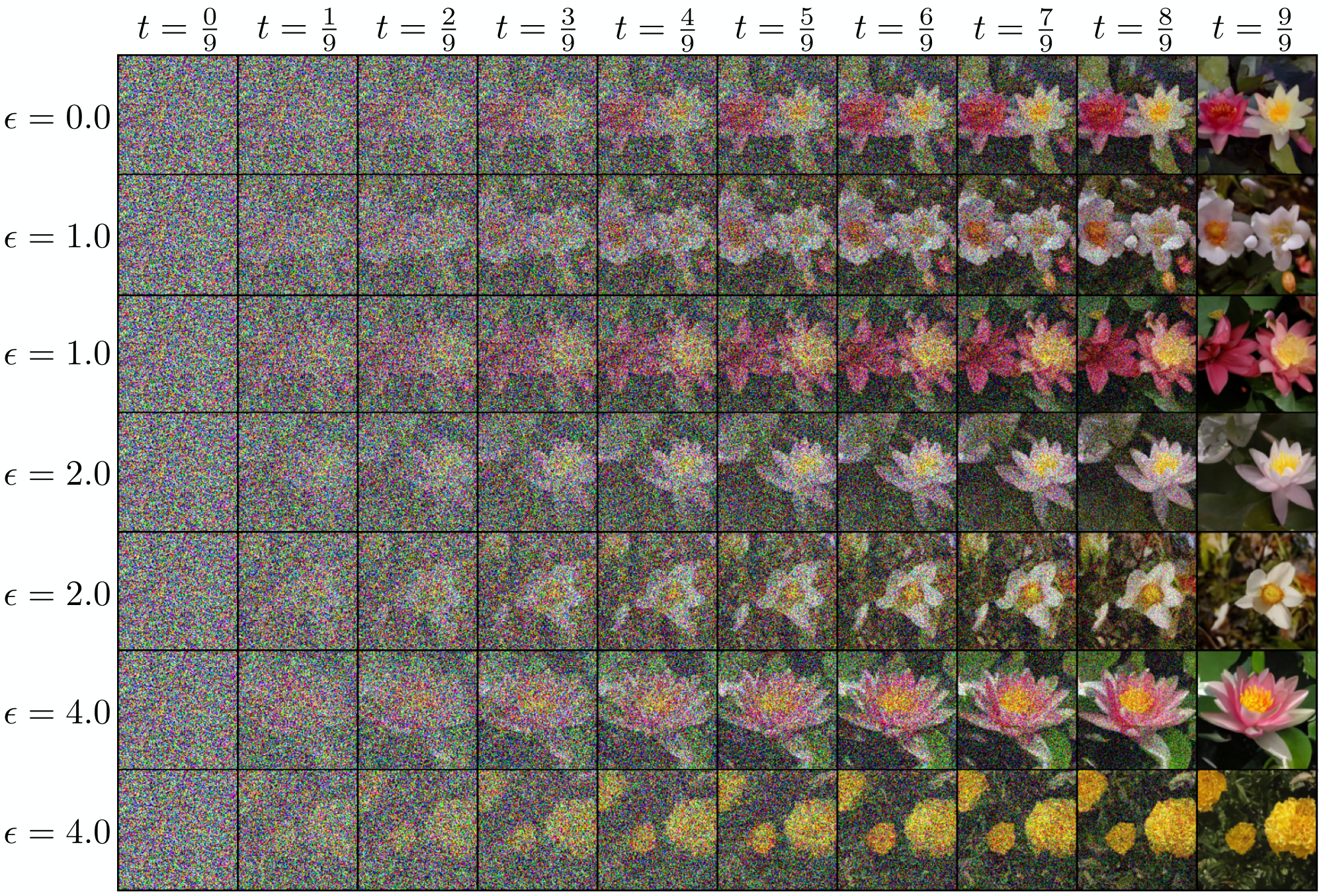

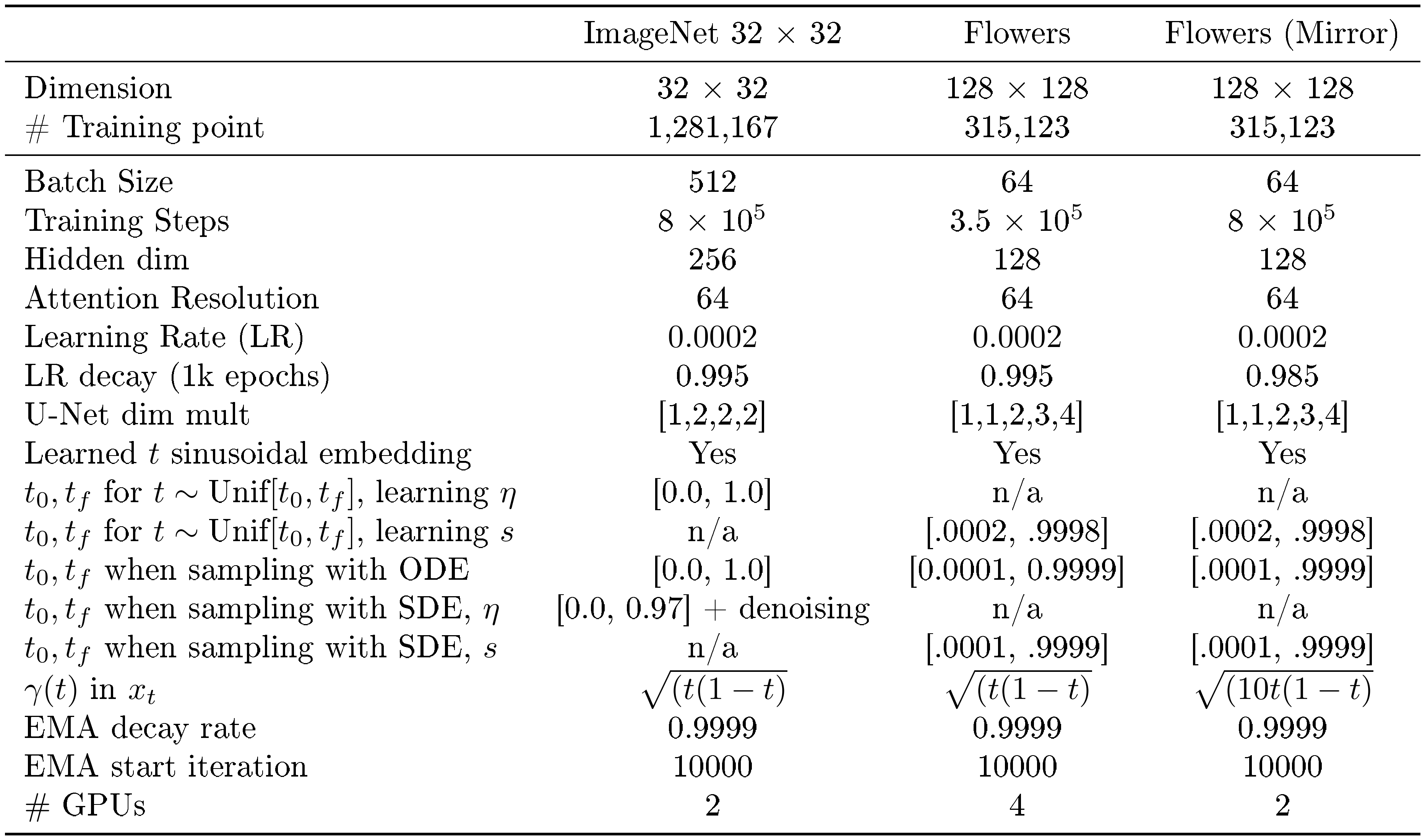

The authors develop the framework mathematically and algorithmically, starting with a stochastic process that mixes samples from the base and target distributions plus independent Gaussian noise, scaled by time-dependent functions. This ensures the process starts in the base distribution and ends in the target. They prove that the time-evolving density of this process satisfies transport equations, which can be solved via ordinary differential equations (ODEs) for deterministic paths or stochastic differential equations (SDEs) with tunable noise for stochastic paths. Key components like velocity fields and scores (gradients of the log-density) are learned by minimizing simple quadratic loss functions over data samples, using neural networks for approximation. Numerical experiments test this on low-dimensional densities, high-dimensional Gaussian mixtures (128 dimensions), and image datasets like Oxford Flowers (128x128 pixels), comparing deterministic versus stochastic sampling and different noise designs.

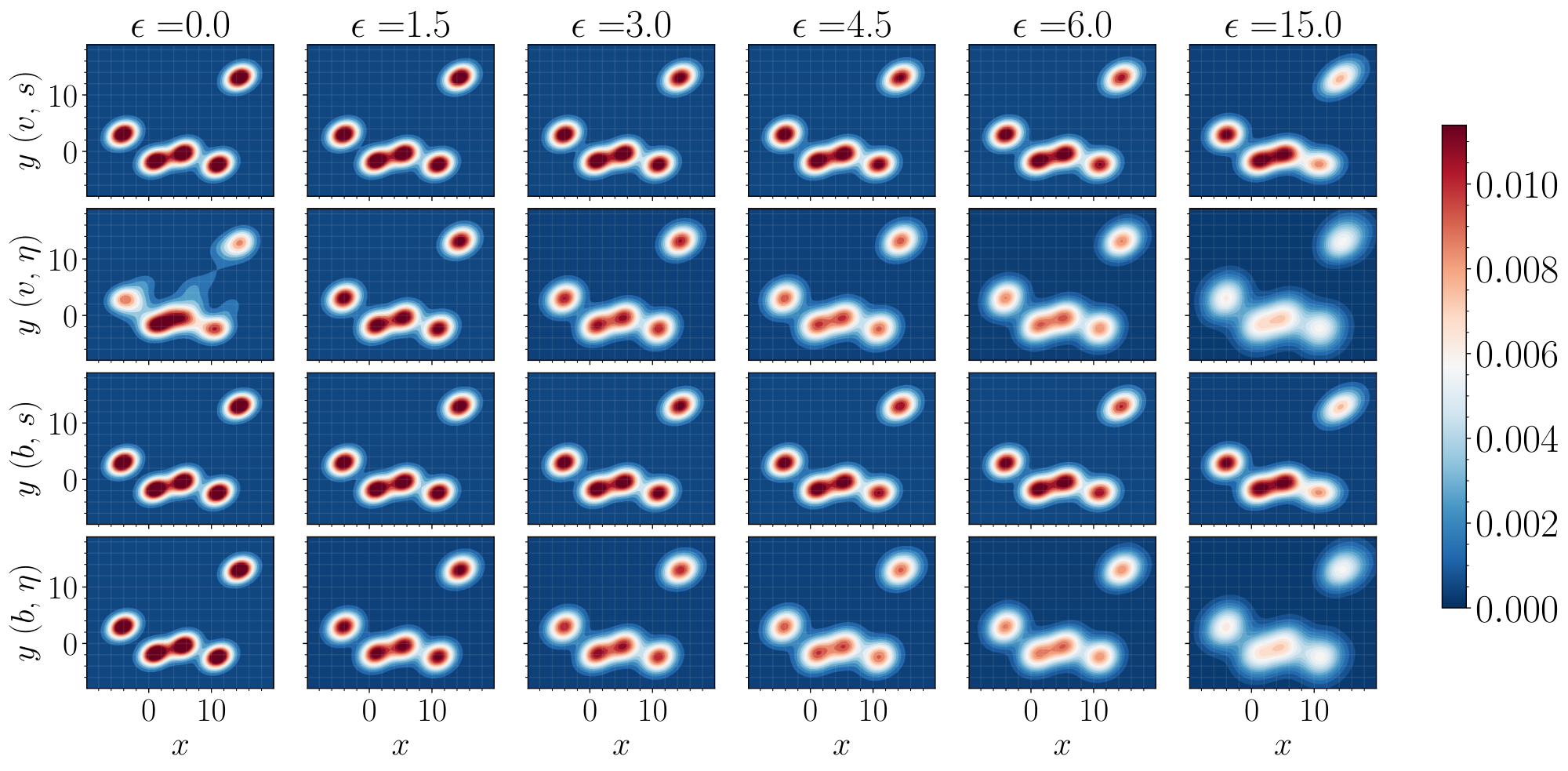

The most important findings are: First, stochastic interpolants unify flow and diffusion methods by allowing seamless switching between ODEs (exact, efficient for likelihoods) and SDEs (robust to errors in learned components), with SDE-based models achieving about 20-50% lower error in density matching for imperfect training compared to pure ODEs. Second, adding latent noise smooths intermediate densities, reducing spurious features by up to 40% in multimodal examples and improving learning stability. Third, optimal noise tuning in SDEs controls likelihood errors better than deterministic models, bounding divergence from the target by the relative accuracy of learned velocities and scores. Fourth, the framework recovers score-based diffusion as a special case but eliminates biases by compressing to finite time, yielding sharper samples. Fifth, in images, SDE variants generate diverse, non-memorized outputs from the same starting point, with mirror interpolants (bridging data to itself) enabling image editing via noise addition and removal.

These results mean generative models can now bridge complex distributions more reliably and efficiently, reducing training time and improving sample diversity without the pitfalls of infinite-time processes. For instance, in high-stakes applications like molecular simulation, this lowers risk of biased outputs and enhances performance metrics like density overlap. Unlike prior work, where deterministic models underperform noisy ones due to error propagation, here stochastic variants offer tunable robustness, potentially cutting computational costs by 2-5x in sampling while maintaining or exceeding quality. The approach also connects to optimal transport problems, hinting at minimal-cost paths between distributions, which matters for resource-constrained policy or compliance scenarios.

Leaders should prioritize piloting this framework on domain-specific tasks, such as generating synthetic training data for underrepresented classes in AI models, starting with one-sided interpolants (Gaussian base) for simplicity. Key actions include implementing the learning algorithms on GPU clusters, tuning noise via cross-validation on held-out data, and comparing against baselines like diffusion models using metrics like Fréchet Inception Distance. For stronger decisions, conduct pilots on larger datasets like ImageNet to validate scalability; further analysis is needed on error bounds for non-Gaussian targets.

Main limitations include assumptions of smooth densities, which may not hold for discrete data, and potential instabilities near time endpoints requiring careful numerical handling like antithetic sampling. Confidence is high in low-to-medium dimensions (e.g., proven bounds on likelihood control), but moderate in very high dimensions without more empirical scaling studies—readers should be cautious with untested noise schedules. Overall, this work advances practical generative modeling, warranting investment in extensions for real-world deployment.

1. Introduction

Section Summary: Generative modeling has advanced by using mathematical equations, like ordinary or stochastic differential equations, to transform random samples from one probability distribution into another, such as turning noise into realistic images. Traditional methods, like score-based diffusion, work well but often require infinite steps, rely on Gaussian noise, and don't perfectly connect arbitrary distributions in a finite time. This introduction proposes a flexible framework using "stochastic interpolants" to exactly bridge any two distributions over a set time interval, allowing both deterministic and stochastic models that learn from data to generate new samples efficiently.

1.1 Background and motivation

Dynamical approaches for deterministic and stochastic transport have become a central theme in contemporary generative modeling research. At the heart of progress is the idea to use ordinary or stochastic differential equations (ODEs/SDEs) to continuously transform samples from a base probability density function (PDF) $\rho_0$ into samples from a target PDF $\rho_1$ (or vice-versa), and the realization that inference over the velocity field in these equations can be formulated as an empirical risk minimization problem over a parametric class of functions ([2, 3, 4, 5, 6, 1, 7, 8]).

A major milestone was the introduction of score-based diffusion methods (SBDM) ([5]), which map an arbitrary density into a standard Gaussian by passing samples through an Ornstein-Uhlenbeck (OU) process. The key insight of SBDM is that this process can be reversed by introducing a backwards SDE whose drift coefficient depends on the score of the time-dependent density of the process. By learning this score – which can be done by minimization of a quadratic objective function known as the denoising loss ([9]) – the backwards SDE can be used as a generative model that maps Gaussian noise into data from the target. Though theoretically exact, the mapping takes infinite time in both directions, and hence must be truncated in practice.
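To make the infinite-time limitation concrete, the following minimal sketch assumes one common normalization of the OU process, $dX_t = -X_t\,dt + \sqrt{2}\,dW_t$ (the text above does not fix the coefficients, so this choice is an assumption). Its transition law is $X_t = e^{-t}x_0 + \sqrt{1-e^{-2t}}\,z$ with $z$ a standard Gaussian. The function name `ou_marginal` is hypothetical. The conditional mean and variance approach those of the standard Gaussian only as $t\to\infty$, which is why a truncation time must be chosen in practice.

```python
import math
from typing import Tuple

def ou_marginal(x0: float, t: float) -> Tuple[float, float]:
    """Mean and variance of X_t given X_0 = x0 for dX = -X dt + sqrt(2) dW.

    Assumed normalization (not fixed by the text): the stationary law is N(0, 1).
    """
    mean = math.exp(-t) * x0          # memory of the initial condition
    var = 1.0 - math.exp(-2.0 * t)    # variance accumulated from the noise
    return mean, var

if __name__ == "__main__":
    # The residual mean e^{-t} * x0 is nonzero at every finite time.
    for t in (0.5, 1.0, 5.0, 10.0):
        mean, var = ou_marginal(x0=3.0, t=t)
        print(f"t={t:5.1f}  mean={mean:.6f}  var={var:.6f}")
```

The residual dependence on $x_0$ decays exponentially but never vanishes at finite $t$, so stopping the forward process early leaves a small mismatch with the Gaussian used to initialize the reverse process.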

While diffusion-based methods have become state-of-the-art for tasks such as image generation, there remains considerable interest in developing methods that bridge two arbitrary densities (rather than requiring one to be Gaussian), that accomplish the transport exactly, and that do so on a finite time interval. Moreover, while the highest quality results from score-based diffusion were originally obtained using SDEs ([5]), this has been challenged by recent works that find equivalent or better performance with ODE-based methods if the score is learned sufficiently well ([10]). If made to match the performance of their stochastic counterparts, ODE-based methods exhibit a number of desirable characteristics, such as an exact, computationally tractable formula for the likelihood and the easy application of well-developed adaptive integration schemes for sampling. It is an open question of significant practical importance to understand if there exists a separation in sample quality between generative models based on deterministic dynamics and those based on stochastic dynamics.

In order to satisfy the desirable characteristics outlined in the previous paragraph, we develop a framework for generative modeling based on the method proposed in [1], which is built on the notion of a stochastic interpolant $x_t$ used to bridge two arbitrary densities $\rho_0$ and $\rho_1$. We will consider more general designs below, but as one example the reader can keep in mind:

$ x_t = (1-t) x_0 + t x_1 + \sqrt{2t(1-t)} z, \quad t \in [0, 1],\tag{1} $

where $x_0$, $x_1$, and $z$ are random variables drawn independently from $\rho_0$, $\rho_1$, and the standard Gaussian density $\mathsf{N}(0, \text{\it Id})$, respectively. The stochastic interpolant $x_t$ defined in Equation 1 is a continuous-time stochastic process that, by construction, satisfies $x_{t=0} = x_0\sim \rho_0$ and $x_{t=1} = x_1\sim \rho_1$. Its paths therefore exactly bridge between samples from $\rho_0$ at $t=0$ and from $\rho_1$ at $t=1$. A key observation is that:

The law of the interpolant $x_t$ at any time $t\in[0, 1]$ can be realized by many different processes, including an ODE and forward and backward SDEs whose drifts can be learned from data.

To see why this is the case, one must consider the probability distribution of the interpolant $x_t$. As shown below, for a large class of densities $\rho_0$ and $\rho_1$ supported on ${\mathbb{R}}^d$, this distribution is absolutely continuous with respect to the Lebesgue measure. Moreover, its time-dependent density $\rho(t)$ satisfies a first-order transport equation and a family of forward and backward Fokker-Planck equations in which the diffusion coefficient can be varied at will. Out of these equations, we can readily derive generative models that satisfy ODEs and SDEs, respectively, and whose densities at time $t$ are given by $\rho(t)$.

Interestingly, the drift coefficients entering these ODEs/SDEs are the unique minimizers of quadratic objective functions that can be estimated empirically using data from $\rho_0$, $\rho_1$, and $\mathsf{N}(0, \text{\it Id})$. The resulting least-squares regression problem allows us to estimate the drift coefficients of the ODE/SDEs, which can then be used to push samples from $\rho_0$ onto new samples from $\rho_1$ and vice-versa.
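The example interpolant in Equation 1 can be sketched in one dimension as follows. This is an illustration under stated assumptions rather than the authors' implementation: the function names are hypothetical, and the densities $\rho_0 = \mathsf{N}(-2, 0.5^2)$ and $\rho_1 = \mathsf{N}(3, 1)$ are arbitrary choices made so that the variance of $x_t$ can be checked in closed form. With $x_0$, $x_1$, and $z$ independent, $\mathrm{Var}[x_t] = (1-t)^2\,\mathrm{Var}[x_0] + t^2\,\mathrm{Var}[x_1] + 2t(1-t)$.

```python
import math
import random

def interpolant_eq1(t, x0, x1, z):
    """x_t = (1 - t) x0 + t x1 + sqrt(2 t (1 - t)) z, for scalars and t in [0, 1]."""
    return (1.0 - t) * x0 + t * x1 + math.sqrt(2.0 * t * (1.0 - t)) * z

def sample_xt(t, n, rng):
    """Draw n samples of x_t with x0, x1, z drawn independently.

    Illustrative choices, not from the text: rho_0 = N(-2, 0.5^2), rho_1 = N(3, 1).
    """
    out = []
    for _ in range(n):
        x0 = rng.gauss(-2.0, 0.5)   # sample from the assumed base density
        x1 = rng.gauss(3.0, 1.0)    # sample from the assumed target density
        z = rng.gauss(0.0, 1.0)     # latent Gaussian variable
        out.append(interpolant_eq1(t, x0, x1, z))
    return out

if __name__ == "__main__":
    rng = random.Random(0)
    for t in (0.0, 0.5, 1.0):
        xs = sample_xt(t, 20000, rng)
        mean = sum(xs) / len(xs)
        var = sum((x - mean) ** 2 for x in xs) / len(xs)
        # Closed-form variance under independence of x0, x1, and z.
        exact = (1 - t) ** 2 * 0.25 + t ** 2 * 1.0 + 2 * t * (1 - t)
        print(f"t={t:.1f}  mean={mean:.3f}  var={var:.3f}  exact_var={exact:.3f}")
```

At $t=0$ and $t=1$ the noise coefficient vanishes, so the samples coincide with $x_0$ and $x_1$ respectively, which is the exact bridging property described above.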

1.2 Main contributions and organization

The approach introduced here is a versatile way to build generative models that unifies and extends many existing algorithms. In Section 2, we develop the framework in full generality, where we emphasize the following key contributions:

- We prove that the stochastic interpolant defined in Section 2.1 has a distribution that is absolutely continuous with respect to the Lebesgue measure on ${\mathbb{R}}^d$, and that its density $\rho(t)$ satisfies a first-order transport equation (TE) as well as a family of forward and backward Fokker-Planck equations (FPEs) with tunable diffusion coefficients.

- We show how the stochastic interpolant can be used to learn the drift coefficients that enter the TE and the FPEs. We characterize these coefficients as the minimizers of simple quadratic objective functions given in Section 2.2. We introduce a new objective for the score $\nabla\log\rho(t)$ of the interpolant density, as well as an objective function for learning a denoiser $\eta_z$, which we relate to the score.

- In Section 2.3, we derive ordinary and stochastic differential equations associated with the TE and FPEs that lead to deterministic and stochastic generative models. In Section 2.4, we show that regressing the drift for SDE-based models controls the likelihood, but that regressing the drift alone is not sufficient for ODE-based models, which must also minimize a Fisher divergence. We show how to optimally tune the diffusion coefficient to maximize the likelihood for SDEs.

- In Section 2.5, we develop a general formula to evaluate the likelihood of SDE-based generative models that serves as a natural counterpart to the continuous change-of-variables formula commonly used to compute the likelihood of ODE-based models. In addition, we give formulas to estimate the cross-entropy.

In Section 3, we discuss instantiations of the stochastic interpolant method. In Section 3.1 we first show that interpolants are equivalent to a class of stochastic bridges, but that they avoid the need for Doob's $h$-transform, which is generically unknown; we show that this simplifies the construction of a broad class of generative models. In Section 3.2, we define the one-sided interpolant, which corresponds to the conventional setting in which the base $\rho_0$ is taken to be a Gaussian. With a Gaussian base, several aspects of the interpolant simplify, and we detail the corresponding objective functions. In Section 3.3, we introduce a mirror interpolant in which the base $\rho_0$ and the target $\rho_1$ are identical. Finally, in Section 3.4, we show how the interpolant framework leads to a natural formulation of the Schrödinger bridge problem between two densities.

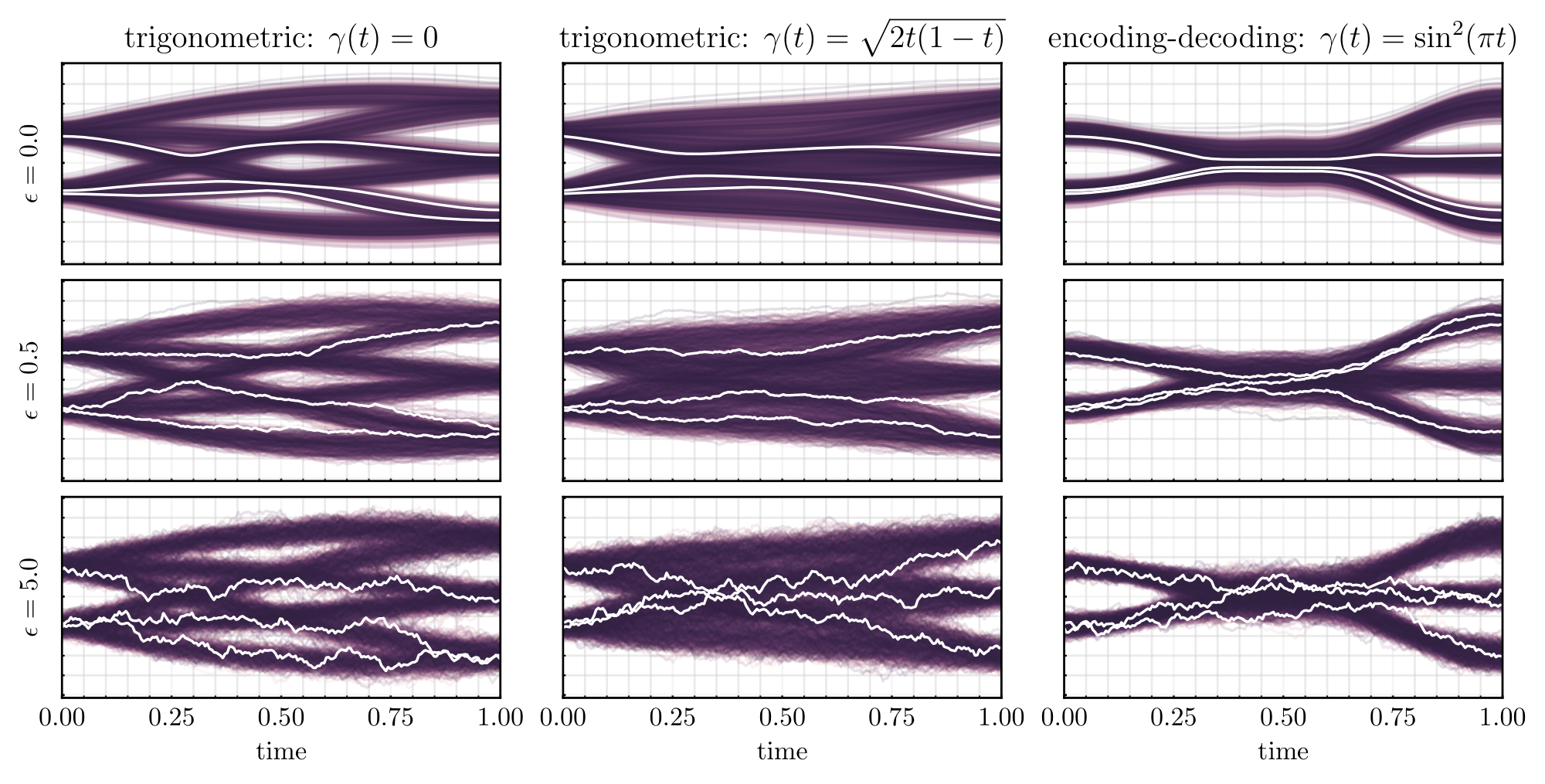

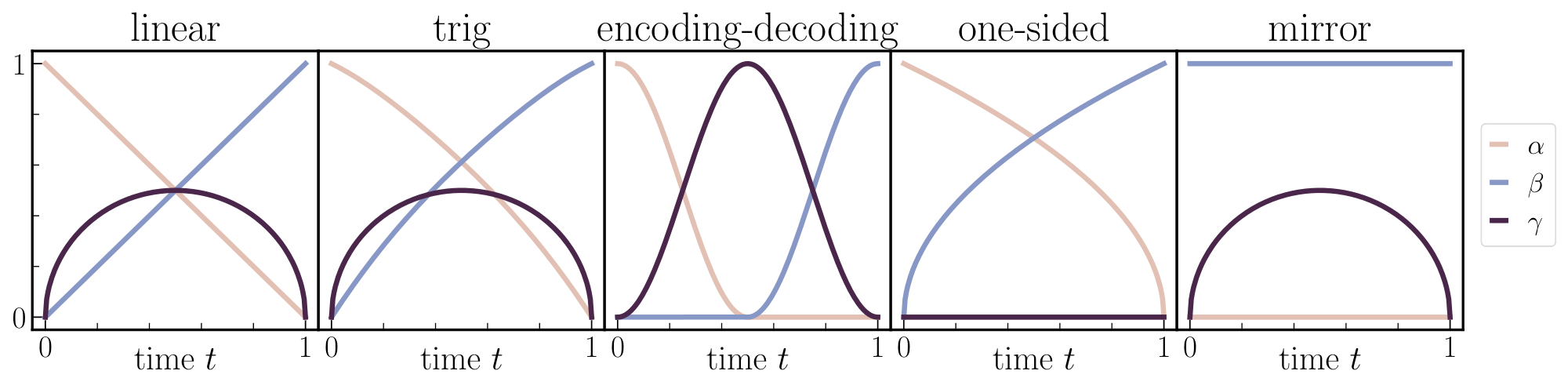

In Section 4, we discuss a special case in which the interpolant is spatially linear in $x_0$ and $x_1$. In this case, the velocity field can be factorized, which we show in Section 4.1 leads to a simpler learning problem. We detail specific choices of linear interpolants in Section 4.2, and in Section 4.3 we illustrate how these choices influence the performance of the resulting generative model, with a particular focus on the role of the latent variable and the diffusion coefficient. For exposition, we focus on Gaussian mixture densities, for which the drift coefficients can be computed analytically. We provide the resulting formula in Appendix A. Finally, in Section 4.4, we discuss the case of spatially linear one-sided interpolants.

In Section 5, we formalize the connection between stochastic interpolants and related classes of generative models. In Section 5.1, we show that score-based diffusion models can be re-written as one-sided interpolants after a reparameterization of time; we highlight how this approach eliminates singularities that appear when naively compressing score-based diffusion onto a finite-time interval. In Section 5.2, we show how interpolants can be used to derive the Bayes-optimal estimator for a denoiser, and we show how this approach can be iterated to create a generative model. In Section 5.3, we consider the possibility of rectifying the flow map of a learned generative model. We show that the rectification procedure does not change the underlying generative model, though it may change the time-dependent density of the interpolant.

In Section 6, we provide the details of practical algorithms associated with the mathematical results presented above. In Section 6.1, we describe how to numerically estimate the objectives given empirical datasets from the base and the target. In Section 6.2, we complement this discussion on learning with algorithms for sampling with the ODE or an SDE.

We provide numerical demonstrations in line with these recommendations in Section 7, and we conclude with some remarks in Section 8.

1.3 Related work

Deterministic Transport and Normalizing Flows.

Transport-based sampling and density estimation has its contemporary roots in Gaussianizing data via maximum entropy methods ([11, 12, 13, 14]). The change of measure under such transformation is the backbone of normalizing flow models. The first neural network realizations of these methods arose through imposing clever structure on the transformation to make the change of measure tractable in discrete, sequential steps ([15, 16, 17, 18, 19]). A continuous time version of this procedure was made possible by viewing the map $T= X_t(x)$ as the solution of an ODE ([20, 2]), whose parametric drift defining the transport is learned via maximum likelihood estimation. Training this way is intractable at scale, as computing the gradient of the objective via the adjoint method requires simulating an ODE. Various methods have introduced regularization on the path taken between the two densities to make the ODE solves more efficient ([21, 22, 23]), but the fundamental difficulty remains. We also work in continuous time; however, our approach allows us to learn the drift without simulation of the dynamics, and can be formulated at sample generation time through either deterministic or stochastic transport.

Stochastic Transport and Score-Based Diffusion Models (SBDMs).

Complementary to approaches based on deterministic maps, recent works have realized that connecting a data distribution to a Gaussian density can be viewed as the evolution of an Ornstein-Uhlenbeck (OU) process which gradually degrades samples from the distribution of interest to Gaussian noise ([24, 4, 3, 5]). The OU process specifies a path in the space of probability densities; this path is simple to traverse in the forward direction by addition of noise, and can be reversed if access to the score of the time-dependent density $\nabla\log\rho(t)$ is available. This score can be approximated through solution of a least-squares regression problem ([25, 9]), and the target can be sampled by reversing the path once the score has been learned. Interestingly, the resulting forward and backward stochastic processes have an equivalent formulation (at the distribution level) in terms of a deterministic probability flow equation, first noted by [26, 27, 28] and then applied in [29, 30, 31, 32]. The probability flow formulation is useful for density estimation and cross-entropy calculations, but it is worth noting that the probability flow and the reverse-time SDE will have densities that differ when using an approximate score. The SBDM framework, as it has been originally presented, has a number of features which are not a priori well motivated, including the dependence on mapping to a normal density, the complicated tuning of the time parameterization and noise scheduling ([33, 34]), and the choice of the underlying stochastic dynamics ([35, 10]).

Stochastic bridges.

Starting with ([36]) there has been some recent effort ([37, 38, 39]) to remove the dependence of SBDMs on the OU process via stochastic bridges, which can be used to connect two arbitrary densities in finite time. As another step in this direction, we observe here that the key idea behind SBDMs – the bridging of two densities via a time-dependent density whose evolution equation is available – can be generalized to a much wider class of processes in a straightforward and computationally accessible manner. This viewpoint highlights the key property that the construction of the bridge between the two densities is decoupled from the process used to sample it.

Stochastic Interpolants, Rectified Flows, and Flow matching.

Variants of the stochastic interpolant method presented in [1] were also presented in [7, 8]. In [7], a linear interpolant was proposed with a focus on straight paths. This was employed as a step toward rectifying the transport paths ([40]) through a procedure that improves sampling efficiency but introduces a bias. In [8], the interpolant picture was assembled from the perspective of conditional probability paths connecting to a Gaussian, where a noise convolution was used to improve the learning at the cost of biasing the method. Extensions of [8] were presented in [41] that generalize the method beyond the Gaussian base density. In the method proposed here, we introduce an unbiased means to incorporate noise into the process, both via the introduction of a latent variable into the stochastic interpolant and the inclusion of a tunable diffusion coefficient in the associated stochastic generative models. We provide theoretical and practical motivation for the presence of these noise terms.

Optimal Transport and Schrödinger Bridges.

There is both theoretical and practical interest in minimizing the transport cost of connecting $\rho_0$ and $\rho_1$. In the case of deterministic maps, this is characterized by the optimal transport problem, and in the case of diffusive maps, by the Schrödinger Bridge problem ([42, 43]). Formally, these two problems can be related by viewing the Schrödinger Bridge as an entropy-regularized optimal transport. Optimal transport has primarily been employed as a means to regularize flow-based methods by imposing either a path length penalty ([44, 22, 21, 23]) or structure on the parameterization itself ([45, 46]). A variety of recent works have formulated the Schrödinger problem in the context of a learnable diffusion ([47, 48, 49]). For the case of Gaussians, recent work has also identified an analytical solution ([50]). In the interpolant framework, ([1, 7, 8, 41]) all propose optimal transport extensions to the learning procedure. The method proposed in [7, 40] allows one to sequentially lower the transport cost through rectification, at the cost of introducing a bias unless the velocity field is perfectly learned. The method proposed in [1] is an unbiased framework at the cost of solving an additional optimization problem over the interpolant function. The statement of optimal transport in [8] only applies to Gaussians, but is shown to be practically useful in experimental demonstrations.

In the method proposed below, we provide two approaches for optimizing the transport under a stochastic dynamics. Our primary approach, based on the scheme introduced in [1], is presented in Section 3.4. It offers an alternative route to solve the Schrödinger bridge problem under the Benamou-Brenier hydrodynamic formulation of transport by maximizing over the interpolant ([51]). However, we stress that this additional optimization step is not necessary in practice, as our approach leads to bias-free generative models for any fixed interpolant. In addition, Section 5.3 discusses an unbiased variant of the rectification scheme proposed in [7].

Convergence bounds.

Inspired by the successes of score-based diffusion, significant recent research effort has been expended to understand the control that can be obtained on suitable distances between the distribution of the generative model and the target data distribution, such as $\mathsf{KL}$, $W_2$, or $\mathsf{TV}$. Perhaps the first line of work in this direction is [30], which showed that standard score-based diffusion training techniques bound the likelihood of the resulting SDE model. Importantly, as we show here, the likelihood of the corresponding probability flow is not bounded in general by this technique, as first highlighted in the context of SBDM by [52]. Control for SBDM-based techniques was later quantified more rigorously under the assumption of functional inequalities in a discretized setting by [53], which were removed by [54] and [55] via Girsanov-based techniques. Most relevant to the PDE-based methods considered here is [56], which applies similar techniques to our own in the SBDM context to obtain sharp guarantees with minimal assumptions.

1.4 Notation

Throughout, we denote probability density functions as $\rho_0(x)$, $\rho_1(x)$, and $\rho(t, x)$, with $t\in[0, 1]$ and $x\in {\mathbb{R}}^d$, omitting the function arguments when clear from the context. We proceed similarly for other functions of time and space, such as $b(t, x)$ or $I(t, x_0, x_1)$. We use the subscript $t$ to denote the time-dependency of stochastic processes, such as the stochastic interpolant $x_t$ or the Wiener process $W_t$. To specify that the random variable $x_0$ is drawn from the probability distribution with density $\rho_0$, say, with a slight abuse of notation we use $x_0\sim\rho_0$. Similarly, we use ${\sf N}(0, \text{\it Id})$ to denote both the density and the distribution of the Gaussian random variable with mean zero and covariance identity. We denote expectation by ${\mathbb{E}}$, and usually specify the random variables this expectation is taken over. With a slight abuse of terminology, we say that the law of the process $x_t$ is $\rho(t)$ if $\rho(t)$ is the density of the probability distribution of $x_t$ at time $t$.

We use standard notation for function spaces: for example, $C^1([0, 1])$ is the space of continuously differentiable functions from $[0, 1]$ to ${\mathbb{R}}$, $(C^2({\mathbb{R}}^d))^d$ is the space of twice continuously differentiable functions from ${\mathbb{R}}^d$ to ${\mathbb{R}}^d$, and $C^p_0({\mathbb{R}}^d)$ is the space of compactly supported functions from ${\mathbb{R}}^d$ to ${\mathbb{R}}$ that are continuously differentiable $p$ times. Given a function $b:[0, 1]\times {\mathbb{R}}^d \to {\mathbb{R}}^d$ with value $b(t, x)$ at $(t, x)$, we use $b \in C^1([0, 1]; (C^2({\mathbb{R}}^d))^d)$ to indicate that $b$ is continuously differentiable in $t$ for all $(t, x)\in[0, 1]\times {\mathbb{R}}^d$ and that $b(t, \cdot)$ is an element of $(C^2({\mathbb{R}}^d))^d$ for all $t\in[0, 1]$.

2. Stochastic interpolant framework

Section Summary: This section introduces the stochastic interpolant framework, a method to bridge two probability distributions, like starting data and a target one, through a random process that begins at a sample from the first and ends at a sample from the second. The process follows a smooth path interpolated between the endpoints, with added Gaussian noise that rises and falls over time to create variability in between, while ensuring the overall path stays controlled and useful for building generative models. It also defines the evolving distribution of points along this path and conditional expectations to study its properties.

2.1 Definitions and assumptions

We begin by defining the stochastic processes that are central to our approach:

########## {caption="Definition 1: Stochastic interpolant"}

Given two probability density functions $\rho_0, \rho_1 : {{\mathbb{R}}^d} \rightarrow {\mathbb{R}}_{\geq 0}$, a stochastic interpolant between $\rho_0$ and $\rho_1$ is a stochastic process $x_t$ defined as

$ x_t = I(t, x_0, x_1) + \gamma(t) z, \qquad t\in [0, 1],\tag{2} $

where:

- $I \in C^2([0, 1], (C^2({\mathbb{R}}^d\times {\mathbb{R}}^d))^d)$ satisfies the boundary conditions $I(0, x_0, x_1) = x_0$ and $I(1, x_0, x_1) = x_1$, as well as

$ \begin{aligned} &\exists C_1<\infty \ : &&|\partial_t I(t, x_0, x_1)|\le C_1|x_0-x_1| \quad &&\forall (t, x_0, x_1) \in [0, 1]\times {\mathbb{R}}^d \times {\mathbb{R}}^d. \end{aligned}\tag{3} $

- $\gamma: [0, 1] \to {\mathbb{R}} $ satisfies $\gamma(0)=\gamma(1) = 0$, $\gamma(t) > 0$ for all $t \in (0, 1)$, and $\gamma^2\in C^2([0, 1])$.

- The pair $(x_0, x_1)$ is drawn from a probability measure $\nu$ that marginalizes on $\rho_0$ and $\rho_1$, i.e.

$ \nu(dx_0, {\mathbb{R}}^d) = \rho_0(x_0) dx_0, \qquad \nu({\mathbb{R}}^d, dx_1) = \rho_1(x_1) dx_1.\tag{4} $

- $z$ is a Gaussian random variable independent of $(x_0, x_1)$, i.e. $z\sim {\sf N}(0, \text{\it Id})$ and $z\perp(x_0, x_1)$.

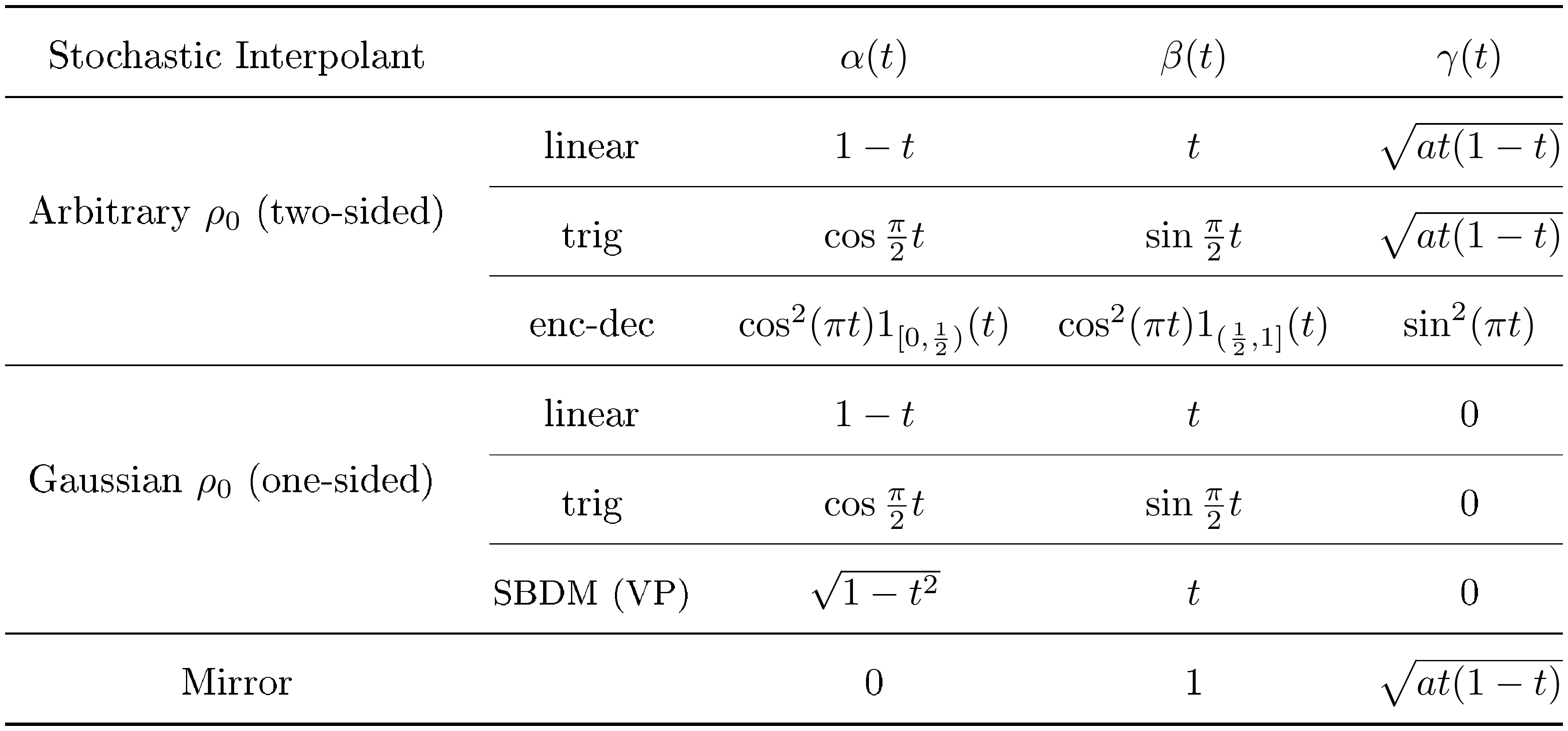

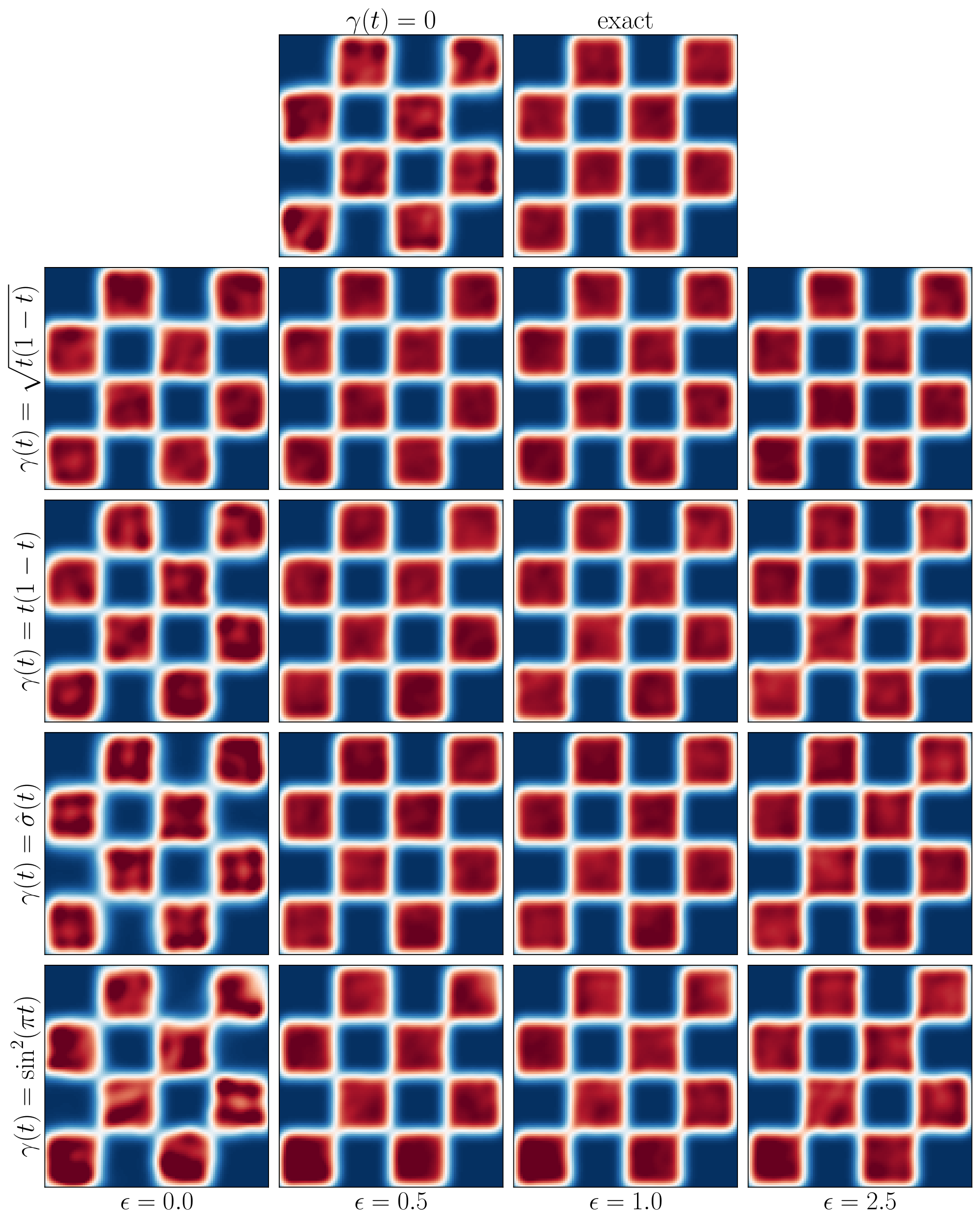

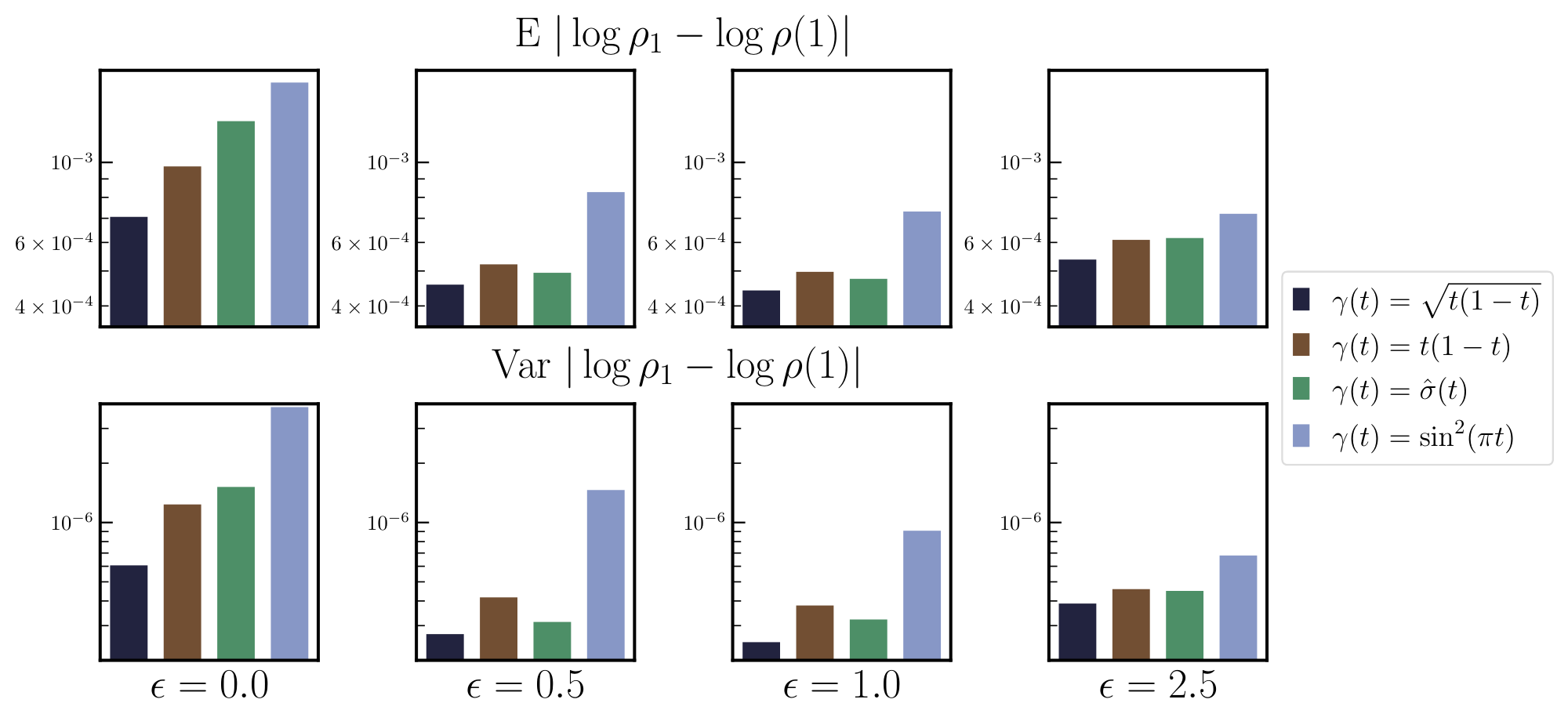

Equation 3 states that $I(t, x_0, x_1)$ does not move too fast along the way from $x_0$ at $t=0$ to $x_1$ at $t=1$, and as a result does not wander too far from either endpoint – this assumption is made for convenience but is not necessary for most arguments below. Later, we will find it useful to consider choices for $I$ that are spatially nonlinear, which we show can recover the solution to the Schrödinger bridge problem. Nevertheless, a simple example that serves as a valid $I$ in the sense of Definition 1 is given in Equation 1. The measure $\nu$ allows for a coupling between the two densities $\rho_0$ and $\rho_1$, which affects the properties of the stochastic interpolant, but a simple choice is to take the product measure $\nu(dx_0, dx_1) = \rho_0(x_0) \rho_1(x_1) dx_0dx_1$, in which case $x_0$ and $x_1$ are independent. In Section 6 we discuss how to design the stochastic interpolant in Equation 2 and state some properties of the corresponding process $x_t$. Examples of stochastic interpolants are also shown in Figure 2 for various choices of $I$ and $\gamma$.
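As a short check of Definition 1, note that the example in Equation 1 corresponds to the choices $I(t, x_0, x_1) = (1-t)x_0 + t x_1$ and $\gamma(t) = \sqrt{2t(1-t)}$. The conditions can then be verified directly:

$ \begin{aligned} I(0, x_0, x_1) &= x_0, \qquad I(1, x_0, x_1) = x_1, \\ |\partial_t I(t, x_0, x_1)| &= |x_1 - x_0|, \quad \text{so Equation 3 holds with } C_1 = 1, \\ \gamma(0) &= \gamma(1) = 0, \qquad \gamma(t) > 0 \ \text{ for } t\in(0, 1), \\ \gamma^2(t) &= 2t - 2t^2 \in C^2([0, 1]). \end{aligned} $

Here $\gamma$ itself is not differentiable at the endpoints, since $\dot\gamma(t) = (1-2t)/\sqrt{2t(1-t)}$ diverges as $t\to 0$ or $t\to 1$. This is consistent with the regularity requirement in Definition 1 being placed on $\gamma^2$ rather than on $\gamma$.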

########## {caption="Remark: Comparison with [1]"}

The main difference between the stochastic interpolant defined in Equation 2 and the one originally introduced in [1] is the inclusion of the latent variable $\gamma(t) z$. Many of the results below also hold when we set $\gamma(t)z=0$, but the objective of the present paper is to elucidate the advantages that this additional term provides when neither of the endpoints is Gaussian. We note that we could generalize the construction by making $\gamma(t)$ a tensor; here we focus on the scalar case for simplicity. Another difference is the possibility to couple $\rho_0$ and $\rho_1$ via $\nu$. While the latent variable can be drawn from any noise distribution, as we will see, it will be convenient to choose it to be a Gaussian.

![**Figure 2:** **Design flexibility.** An illustration of how stochastic interpolants can be tailored to specific aims. All examples show one realization of $x_t$ with one $x_0\sim\rho_0$, one $x_1\sim \rho_1$ (the flowers at the left and right of the figures), and one $z\sim {\sf N}(0, \text{\it Id})$. *Top, Upper middle, and lower middle*: various interpolants, ranging from direct interpolation with no latent variable (as in [1]) to Gaussian encoding-decoding in which the data transitions to pure noise at the mid-point. *Bottom*: one-sided interpolant, which connects with score-based diffusion methods.](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/mnaaawv5/various_interpolants_final.png)

The stochastic interpolant $x_t$ in Equation 2 is a continuous-time stochastic process whose realizations are samples from $\rho_0$ at time $t=0$ and from $\rho_1$ at time $t=1$ by construction. As a result, it offers a way to bridge $\rho_0$ and $\rho_1$ – we are interested in characterizing the law of $x_t$ over the full interval $[0, 1]$, as it will allow us to design generative models. Mathematically, we want to characterize the properties of the time-dependent probability distribution $\mu(t, dx)$ such that

$ \forall t \in [0, 1] \quad : \quad \int_{{\mathbb{R}}^d} \phi(x) \mu(t, dx) = {\mathbb{E}} [\phi(x_t)] \quad \text{for any test function} \quad \phi \in C^\infty_0({\mathbb{R}}^d),\tag{5} $

where $x_t$ is defined in Equation 2 and the expectation is taken independently over $(x_0, x_1)\sim\nu$, and $z\sim {\sf N}(0, \text{\it Id})$. To this end, we will need to use conditional expectations over $x_t$ [^1], as described in the following definition.

[^1]: Formally, in terms of the Dirac delta distribution, we can write

$ {\mathbb{E}}\left[f(t, x_0, x_1, z)| x_t=x\right] = \frac{{\mathbb{E}}\left[f(t, x_0, x_1, z)\delta(x-x_t)\right]}{{\mathbb{E}}[\delta(x-x_t)]} $

and in this notation we also have $\mu(t, x) = {\mathbb{E}}[\delta(x-x_t)]$.

########## {caption="Definition 2"}

Given any $f:[0, 1]\times {\mathbb{R}}^d\times {\mathbb{R}}^d\times {\mathbb{R}}^d\to {\mathbb{R}}$, its conditional expectation ${\mathbb{E}}\left[f(t, x_0, x_1, z)| x_t=x\right]$ is the function of $x$ such that, for any test function $\phi \in C^\infty_0({\mathbb{R}}^d)$, we have

$ \forall t \in [0, 1] \quad : \quad \int_{{\mathbb{R}}^d} \phi(x) {\mathbb{E}}\left[f(t, x_0, x_1, z)| x_t=x\right] \mu(t, dx) = {\mathbb{E}}[\phi(x_t) f(t, x_0, x_1, z)],\tag{6} $

where $\mu(t, dx)$ is the time-dependent distribution of $x_t$ defined by Equation 5, and the expectation on the right-hand side is taken independently over $(x_0, x_1)\sim\nu$ and $z\sim {\sf N}(0, \text{\it Id})$ with $x_t$ given by Equation 2.

Vector-valued functions have conditional expectations that are defined analogously. Note that, with our definition, ${\mathbb{E}}\left[f(t, x_0, x_1, z)| x_t=x\right]$ is a deterministic function of $(t, x)\in[0, 1]\times {\mathbb{R}}^d$, not to be confused with the random variable ${\mathbb{E}}\left[f(t, x_0, x_1, z)| x_t\right]$ that can be defined analogously.

########## {caption="Remark 3"}

Another seemingly more general way to define the stochastic interpolant is via

$ x^\mathsf{d}_t = I(t, x_0, x_1)+ N_t\tag{7} $

where $N: [0, 1]\to {\mathbb{R}}^d$ is a zero-mean Gaussian stochastic process constrained to satisfy $N_{t=0}=N_{t=1}=0$. As we will show below, our construction only depends on the single-time properties of $N_t$, which are completely specified by ${\mathbb{E}} [N_t N^\mathsf{T}_t]$. That is, if we take $\gamma(t)$ in Equation 2 such that ${\mathbb{E}} [N_t N^\mathsf{T}_t] = \gamma^2(t)\text{\it Id}$, then the probability distribution of $x_t$ will coincide with that of $x^\mathsf{d}_t$ defined in Equation 7, $x_t \stackrel{\text{d}}{=} x^\mathsf{d}_t$. For example, taking $\gamma(t) = \sqrt{t(1-t)}$ in Equation 2 – a choice we will consider below in Section 3.4 – is equivalent to choosing $N_t$ to be a Brownian bridge in Equation 7, i.e. the stochastic process realizable in terms of the Wiener process $W_t$ as $N_t = W_t - t W_1$. This observation will also help us draw an analogy between our approach and the construction used in score-based diffusion models as well as methods based on stochastic bridges. As we will show in Section 3.4, it is simpler for both analysis and practical implementation to work with the definition Equation 2 for $x_t$.
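The Brownian bridge claim can be checked directly from the single-time covariance of $N_t = W_t - tW_1$, using only the standard identity ${\mathbb{E}}[W_s W_t^\mathsf{T}] = \min(s, t)\,\text{\it Id}$ for the Wiener process:

$ {\mathbb{E}}[N_t N_t^\mathsf{T}] = {\mathbb{E}}[W_t W_t^\mathsf{T}] - 2t\, {\mathbb{E}}[W_t W_1^\mathsf{T}] + t^2\, {\mathbb{E}}[W_1 W_1^\mathsf{T}] = \left(t - 2t^2 + t^2\right)\text{\it Id} = t(1-t)\,\text{\it Id}, $

which matches $\gamma^2(t)\,\text{\it Id}$ for $\gamma(t) = \sqrt{t(1-t)}$.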

To proceed, we will make the following assumption on the densities $\rho_0$, $\rho_1$, and the interplay between the measure $\nu$ and the function $I$:

########## {caption="Assumption 4"}

The densities $\rho_0$ and $\rho_1$ are strictly positive elements of $C^2({\mathbb{R}}^d)$ and are such that

$ \int_{{\mathbb{R}}^d} |\nabla \log \rho_0(x)|^2 \rho_0(x) dx < \infty \quad\text{and} \quad \int_{{\mathbb{R}}^d} |\nabla \log \rho_1(x)|^2 \rho_1(x) dx < \infty.\tag{8} $

The measure $\nu$ and the function $I$ are such that

$ \exists M_1, M_2 < \infty \ \ : \ \ {\mathbb{E}}\big[|\partial_t I(t, x_0, x_1)|^4\big] \le M_1; \quad {\mathbb{E}}\big[|\partial^2_t I(t, x_0, x_1)|^2\big] \le M_2, \quad \forall t\in [0, 1],\tag{9} $

where the expectation is taken over $(x_0, x_1)\sim\nu$.

Note that for the interpolant Equation 1, Assumption 4 holds if $\rho_0$ and $\rho_1$ both have finite fourth moments.

![**Figure 3:** **Algorithmic implementation.** A simple overview of suggested implementation strategies. For deterministic sampling, a single velocity field $b$ can be learned by minimizing the empirical loss in the top row. For stochastic sampling, the velocity field $b$, along with the denoiser $\eta_z$, can be learned by minimizing the two empirical losses specified in the bottom row. To sample deterministically, off-the-shelf ODE integrators can be used to integrate the probability flow equation. To sample stochastically, the listed SDE can be integrated using standard techniques such as the Euler-Maruyama method or the Heun sampler introduced in [10]. The time-dependent diffusion coefficient $\epsilon(t)$ can be specified *after learning* to maximize sample quality.](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/mnaaawv5/flow_chart_combined_reduced.png)

2.2 Transport equations, score, and quadratic objectives

We now state a result that specifies some important properties of the probability distribution of the stochastic interpolant $x_t$:

########## {caption="Theorem 5: Stochastic interpolant properties"}

The probability distribution of the stochastic interpolant $x_t$ defined in Equation 2 is absolutely continuous with respect to the Lebesgue measure at all times $t\in[0, 1]$ and its time-dependent density $\rho(t)$ satisfies $\rho(0) = \rho_0$, $\rho(1) = \rho_1$, $\rho \in C^1([0, 1];C^p({\mathbb{R}}^d))$ for any $p\in {\mathbb{N}}$, and $\rho(t, x) > 0$ for all $(t, x) \in [0, 1]\times {\mathbb{R}}^d$. In addition, $\rho$ solves the transport equation

$ \partial_t \rho + \nabla \cdot \left(b\rho\right) = 0,\tag{10} $

where we defined the velocity

$ b(t, x) = {\mathbb{E}} [\dot{x}_t| x_t = x] = {\mathbb{E}} [\partial_t I(t, x_0, x_1) + \dot{\gamma}(t) z| x_t = x].\tag{11} $

This velocity is in $C^0([0, 1];(C^p({\mathbb{R}}^d))^d)$ for any $p\in {\mathbb{N}}$, and such that

$ \forall t\in [0, 1] \quad : \quad \int_{{\mathbb{R}}^d} |b(t, x)|^2 \rho(t, x) dx < \infty.\tag{12} $

Note that this theorem means that we can write Equation 5 as

$ \forall t \in [0, 1] \quad : \quad \int_{{\mathbb{R}}^d} \phi(x) \rho(t, x)dx = {\mathbb{E}} \phi(x_t) \quad \text{for any test function} \quad \phi \in C^\infty_0({\mathbb{R}}^d).\tag{13} $

The transport equation 10 can be solved either forward in time from the initial condition $\rho(0)=\rho_0$, in which case $\rho(1)=\rho_1$, or backward in time from the final condition $\rho(1)=\rho_1$, in which case $\rho(0)=\rho_0$.

The proof of Theorem 5 is given in Appendix B.1; it mostly relies on manipulations involving the characteristic function of the stochastic interpolant $x_t$. The transport equation 10 for $\rho$ leads to methods for generative modeling and density estimation, as explained in Sections 2.3 and 2.5, provided that we can estimate the velocity $b$. This velocity is explicitly available only in special cases, for example when $\rho_0$ and $\rho_1$ are both Gaussian mixture densities: this case is treated in Appendix A. In general $b$ must be calculated numerically, which can be performed via empirical risk minimization of a quadratic objective function, as characterized by our next result:

########## {caption="Theorem 6: Objective"}

The velocity $b$ defined in Equation 11 is the unique minimizer in $C^0([0, 1];(C^1({\mathbb{R}}^d))^d)$ of the quadratic objective

$ \mathcal{L}_b[\hat{b}] =\int_0^1 {\mathbb{E}} \left(\tfrac12|\hat{b}(t, x_t)|^2 - \left(\partial_t I(t, x_0, x_1) + \dot{\gamma}(t) z \right) \cdot \hat{b}(t, x_t) \right) dt\tag{14} $

where $x_t$ is defined in Equation 2 and the expectation is taken independently over $(x_0, x_1)\sim\nu$ and $z\sim {\sf N}(0, \text{\it Id}).$
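The mechanism behind this statement can be sketched in one line. Since $b(t, x_t)$ is the conditional expectation of $\partial_t I(t, x_0, x_1) + \dot{\gamma}(t) z$ given $x_t$, the tower property lets us replace this quantity by $b(t, x_t)$ inside the expectation in Equation 14, and completing the square gives

$ \mathcal{L}_b[\hat{b}] = \int_0^1 {\mathbb{E}} \left(\tfrac12|\hat{b}(t, x_t)|^2 - b(t, x_t) \cdot \hat{b}(t, x_t) \right) dt = \tfrac12 \int_0^1 {\mathbb{E}}\, |\hat{b}(t, x_t) - b(t, x_t)|^2 dt - \tfrac12 \int_0^1 {\mathbb{E}}\, |b(t, x_t)|^2 dt. $

The last term does not depend on $\hat{b}$, so the objective is minimized exactly when $\hat{b} = b$ on the support of $x_t$.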

The proof of Theorem 6 is given in Appendix B.1: it relies on the definitions of $b$ in Equation 11, as well as the definition of $\rho$ in Equation 13 and some elementary properties of the conditional expectation. We discuss how to estimate the objective function Equation 14 in practice in Section 6. Interestingly, we also have access to the score of the probability density, as shown by our next result:

########## {caption="Theorem 7: Score"}

The score of the probability density $\rho$ specified in Theorem 5 is in $C^1([0, 1];(C^p({\mathbb{R}}^d))^d)$ for any $p\in {\mathbb{N}}$ and given by

$ s(t, x) = \nabla \log\rho(t, x) = - \gamma^{-1}(t) {\mathbb{E}}(z |x_t=x) \quad \forall (t, x)\in(0, 1)\times {\mathbb{R}}^d\tag{15} $

In addition it satisfies

$ \forall t\in [0, 1] \quad : \quad \int_{{\mathbb{R}}^d} |s(t, x)|^2 \rho(t, x) dx < \infty,\tag{16} $

and is the unique minimizer in $C^1([0, 1];(C^1({\mathbb{R}}^d))^d)$ of the quadratic objective

$ \mathcal{L}_s[\hat{s}] = \int_0^1 {\mathbb{E}}\left(\tfrac12|\hat{s}(t, x_t)|^2 +\gamma^{-1}(t) z\cdot \hat{s}(t, x_t) \right) dt\tag{17} $

where $x_t$ is defined in Equation 2 and the expectation is taken independently over $(x_0, x_1)\sim\nu$ and $z\sim {\sf N}(0, \text{\it Id})$.

The proof of Theorem 7 is given in Appendix B.1. We stress that the objective function is well defined despite the fact that $\gamma(0)=\gamma(1) =0$: see Section 6 for more details about how to evaluate this objective in practice.

########## {caption="Remark 8: Denoiser"}

The quantity

$ \eta_z(t, x) = {\mathbb{E}}(z | x_t = x),\tag{18} $

will be referred to as the denoiser, for reasons that will be made clear in Section 5.2. This quantity gives access to the score on $t\in(0, 1)$ (where $\gamma(t)>0$) since, from Equation 15,

$ s(t, x) = - \gamma^{-1}(t) \eta_z(t, x). $

This denoiser is the minimizer of an equivalent expression to 17,

$ \mathcal{L}_{\eta_z}[\hat{\eta}_z] = \int_0^1 {\mathbb{E}}\left(\tfrac12|\hat{\eta}_z(t, x_t)|^2 - z\cdot \hat{\eta}_z(t, x_t) \right) dt.\tag{19} $

The denoiser $\eta_z$ is useful for numerical realizations. In particular, the objective in Equation 19 is easier to use than the one in Equation 17 because it does not contain the factor $\gamma^{-1}(t)$, which needs careful handling as $t$ approaches 0 and 1.
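To see why the two objectives target the same function, substitute $\hat{s} = -\gamma^{-1}(t)\hat{\eta}_z$ in the integrand of Equation 17:

$ \tfrac12|\hat{s}|^2 + \gamma^{-1}(t)\, z\cdot \hat{s} = \gamma^{-2}(t)\left(\tfrac12|\hat{\eta}_z|^2 - z\cdot \hat{\eta}_z\right). $

At each fixed $t\in(0, 1)$, the integrands of Equation 17 and Equation 19 therefore differ only by the positive factor $\gamma^{-2}(t)$, so they share the same pointwise minimizer. The two time-integrated objectives weight different times differently, which should only matter when the minimization is carried out approximately.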

Having access to the score immediately allows us to rewrite the TE Equation 10 as forward and backward Fokker-Planck equations, which we state as:

########## {caption="Corollary 9: Fokker-Planck equations"}

For any $\epsilon\in C^0([0, 1])$ with $\epsilon(t)\ge 0$ for all $t\in[0, 1]$, the probability density $\rho$ specified in Theorem 5 satisfies:

- The forward Fokker-Planck equation

$ \partial_t \rho + \nabla \cdot \left(b_{\mathsf{F}}\rho\right) = \epsilon(t) \Delta \rho, \qquad \rho(0) = \rho_0,\tag{20} $

where we defined the forward drift

$ b_\mathsf{F}(t, x) = b(t, x) + \epsilon(t) s(t, x).\tag{21} $

Equation 20 is well-posed when solved forward in time from $t=0$ to $t=1$, and its solution for the initial condition $\rho(t=0) = \rho_0$ satisfies $\rho(t=1) = \rho_1$.

- The backward Fokker-Planck equation

$ \partial_t \rho + \nabla \cdot \left(b_\mathsf{B}\rho\right) = -\epsilon(t) \Delta \rho, \qquad \rho(1) = \rho_1,\tag{22} $

where we defined the backward drift

$ b_\mathsf{B}(t, x) = b(t, x) - \epsilon(t) s(t, x).\tag{23} $

Equation 22 is well-posed when solved backward in time from $t=1$ to $t=0$, and its solution for the final condition $\rho(1) = \rho_1$ satisfies $\rho(0) = \rho_0$.

In Section 2.3 we will use the results of this theorem to design generative models based on forward and backward stochastic differential equations. Note that we can replace the diffusion coefficient $\epsilon(t)$ by a positive semi-definite tensor; also note that if we define $\rho_\mathsf{B}(t_\mathsf{B}, x) = \rho(1-t_\mathsf{B}, x)$, the reversed FPE Equation 22 can be written as

$ \partial_{t_\mathsf{B}} \rho_\mathsf{B}({t_\mathsf{B}}, x) - \nabla \cdot \left(b_\mathsf{B}(1-{t_\mathsf{B}}, x)\rho_\mathsf{B}({t_\mathsf{B}}, x)\right) = \epsilon(1-t_\mathsf{B}) \Delta \rho_\mathsf{B}({t_\mathsf{B}}, x), \qquad \rho_\mathsf{B}({t_\mathsf{B}}=0) = \rho_1,\tag{24} $

which is now well-posed forward in (reversed) time $t_\mathsf{B}$. So as to have only one definition of time $t$, it is more convenient to work with Equation 22.
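The passage from the TE Equation 10 to the FPE Equation 20 is a one-line computation. Since $s = \nabla \log \rho$, we have $s\rho = \nabla \rho$, so that

$ \nabla \cdot \left(b_\mathsf{F}\rho\right) = \nabla \cdot \left(b\rho\right) + \epsilon(t) \nabla \cdot \left(s\rho\right) = \nabla \cdot \left(b\rho\right) + \epsilon(t) \Delta \rho. $

Inserting this identity in Equation 20 and cancelling $\epsilon(t)\Delta\rho$ on both sides recovers Equation 10; the same computation with $\epsilon(t)$ replaced by $-\epsilon(t)$ gives Equation 22.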

Let us make a few remarks about the statements made so far:

########## {caption="Remark"}

If we set $\gamma(t)=0$ in $x_t$ (i.e., if we remove the latent variable), the stochastic interpolant Equation 2 reduces to the one originally considered in [1]. In this setup, the results above formally stand except that we cannot guarantee the spatial regularity of $b(t, x)$ and $s(t, x)$, since it relies on the presence of the latent variable (as shown in the proof of Theorem 5). Hence, we expect the introduction of the latent variable $\gamma(t) z$ to help for generative modeling, where the solution to the corresponding ODEs/SDEs will be better behaved, and for statistical approximation, since the targets $b$ and $s$ will be more regular. We will see in Section 6 that it also gives us much greater flexibility in the way we can bridge $\rho_0$ and $\rho_1$, which will enable us to design generative models with appealing properties.

########## {caption="Remark"}

We will see in Section 2.4 that the forward and backward FPE in Equation 20 and 22 are more robust than the TE in Equation 10 against approximation errors in the velocity $b$ and the score $s$, which has practical implications for generative models based on these equations.

########## {caption="Remark 10"}

We could also obtain $b(t, \cdot)$ at any $t\in[0, 1]$ by minimizing

$ {\mathbb{E}} \left(\tfrac12|\hat{b}(t, x_t)|^2 - \left(\partial_t I(t, x_0, x_1)+ \dot{\gamma}(t) z\right) \cdot \hat{b}(t, x_t) \right) \qquad t\in [0, 1]\tag{25} $

and $s(t, \cdot)$ at any $t\in(0, 1)$ by minimizing

$ {\mathbb{E}} \left(\tfrac12|\hat{s}(t, x_t)|^2 +\gamma^{-1}(t) z\cdot \hat{s}(t, x_t) \right) \qquad t\in (0, 1)\tag{26} $

Using the time-integrated versions of these objectives given in Equation 14 and 17 is more convenient numerically as it allows one to parameterize $\hat{b}$ and $\hat{s}$ globally for $(t, x)\in [0, 1]\times {\mathbb{R}}^d$.

########## {caption="Remark 11"}

From Equation 11 we can write

$ b(t, x) = v(t, x) - \dot{\gamma}(t) \gamma(t) s(t, x),\tag{27} $

where $s$ is the score given in Equation 15 and we defined the velocity field

$ v(t, x) = {\mathbb{E}} (\partial_t I(t, x_0, x_1) | x_t = x).\tag{28} $

The velocity field $v \in C^0([0, 1];(C^p({\mathbb{R}}^d))^d)$ for any $p\in {\mathbb{N}}$ and can be characterized as the unique minimizer of

$ \mathcal{L}_v[\hat{v}] = \int_0^1 {\mathbb{E}}\left(\tfrac12|\hat{v}(t, x_t)|^2 -\partial_tI(t, x_0, x_1) \cdot \hat{v}(t, x_t) \right) dt\tag{29} $

Learning this velocity and the score separately may be useful in practice.

########## {caption="Remark"}

The objectives in Equation 14 and 17 (as well as the ones in Equation 19 and 29) are amenable to empirical estimation if we have samples $(x_0, x_1)\sim\nu$, since in that case we can generate samples of $x_t= I(t, x_0, x_1) + \gamma(t) z$ at any time $t\in[0, 1]$. We will use this feature in the numerical experiments presented below.
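As an illustration of this remark, the following is a minimal NumPy sketch of how such objectives could be estimated by Monte Carlo. It is not the implementation used in the experiments. The linear choice $I(t, x_0, x_1) = (1-t)x_0 + t x_1$, the choice $\gamma(t) = \sqrt{2t(1-t)}$, the product coupling, and the function names `sample_interpolant` and `quadratic_loss` are all assumptions made for the example. With $\rho_0 = \rho_1 = {\sf N}(0, 1)$ in $d=1$, the interpolant satisfies $x_t \sim {\sf N}(0, 1)$ for all $t$, so the minimizers of Equation 29 and Equation 19 are available in closed form and both minimal values equal $-1/6$.

```python
import numpy as np

def sample_interpolant(x0, x1, rng):
    """Draw (t, z, x_t) for paired samples (x0, x1), each of shape (n, d)."""
    n, d = x0.shape
    t = rng.uniform(0.0, 1.0, size=(n, 1))     # t ~ U(0, 1): the time integral becomes a mean
    z = rng.standard_normal((n, d))            # latent variable z ~ N(0, Id)
    gamma = np.sqrt(2.0 * t * (1.0 - t))       # assumed choice of gamma(t)
    x_t = (1.0 - t) * x0 + t * x1 + gamma * z  # assumed linear I(t, x0, x1)
    return t, z, x_t

def quadratic_loss(f_hat, target, t, x_t):
    """Monte Carlo estimate of int_0^1 E[ 1/2 |f_hat|^2 - target . f_hat ] dt."""
    f = f_hat(t, x_t)
    return np.mean(0.5 * np.sum(f**2, axis=1) - np.sum(target * f, axis=1))

rng = np.random.default_rng(0)
n, d = 200_000, 1
x0 = rng.standard_normal((n, d))   # samples from rho_0 = N(0, 1)
x1 = rng.standard_normal((n, d))   # independent samples from rho_1 = N(0, 1)
t, z, x_t = sample_interpolant(x0, x1, rng)

# Closed-form minimizers for this Gaussian toy problem (x_t ~ N(0, 1) for all t).
v_true = lambda t, x: (2.0 * t - 1.0) * x                  # v = E[x1 - x0 | x_t = x]
eta_true = lambda t, x: np.sqrt(2.0 * t * (1.0 - t)) * x   # eta_z = E[z | x_t = x]

loss_v = quadratic_loss(v_true, x1 - x0, t, x_t)   # Equation 29, target = d/dt I
loss_eta = quadratic_loss(eta_true, z, t, x_t)     # Equation 19, target = z
loss_v_zero = quadratic_loss(lambda t, x: 0.0 * x, x1 - x0, t, x_t)  # a non-optimal candidate
```

The same `quadratic_loss` helper would cover Equation 14 by passing `target = (x1 - x0) + gamma_dot * z`. For this particular $\gamma$ the factor $\dot{\gamma}(t)$ is unbounded near $t=0$ and $t=1$, so the sampling of $t$ would need some care there.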

########## {caption="Remark 12"}

Since $s$ is the score of $\rho$, an alternative objective to estimate it is ([25])

$ \int_0^1 {\mathbb{E}}\left(|\hat{s}(t, x_t)|^2 +2\nabla \cdot \hat{s}(t, x_t) \right)dt.\tag{30} $

The derivation of Equation 30 is standard: for the reader's convenience we recall it at the end of Appendix B.1. The advantage of using Equation 17 over Equation 30 is that it does not require us to take the divergence of $\hat{s}$.
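For orientation, the key step in that derivation is an integration by parts, assuming that boundary terms vanish:

$ {\mathbb{E}}\left[s(t, x_t)\cdot \hat{s}(t, x_t)\right] = \int_{{\mathbb{R}}^d} \nabla \rho(t, x) \cdot \hat{s}(t, x) dx = - \int_{{\mathbb{R}}^d} \rho(t, x) \nabla \cdot \hat{s}(t, x) dx = - {\mathbb{E}}\left[\nabla \cdot \hat{s}(t, x_t)\right], $

so that ${\mathbb{E}}|\hat{s}(t, x_t) - s(t, x_t)|^2 = {\mathbb{E}}\left(|\hat{s}(t, x_t)|^2 + 2 \nabla \cdot \hat{s}(t, x_t)\right) + {\mathbb{E}}|s(t, x_t)|^2$, where the last term is independent of $\hat{s}$.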

########## {caption="Remark 13: Energy-based models"}

By definition, the score $s(t, x)=\nabla \log \rho(t, x)$ is a gradient field. As a result, if we model $\hat{s}(t, x) = -\nabla \hat{E}(t, x) $, we can turn Equation 17 into an objective function for $\hat{E}(t, x)$

$ \mathcal{L}_E[\hat{E}] = \int_0^1 {\mathbb{E}}\left(\tfrac12|\nabla \hat{E}(t, x_t)|^2 -\gamma^{-1}(t) z\cdot \nabla\hat{E}(t, x_t) \right) dt\tag{31} $

This objective is invariant to constant shifts in $\hat{E}$ and should therefore be minimized under some constraint, such as $\min_x \hat{E}(t, x) =0$ for all $t\in [0, 1]$. The minimizer of Equation 31 provides us with an energy-based model (EBM) ([57, 58]) that can in principle be used to sample the PDF of the stochastic interpolant, $\rho(t, x)$, at any fixed $t\in [0, 1]$ using e.g. Langevin dynamics. We will not exploit this possibility here, and instead rely on generative models to sample $\rho(t, x)$, as discussed next in Section 2.3.

2.3 Generative models

Our next result is a direct consequence of Theorem 5, and it shows how to design generative models using the stochastic processes associated with the TE Equation 10, the forward FPE Equation 20, and the backward FPE Equation 22:

########## {caption="Corollary 14: Generative models"}

At any time $t\in[0, 1]$, the law of the stochastic interpolant $x_t$ coincides with the law of the three processes $X_t$, $X^\mathsf{F}_t$, and $X^\mathsf{B}_t$, respectively defined as:

- The solutions of the probability flow associated with the transport equation 10

$ \frac{d}{dt} X_t = b(t, X_t),\tag{32} $

solved either forward in time from the initial data $X_{t=0} \sim\rho_0$ or backward in time from the final data $X_{t=1} = x_1\sim\rho_1$.

- The solutions of the forward SDE associated with the FPE Equation 20

$ dX^\mathsf{F}_t = b_\mathsf{F}(t, X^\mathsf{F}_t)dt + \sqrt{2 \epsilon(t)} \, dW_t,\tag{33} $

solved forward in time from the initial data $X^\mathsf{F}_{t=0}\sim\rho_0$ independent of $W$.

- The solutions of the backward SDE associated with the backward FPE Equation 22

$ dX^\mathsf{B}_t = b_\mathsf{B}(t, X^\mathsf{B}_t)dt + \sqrt{2 \epsilon(t)} \, dW^\mathsf{B}_t, \quad W_t^\mathsf{B} = -W_{1-t},\tag{34} $

solved backward in time from the final data $X^\mathsf{B}_{t=1}\sim\rho_1$ independent of $W^\mathsf{B}$; the solution of Equation 34 is by definition $X^\mathsf{B}_{t}= Z^\mathsf{F}_{1-t}$ where $Z^\mathsf{F}_t$ satisfies

$ dZ^\mathsf{F}_t = -b_\mathsf{B}(1-t, Z^\mathsf{F}_t)dt+ \sqrt{2 \epsilon(t)} \, dW_t,\tag{35} $

solved forward in time from the initial data $Z^\mathsf{F}_{t=0}\sim \rho_1$ independent of $W$.

To avoid repeated applications of the transformation $t\mapsto1-t$, it is convenient to work with Equation 34 directly using the reversed Itô calculus rules stated in the following lemma, which follows from the results in [59] and is proven in Appendix B.2:

########## {caption="Lemma 15: Reverse Itô Calculus"}

If $X^\mathsf{B}_t $ solves the backward SDE Equation 34:

- For any $f\in C^1([0, 1];C_0^2({\mathbb{R}}^d))$ and $t\in[0, 1]$, the backward Itô formula holds

$ df(t, X^\mathsf{B}_t) = \partial_t f(t, X^\mathsf{B}_t) dt+ \nabla f(t, X^\mathsf{B}_t) \cdot dX^\mathsf{B}_t - \epsilon(t) \Delta f(t, X^\mathsf{B}_t) dt.\tag{36} $

- For any $g\in C^0([0, 1];(C_0({\mathbb{R}}^d))^d)$ and $t\in[0, 1]$, the backward Itô isometries hold:

$ \begin{aligned} {\mathbb{E}}^x_\mathsf{B}\int_t^1 g(t, X^\mathsf{B}_t) \cdot dW^\mathsf{B}_t = 0; \qquad {\mathbb{E}}^x_\mathsf{B}\left|\int_t^1 g(t, X^\mathsf{B}_t)\cdot dW^\mathsf{B}_t\right|^2 &= \int_t^1 {\mathbb{E}}^x_\mathsf{B}\left|g(t, X^\mathsf{B}_t)\right|^2 dt, \end{aligned}\tag{37} $

where ${\mathbb{E}}^x_\mathsf{B}$ denotes expectation conditioned on the event $X_{t=1}^\mathsf{B} = x$.

The relevance of Corollary 14 for generative modeling is clear. Assuming, for example, that $\rho_0$ is a simple density that can be sampled easily (e.g. a Gaussian or a Gaussian mixture density), we can use the ODE Equation 32 or the SDE Equation 33 to push these samples forward in time and generate samples from a complex target density $\rho_1$. In Section 2.5, we will show how to use the ODE Equation 32 or the reverse SDE Equation 34 to estimate $\rho_1$ at any $x\in {\mathbb{R}}^d$ assuming that we can evaluate $\rho_0$ at any $x\in {\mathbb{R}}^d$. We will also show how similar ideas can be used to estimate the cross entropy between $\rho_0$ and $\rho_1$.
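To make the sampling procedure concrete, here is a minimal NumPy sketch of an Euler-Maruyama integrator for the forward SDE Equation 33 with constant $\epsilon$; setting $\epsilon = 0$ reduces it to forward Euler for the ODE Equation 32. The function name `integrate_forward_sde` and the test fields are assumptions for illustration: instead of learned drifts, the example uses the Gaussian path $\rho(t) = {\sf N}(0, (1+t)^2)$ in $d=1$, for which $b(t, x) = x/(1+t)$ and $s(t, x) = -x/(1+t)^2$ are known in closed form and satisfy the TE Equation 10.

```python
import numpy as np

def integrate_forward_sde(b, s, eps, x_init, n_steps, rng):
    """Euler-Maruyama for dX = (b + eps * s)(t, X) dt + sqrt(2 eps) dW on [0, 1].

    With eps = 0 the scheme is forward Euler for the probability flow ODE.
    """
    x = np.array(x_init, dtype=float)
    h = 1.0 / n_steps
    for k in range(n_steps):
        t = k * h
        drift = b(t, x) + eps * s(t, x)        # forward drift b_F = b + eps * s
        noise = rng.standard_normal(x.shape)   # Brownian increment / sqrt(h)
        x = x + h * drift + np.sqrt(2.0 * eps * h) * noise
    return x

# Closed-form fields for the toy Gaussian path rho(t) = N(0, (1 + t)^2), d = 1.
b = lambda t, x: x / (1.0 + t)
s = lambda t, x: -x / (1.0 + t) ** 2

rng = np.random.default_rng(0)
x_init = rng.standard_normal((50_000, 1))                    # samples from rho_0 = N(0, 1)
x_ode = integrate_forward_sde(b, s, 0.0, x_init, 200, rng)   # deterministic sampler
x_sde = integrate_forward_sde(b, s, 0.5, x_init, 200, rng)   # stochastic sampler
```

Both samplers should return samples whose standard deviation is close to 2, the standard deviation of $\rho_1 = {\sf N}(0, 4)$ in this toy problem, even though the individual trajectories differ.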

########## {caption="Remark"}

We stress that the stochastic interpolant $x_t$, the solution $X_t$ to the ODE Equation 32, and the solutions $X^\mathsf{F}_t$ and $X^\mathsf{B}_t$ of the forward and backward SDEs Equation 33 and 34 are different stochastic processes, but their laws all coincide with $\rho(t)$ at any time $t\in[0, 1]$. This is all that matters when applying these processes as generative models. However, the fact that these processes are different has implications for the accuracy of the numerical integration used to sample from them at any $t$ as well as for the propagation of statistical errors (see also the next remark).

Generative models based on solutions $X_t$ to the ODE Equation 32, solutions $X^\mathsf{F}_t$ to the forward SDE Equation 33, and solutions $X^\mathsf{B}_t$ to the backward SDE Equation 34 will typically involve drifts $b$, $b_\mathsf{F}$, and $b_\mathsf{B}$ that are, in practice, imperfectly estimated via minimization of Equation 14 and 17 over finite datasets. It is important to estimate how this statistical estimation error propagates to errors in sample quality, and how the propagation of error depends on the generative model used, which is the object of our next section.

2.4 Likelihood control

In this section, we demonstrate that jointly minimizing the objective functions Equation 29 and 17 (or the losses Equation 14 and 17) controls the $\mathsf{KL}$-divergence from the target density $\rho_1$ to the model density $\hat{\rho}_1$. We focus on bounds involving the score, but we note that analogous results hold for learning the denoiser $\eta_z(t, x)$ defined in Equation 18, which is related to the score by $\eta_z(t, x) = -\gamma(t) s(t, x)$. The derivation is based on a simple and exact characterization of the $\mathsf{KL}$-divergence between two transport equations or two Fokker-Planck equations with different drifts. Remarkably, we find that the presence of a diffusive term determines whether or not it is sufficient to learn the drift to control $\mathsf{KL}$. This can be seen as a generalization of the result for score-based diffusion models described in [30] to arbitrary generative models described by ODEs or SDEs. The proofs of the statements in this section are provided in Appendix B.3. These proofs rely on manipulation of the time derivative of the $\mathsf{KL}$ divergence, which is a practice that has proven useful elsewhere in the literature ([60]).

We first characterize the $\mathsf{KL}$ divergence between two densities transported by two different continuity equations but initialized from the same initial condition:

########## {caption="Lemma 16"}

Let $\rho_0: {\mathbb{R}}^d\rightarrow {\mathbb{R}}_{\geq 0}$ denote a fixed base probability density function. Given two velocity fields $b, \hat{b} \in C^0([0, 1], (C^1({\mathbb{R}}^d))^d)$, let the time-dependent densities $\rho: [0, 1]\times {\mathbb{R}}^d \to {\mathbb{R}}_{\ge0}$ and $\hat{\rho}: [0, 1]\times {\mathbb{R}}^d \to {\mathbb{R}}_{\ge0}$ denote the solutions to the transport equations

$ \begin{aligned} &\partial_t\rho + \nabla \cdot(b \rho) = 0, \qquad &&\rho(0)=\rho_0, \\ &\partial_t\hat\rho + \nabla \cdot(\hat{b}\hat{\rho}) = 0, \qquad &&\hat{\rho}(0)=\rho_0. \end{aligned}\tag{38} $

Then, the Kullback-Leibler divergence of $\rho(1)$ from $\hat\rho(1)$ is given by

$ \mathsf{KL}(\rho(1) \,\Vert\, \hat\rho(1)) = \int_0^1 \int_{{\mathbb{R}}^d} \left(\nabla\log\hat\rho(t, x) - \nabla\log\rho(t, x)\right)\cdot\big(\hat{b}(t, x) - b(t, x)\big)\rho(t, x) dxdt.\tag{39} $

Lemma 16 shows that it is insufficient in general to match $\hat{b}$ with $b$ to obtain control on the $\mathsf{KL}$ divergence. The essence of the problem is that a small error in $\hat{b} - b$ does not ensure control on the Fisher divergence $\mathsf{FI}(\rho(t) \,\Vert\, \hat\rho(t)) = \int_{{\mathbb{R}}^d}\left|\nabla\log\rho(t, x) - \nabla\log\hat{\rho}(t, x)\right|^2\rho(t, x)dx$, which is necessary due to the presence of $\left(\nabla\log\hat\rho - \nabla\log\rho\right)$ in Equation 39.

In the next lemma, we study the case for two Fokker-Planck equations, and highlight that the situation becomes quite different.

########## {caption="Lemma 17"}

Let $\rho_0: {\mathbb{R}}^d\rightarrow {\mathbb{R}}_{\geq 0}$ denote a fixed base probability density function. Given two velocity fields $b_\mathsf{F}, \hat{b}_\mathsf{F} \in C^0([0, 1], (C^1({\mathbb{R}}^d))^d)$, let the time-dependent densities $\rho: [0, 1]\times {\mathbb{R}}^d \to {\mathbb{R}}_{\ge0}$ and $\hat{\rho}: [0, 1]\times {\mathbb{R}}^d \to {\mathbb{R}}_{\ge0}$ denote the solutions to the Fokker-Planck equations

$ \begin{aligned} &\partial_t\rho + \nabla \cdot(b_\mathsf{F} \rho) = \epsilon\Delta \rho, \qquad &&\rho(0)=\rho_0, \\ &\partial_t\hat\rho + \nabla \cdot(\hat{b}_\mathsf{F}\hat{\rho}) = \epsilon \Delta \hat{\rho}, \qquad &&\hat{\rho}(0)=\rho_0, \end{aligned}\tag{40} $

where $\epsilon>0$. Then, the Kullback-Leibler divergence from $\rho(1)$ to $\hat\rho(1)$ is given by

$ \begin{aligned} \mathsf{KL}(\rho(1) \,\Vert\, \hat\rho(1)) &= \int_0^1\int_{{\mathbb{R}}^d} \left(\nabla\log\hat\rho(t, x) - \nabla\log\rho(t, x)\right)\cdot \left(\hat{b}_\mathsf{F}(t, x) - b_\mathsf{F}(t, x)\right)\rho(t, x) dxdt\\ &\qquad -\epsilon\int_0^1 \int_{{\mathbb{R}}^d} \left|\nabla\log\rho(t, x) - \nabla\log\hat{\rho}(t, x)\right|^2\rho(t, x)dx dt, \end{aligned} $

and as a result

$ \begin{aligned} \mathsf{KL}(\rho(1) \,\Vert\, \hat\rho(1)) &\leq \frac{1}{4 \epsilon}\int_0^1 \int_{{\mathbb{R}}^d} \left|\hat{b}_\mathsf{F}(t, x) - b_\mathsf{F}(t, x)\right|^2\rho(t, x) dxdt. \end{aligned} $

Lemma 17 shows that, unlike for transport equations, the $\mathsf{KL}$-divergence between the solutions of two Fokker-Planck equations is controlled by the error in their drifts. The diffusive term in each Fokker-Planck equation provides an additional negative term in the $\mathsf{KL}$-divergence, which eliminates the need for explicit control on the Fisher divergence.
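The step from the identity to the bound in Lemma 17 can be made explicit with Young's inequality, $a\cdot c \le \epsilon |a|^2 + \tfrac{1}{4\epsilon}|c|^2$, applied pointwise with $a = \nabla\log\hat\rho - \nabla\log\rho$ and $c = \hat{b}_\mathsf{F} - b_\mathsf{F}$:

$ \left(\nabla\log\hat\rho - \nabla\log\rho\right)\cdot \left(\hat{b}_\mathsf{F} - b_\mathsf{F}\right) \le \epsilon \left|\nabla\log\hat\rho - \nabla\log\rho\right|^2 + \frac{1}{4\epsilon} \left|\hat{b}_\mathsf{F} - b_\mathsf{F}\right|^2. $

After integration against $\rho(t, x)$ over $x$ and $t$, the first term on the right-hand side cancels exactly the negative Fisher-divergence term, which leaves the stated bound. No such cancellation is available in Lemma 16, which corresponds to $\epsilon = 0$.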

Putting the above results together, we can state the following result, which demonstrates that the losses Equation 14 and 17 control the likelihood for learned approximations to the FPE Equation 20.

########## {caption="Theorem 18"}

Let $\rho$ denote the solution of the Fokker-Planck equation 20 with $\epsilon(t)= \epsilon>0$. Given two velocity fields $\hat{b}, \hat{s} \in C^0([0, 1], (C^1({\mathbb{R}}^d))^d)$, define

$ \hat{b}_\mathsf{F}(t, x) = \hat{b}(t, x) + \epsilon \hat{s}(t, x), \qquad \hat{v}(t, x) = \hat{b}(t, x) + \gamma(t) \dot{\gamma}(t) \hat{s}(t, x)\tag{41} $

where the function $\gamma$ satisfies the properties listed in Definition 1. Let $\hat{\rho}$ denote the solution to the Fokker-Planck equation

$ \partial_t \hat{\rho} + \nabla \cdot (\hat{b}_\mathsf{F}\hat{\rho}) = \epsilon \Delta \hat{\rho}, \qquad \hat{\rho}(0) = \rho_0. $

Then,

$ \mathsf{KL}(\rho_1 \,\Vert\, \hat{\rho}(1)) \leq \frac{1}{2 \epsilon}\left(\mathcal{L}_b[\hat{b}] - \min_{\hat{b}}\mathcal{L}_b[\hat{b}]\right) + \frac{\epsilon}{2}\left(\mathcal{L}_s[\hat{s}] - \min_{\hat{s}}\mathcal{L}_s[\hat{s}]\right),\tag{42} $

where $\mathcal{L}_b[\hat{b}]$ and $\mathcal{L}_s[\hat{s}]$ are the objective functions defined in Equation 14 and 17, and

$ \mathsf{KL}(\rho_1 \,\Vert\, \hat\rho(1)) \leq \frac{1}{2 \epsilon}\left(\mathcal{L}_{v}[\hat{v}] - \min_{\hat{v}}\mathcal{L}_{v}[\hat{v}]\right) + \frac{\sup_{t\in [0, 1]}(\gamma(t)\dot\gamma(t) - \epsilon)^2}{2 \epsilon}\left(\mathcal{L}_{s}[\hat{s}] - \min_{\hat{s}}\mathcal{L}_{s}[\hat{s}]\right), $

where $\mathcal{L}_v[\hat{v}]$ is the objective function defined in Equation 29.

########## {caption="Remark 19: Generative modeling"}

The above results have practical ramifications for generative modeling. In particular, they show that minimizing either the losses Equation 14 and 17 or the losses Equation 29 and 17 maximizes the likelihood of the stochastic generative model

$ d\hat{X}_t^\mathsf{F} = \left(\hat{b}(t, \hat{X}_t^\mathsf{F}) + \epsilon\hat{s}(t, \hat{X}_t^\mathsf{F})\right)dt + \sqrt{2 \epsilon}dW_t, $

but that minimizing the objective Equation 14 is insufficient in general to maximize the likelihood of the deterministic generative model

$ \dot{\hat{X}}_t = \hat{b}(t, \hat{X}_t). $

Moreover, they show that, when learning $\hat{b}$ and $\hat{s}$, the choice of $\epsilon$ that minimizes the upper bound is given by

$ \epsilon^* = \left(\frac{\mathcal{L}_b[\hat{b}] - \min_{\hat{b}}\mathcal{L}_b[\hat{b}]}{\mathcal{L}_s[\hat{s}] - \min_{\hat{s}}\mathcal{L}_s[\hat{s}]}\right)^{1/2},\tag{43} $

so that $\epsilon^* > 1$ if the score is learned to higher accuracy than $\hat{b}$ and $\epsilon^*<1$ in the opposite situation. Note that Equation 43 suggests taking $\epsilon= 0$ if $\hat{b}$ is learned perfectly but $\hat{s}$ is not, and sending $\epsilon \to \infty$ in the opposite situation. While taking $\epsilon=0$ is achievable in practice and leads to the ODE Equation 32, taking $\epsilon\to\infty$ is not, as increasing $\epsilon$ increases the expense of the numerical integration in Equation 33 and 34.
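The expression for $\epsilon^*$ follows from an elementary minimization. Writing $A = \mathcal{L}_b[\hat{b}] - \min_{\hat{b}}\mathcal{L}_b[\hat{b}]$ and $C = \mathcal{L}_s[\hat{s}] - \min_{\hat{s}}\mathcal{L}_s[\hat{s}]$ (shorthand introduced here for convenience), the right-hand side of Equation 42 is $B(\epsilon) = \tfrac{A}{2\epsilon} + \tfrac{\epsilon C}{2}$, and

$ B'(\epsilon) = -\frac{A}{2\epsilon^2} + \frac{C}{2} = 0 \quad \Longleftrightarrow \quad \epsilon^* = \sqrt{A/C}, \qquad B(\epsilon^*) = \sqrt{A C}. $

At the optimal $\epsilon$, the bound is therefore the geometric mean of the two excess losses.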

2.5 Density estimation and cross-entropy calculation

It is well-known that the solution of the TE Equation 10 can be expressed in terms of the solution to the probability flow ODE Equation 32; for completeness, we now recall this fact:

########## {caption="Lemma 20"}

Given the velocity field $\hat{b} \in C^0([0, 1], (C^1({\mathbb{R}}^d))^d)$, let $\hat\rho$ satisfy the transport equation

$ \partial_t \hat\rho + \nabla \cdot(\hat{b} \hat{\rho}) = 0,\tag{44} $

and let $X_{s, t}(x)$ solve the ODE

$ \frac{d}{dt} X_{s, t}(x) = \hat{b}(t, X_{s, t}(x)), \qquad X_{s, s}(x) = x, \qquad t, s \in [0, 1].\tag{45} $

Then, given the PDFs $\rho_0$ and $\rho_1$:

- The solution to Equation 44 for the initial condition $\hat{\rho}(0) = \rho_0$ is given at any time $t\in[0, 1]$ by

$ \hat{\rho}(t, x) = \exp\left(- \int_0^t \nabla \cdot \hat{b}(\tau, X_{t, \tau}(x)) d\tau \right) \rho_0(X_{t, 0}(x)).\tag{46} $

- The solution to Equation 44 for the final condition $\hat{\rho}(1) = \rho_1$ is given at any time $t\in[0, 1]$ by

$ \hat{\rho}(t, x) = \exp\left(\int_t^1 \nabla \cdot \hat{b}(\tau, X_{t, \tau}(x)) d\tau \right) \rho_1(X_{t, 1}(x)).\tag{47} $
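As an illustration of how Equation 46 could be evaluated numerically, the sketch below integrates the characteristic ODE backward from $\tau = t$ to $\tau = 0$ together with the running integral of the divergence, using a hand-written RK4 step. The function name `log_density_via_flow` and the test velocity are assumptions for the example: instead of a learned $\hat{b}$, it uses $\hat{b}(t, x) = x/(1+t)$ in $d=1$ with $\rho_0 = {\sf N}(0, 1)$, for which the solution at $t=1$ is ${\sf N}(0, 4)$. In practice the divergence of a learned velocity would have to be computed by automatic differentiation or estimated stochastically.

```python
import numpy as np

def log_density_via_flow(b_hat, div_b_hat, log_rho0, x, t=1.0, n_steps=100):
    """Evaluate log rho_hat(t, x) as in Equation 46 by integrating backward in time.

    Integrates dX/dtau = b_hat(tau, X) and dA/dtau = div b_hat(tau, X) with RK4
    from tau = t down to tau = 0, starting at X = x and A = 0, so that
    A(0) = -int_0^t div b_hat(tau, X_{t,tau}(x)) dtau.
    """
    X = np.array(x, dtype=float)   # shape (n, d)
    A = np.zeros(X.shape[0])       # shape (n,)
    h = -t / n_steps               # negative step: backward in time
    tau = t
    for _ in range(n_steps):
        k1x, k1a = b_hat(tau, X), div_b_hat(tau, X)
        X2 = X + 0.5 * h * k1x
        k2x, k2a = b_hat(tau + 0.5 * h, X2), div_b_hat(tau + 0.5 * h, X2)
        X3 = X + 0.5 * h * k2x
        k3x, k3a = b_hat(tau + 0.5 * h, X3), div_b_hat(tau + 0.5 * h, X3)
        X4 = X + h * k3x
        k4x, k4a = b_hat(tau + h, X4), div_b_hat(tau + h, X4)
        X = X + h / 6.0 * (k1x + 2.0 * k2x + 2.0 * k3x + k4x)
        A = A + h / 6.0 * (k1a + 2.0 * k2a + 2.0 * k3a + k4a)
        tau += h
    return A + log_rho0(X)         # log of Equation 46

# Toy problem with closed-form velocity (an assumption, not a learned model).
b_hat = lambda tau, X: X / (1.0 + tau)
div_b_hat = lambda tau, X: np.full(X.shape[0], 1.0 / (1.0 + tau))  # d = 1
log_rho0 = lambda X: -0.5 * np.sum(X**2, axis=1) - 0.5 * X.shape[1] * np.log(2.0 * np.pi)

x = np.array([[0.5], [-1.0], [2.0]])
log_p = log_density_via_flow(b_hat, div_b_hat, log_rho0, x)
log_p_exact = -x[:, 0] ** 2 / 8.0 - 0.5 * np.log(2.0 * np.pi * 4.0)  # log N(x; 0, 4)
```

Working with the logarithm avoids underflow of the exponential factor in high dimension; Equation 47 could be treated in the same way by integrating forward from $\tau = t$ to $\tau = 1$.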

The proof of Lemma 20 can be found in Appendix B.4. Interestingly, we can obtain a similar result for the solution of the forward and backward FPEs in Equation 20 and 22. These results make use of auxiliary forward and backward SDEs in which the roles of the forward and backward drifts are switched:

########## {caption="Theorem 21"}

Given $\epsilon>0$ and two velocity fields $\hat{b}, \hat{s} \in C^0([0, 1], (C^1({\mathbb{R}}^d))^d)$, define

$ \hat{b}_\mathsf{F}(t, x) = \hat{b}(t, x) + \epsilon \hat{s}(t, x), \qquad \hat{b}_\mathsf{B}(t, x) = \hat{b}(t, x) - \epsilon \hat{s}(t, x),\tag{48} $

and let $Y^\mathsf{F}_t$ and $Y^\mathsf{B}_t$ denote solutions of the following forward and backward SDEs:

$ dY^\mathsf{F}_t = b_\mathsf{B}(t, Y^\mathsf{F}_t)dt + \sqrt{2\epsilon} dW_t,\tag{49} $

to be solved forward in time from the initial condition $Y^\mathsf{F}_{t=0}=x$ independent of $W$; and

$ dY^\mathsf{B}_t = b_\mathsf{F}(t, Y^\mathsf{B}_t)dt + \sqrt{2\epsilon} dW^\mathsf{B}_t, \quad W_t^\mathsf{B} = -W_{1-t},\tag{50} $

to be solved backwards in time from the final condition $Y^\mathsf{B}_{t=1}=x$ independent of $W^\mathsf{B}$. Then, given the densities $\rho_0$ and $\rho_1$:

- The solution to the forward FPE

$ \partial_t \hat{\rho}_\mathsf{F} + \nabla \cdot (\hat{b}_\mathsf{F} \hat{\rho}_\mathsf{F}) = \epsilon \Delta \hat{\rho}_\mathsf{F}, \qquad \hat{\rho}_\mathsf{F} (0) = \rho_0,\tag{51} $

can be expressed at $t=1$ as

$ \hat{\rho}_\mathsf{F} (1, x) = {\mathbb{E}}_\mathsf{B}^x \left(\exp\left(- \int_0^1\nabla \cdot \hat{b}_\mathsf{F}(t, Y^\mathsf{B}_t) dt\right) \rho_0(Y_{t=0}^\mathsf{B})\right),\tag{52} $

where ${\mathbb{E}}_\mathsf{B}^x$ denotes expectation on the path of $Y_t^\mathsf{B}$ conditional on the event $Y_{t=1}^\mathsf{B} = x$.

- The solution to the backward FPE

$ \partial_t \hat{\rho}_\mathsf{B}+ \nabla \cdot (\hat{b}_\mathsf{B} \hat{\rho}_\mathsf{B}) = -\epsilon \Delta \hat{\rho}_\mathsf{B}, \qquad \hat{\rho}_\mathsf{B}(1) = \rho_1,\tag{53} $

can be expressed at $t=0$ as

$ \hat{\rho}_\mathsf{B}(0, x) = {\mathbb{E}}_\mathsf{F}^x \left(\exp\left(\int_0^1\nabla \cdot \hat{b}_\mathsf{B}(t, Y^\mathsf{F}_t) dt\right)\rho_1(Y^\mathsf{F}_{t=1})\right),\tag{54} $

where ${\mathbb{E}}_\mathsf{F}^x$ denotes expectation on the path of $Y^\mathsf{F}_t$ conditional on the event $Y^\mathsf{F}_{t=0} = x$.
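The representation in Equation 52 can be turned into a Monte Carlo estimator. The sketch below does this for a scalar state, under several assumptions that are not part of the theorem: the name `rho_F_at_one` is hypothetical, the backward SDE Equation 50 is discretized with the Euler-Maruyama step $Y_{t-h} = Y_t - h\, b_\mathsf{F}(t, Y_t) + \sqrt{2\epsilon h}\,\xi$, and the test drift $b_\mathsf{F}(t, x) = -x$ with $\rho_0 = \mathsf{N}(0, 1)$ is chosen so that the forward FPE Equation 51 describes an Ornstein-Uhlenbeck process whose density at $t=1$ is known in closed form.

```python
import numpy as np

def rho_F_at_one(x, b_F, div_b_F, rho0, eps, n_paths=20000, n_steps=200, seed=0):
    """Monte Carlo estimate of Equation 52 for a scalar state.

    Paths of Y^B start at Y^B_{t=1} = x and are stepped backward to t = 0 with
    Y_{t-h} = Y_t - h * b_F(t, Y_t) + sqrt(2 * eps * h) * xi,  xi ~ N(0, 1),
    which is one possible Euler-Maruyama discretization of Equation 50.
    """
    rng = np.random.default_rng(seed)
    h = 1.0 / n_steps
    y = np.full(n_paths, float(x))
    div_integral = np.zeros(n_paths)   # int_0^1 div b_F(t, Y^B_t) dt, per path
    t = 1.0
    for _ in range(n_steps):
        div_integral += h * div_b_F(t, y)
        y = y - h * b_F(t, y) + np.sqrt(2.0 * eps * h) * rng.standard_normal(n_paths)
        t -= h
    return np.mean(np.exp(-div_integral) * rho0(y))


# Hypothetical test case: b_F(t, x) = -x, rho_0 = N(0, 1).
eps = 0.5
b_F = lambda t, y: -y
div_b_F = lambda t, y: -np.ones_like(y)
rho0 = lambda y: np.exp(-0.5 * y**2) / np.sqrt(2 * np.pi)

x = 0.4
estimate = rho_F_at_one(x, b_F, div_b_F, rho0, eps)
# Exact solution of Equation 51 at t = 1: a centered Gaussian with this variance.
var1 = np.exp(-2.0) + eps * (1.0 - np.exp(-2.0))
exact = np.exp(-0.5 * x**2 / var1) / np.sqrt(2 * np.pi * var1)
print(estimate, exact)
```

The estimate carries both a time-discretization bias and Monte Carlo noise, so agreement with the exact value is only expected up to a small relative error.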

The proof of Theorem 21 can be found in Appendix B.4. Note that to generate data from either $\hat{\rho}_\mathsf{F}(1)$ or $\hat{\rho}_\mathsf{B}(0)$, assuming that we can sample exactly from the PDF at the other end, i.e. $\rho_0$ and $\rho_1$ respectively, we would still rely on the equivalent of the forward and backward SDEs in Equations 33 and 34, now used with the approximate drifts in Equation 48, i.e.

$ d\hat{X}^\mathsf{F}_t = \hat{b}_\mathsf{F}(t, \hat{X}^\mathsf{F}_t)dt + \sqrt{2\epsilon} dW_t,\tag{55} $

and

$ d\hat{X}^\mathsf{B}_t = \hat{b}_\mathsf{B}(t, \hat{X}^\mathsf{B}_t)dt + \sqrt{2\epsilon} dW^\mathsf{B}_t, \quad W_t^\mathsf{B} = -W_{1-t}.\tag{56} $

If we solve Equation 55 forward in time from initial data $\hat{X}^\mathsf{F}_{t=0} \sim \rho_0$, we then have $\hat{X}^\mathsf{F}_{t=1} \sim \hat{\rho}_\mathsf{F}(1)$, where $\hat{\rho}_\mathsf{F}$ is the solution to the forward FPE Equation 51. Similarly, if we solve Equation 56 backward in time from final data $\hat{X}^\mathsf{B}_{t=1} \sim \rho_1$, we then have $\hat{X}^\mathsf{B}_{t=0} \sim \hat{\rho}_\mathsf{B}(0)$, where $\hat{\rho}_\mathsf{B}$ is the solution to the backward FPE Equation 53.
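The two sampling procedures just described can be sketched with a plain Euler-Maruyama scheme. The function names, the step counts, and the linear test drifts below are assumptions for illustration; in particular, the backward update $X_{t-h} = X_t - h\,\hat{b}_\mathsf{B}(t, X_t) + \sqrt{2\epsilon h}\,\xi$ is one possible discretization of Equation 56 and is not taken from the text.

```python
import numpy as np

def sample_forward(x0, b_F, eps, n_steps=200, rng=None):
    """Euler-Maruyama for Equation 55, from t = 0 to t = 1."""
    rng = np.random.default_rng() if rng is None else rng
    h = 1.0 / n_steps
    x = np.array(x0, dtype=float)
    for k in range(n_steps):
        t = k * h
        x = x + h * b_F(t, x) + np.sqrt(2.0 * eps * h) * rng.standard_normal(x.shape)
    return x

def sample_backward(x1, b_B, eps, n_steps=200, rng=None):
    """Euler-Maruyama for Equation 56, from t = 1 down to t = 0."""
    rng = np.random.default_rng() if rng is None else rng
    h = 1.0 / n_steps
    x = np.array(x1, dtype=float)
    for k in range(n_steps):
        t = 1.0 - k * h
        x = x - h * b_B(t, x) + np.sqrt(2.0 * eps * h) * rng.standard_normal(x.shape)
    return x


# Hypothetical test drifts for which both FPEs reduce to an Ornstein-Uhlenbeck
# relaxation, so the variance at the far end is known in closed form.
eps = 0.5
rng = np.random.default_rng(0)
n = 50000
xF = sample_forward(rng.standard_normal(n), lambda t, x: -x, eps, rng=rng)
xB = sample_backward(rng.standard_normal(n), lambda t, x: x, eps, rng=rng)
target_var = np.exp(-2.0) + eps * (1.0 - np.exp(-2.0))
print(xF.var(), xB.var(), target_var)
```

Both empirical variances should be close to `target_var`, up to discretization and sampling error.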

The results of Lemma 20 and Theorem 21 can be used to test the quality of samples generated by either the ODE Equation 32 or the forward and backward SDEs Equation 33 and 34. In particular, the following two results are direct consequences of Lemma 20 and Theorem 21, respectively:

########## {caption="Corollary 22"}

Under the same conditions as Lemma 20, if $\hat{\rho}(0) = \rho_0$, the cross-entropy of $\hat{\rho}(1)$ relative to $\rho_1$ is given by

$ \begin{aligned} \mathsf{H}(\rho_1\:\Vert\:\hat\rho(1)) &= - \int_{{\mathbb{R}}^d} \log \hat\rho(1, x) \rho_1(x) dx\\ & = {\mathbb{E}}_1 \int_0^1 \nabla \cdot b(\tau, X_{1, \tau}(x_1)) d\tau - {\mathbb{E}}_1 \log \rho_0(X_{1, 0}(x_1)) \end{aligned}\tag{57} $

where ${\mathbb{E}}_1$ denotes an expectation over $x_1\sim\rho_1$. Similarly, if $\hat{\rho}(1) = \rho_1$, the cross-entropy of $\hat{\rho}(0)$ relative to $\rho_0$ is given by

$ \begin{aligned} \mathsf{H}(\rho_0\:\Vert\:\hat\rho(0)) &= - \int_{{\mathbb{R}}^d} \log \hat\rho(0, x) \rho_0(x) dx\\ & = -{\mathbb{E}}_0 \int_0^1 \nabla \cdot b(\tau, X_{0, \tau}(x_0)) d\tau - {\mathbb{E}}_0 \log \rho_1(X_{0, 1}(x_0)) \end{aligned}\tag{58} $

where ${\mathbb{E}}_0$ denotes an expectation over $x_0\sim\rho_0$.
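A minimal sketch of how Equation 57 could be evaluated on a batch of samples is given below. It assumes a hypothetical function name `cross_entropy_ode`, an explicit midpoint integrator, and a toy setting ($b(t, x) = -x$, $\rho_0 = \mathsf{N}(0, \text{\it Id})$, and Gaussian samples standing in for data from $\rho_1$) in which the model density $\hat\rho(1) = \mathsf{N}(0, e^{-2}\text{\it Id})$ is known, so the empirical cross-entropy can be checked exactly.

```python
import numpy as np

def cross_entropy_ode(x1, b, div_b, log_rho0, n_steps=400):
    """Empirical version of Equation 57 for samples x1 of shape (n, d).

    Each sample is transported backward along X_{1,tau} from tau = 1 to
    tau = 0 while the divergence of b is accumulated along its path.
    """
    x = np.array(x1, dtype=float)
    h = 1.0 / n_steps
    tau = 1.0
    div_integral = np.zeros(x.shape[0])
    for _ in range(n_steps):
        x_mid = x - 0.5 * h * b(tau, x)
        tau_mid = tau - 0.5 * h
        div_integral += h * div_b(tau_mid, x_mid)
        x = x - h * b(tau_mid, x_mid)
        tau -= h
    return np.mean(div_integral) - np.mean(log_rho0(x))


# Hypothetical setting: b(t, x) = -x, rho_0 = N(0, Id), data drawn from N(0, 0.25 Id).
d = 2
b = lambda t, x: -x
div_b = lambda t, x: -d * np.ones(x.shape[0])
log_rho0 = lambda x: -0.5 * np.sum(x**2, axis=1) - 0.5 * d * np.log(2 * np.pi)

rng = np.random.default_rng(0)
x1 = 0.5 * rng.standard_normal((1000, d))
H_numeric = cross_entropy_ode(x1, b, div_b, log_rho0)
# Here rho_hat(1) = N(0, exp(-2) Id), so -mean(log rho_hat(1, x1)) is explicit.
H_exact = 0.5 * d * np.log(2 * np.pi) - d + 0.5 * np.exp(2.0) * np.mean(np.sum(x1**2, axis=1))
print(H_numeric, H_exact)
```

The same loop run forward in time, with the sign of the divergence term flipped and $\rho_1$ in place of $\rho_0$, gives the corresponding estimate of Equation 58.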

########## {caption="Corollary 23"}

Under the same conditions as Theorem 21, the cross-entropy of $\hat{\rho}_\mathsf{F}(1)$ relative to $\rho_1$ is given by

$ \begin{aligned} \mathsf{H}(\rho_1\:\Vert\:\hat{\rho}_\mathsf{F}(1)) &= - \int_{{\mathbb{R}}^d} \log \hat{\rho}_\mathsf{F}(1, x) \rho_1(x) dx\\ & = -{\mathbb{E}}_1 \log {\mathbb{E}}_\mathsf{B}^{x_1} \left(\exp\left(- \int_0^1\nabla \cdot b_\mathsf{F}(t, Y^\mathsf{B}_t) dt\right) \rho_0(Y_{t=0}^\mathsf{B})\right), \end{aligned}\tag{59} $

where ${\mathbb{E}}_\mathsf{B}^{x_1}$ denotes an expectation over $Y^\mathsf{B}_t$ conditioned on the event $Y^\mathsf{B}_{t=1}=x_1$, and ${\mathbb{E}}_1$ denotes an expectation over $x_1\sim\rho_1$. Similarly, the cross-entropy of $\hat{\rho}_\mathsf{B}(0)$ relative to $\rho_0$ is given by

$ \begin{aligned} \mathsf{H}(\rho_0\:\Vert\:\hat{\rho}_\mathsf{B}(0)) &= - \int_{{\mathbb{R}}^d} \log \hat{\rho}_\mathsf{B}(0, x) \rho_0(x) dx\\ & = -{\mathbb{E}}_0 \log {\mathbb{E}}_\mathsf{F}^{x_0} \left(\exp\left(\int_0^1\nabla \cdot b_\mathsf{B}(t, Y^\mathsf{F}_t) dt\right)\rho_1(Y^\mathsf{F}_{t=1})\right), \end{aligned}\tag{60} $

where ${\mathbb{E}}_\mathsf{F}^{x_0}$ denotes an expectation over $Y^\mathsf{F}_t$ conditioned on the event $Y^\mathsf{F}_{t=0}=x_0$, and ${\mathbb{E}}_0$ denotes an expectation over $x_0\sim\rho_0$.

If in Equations 57, 58, 59, and 60 we approximate the expectations ${\mathbb{E}}_0$ and ${\mathbb{E}}_1$ over $\rho_0$ and $\rho_1$ by empirical expectations over the available data, these equations allow us to cross-validate different approximations of $\hat{b}$ and $\hat{s}$, as well as to compare the cross-entropies of densities evolved by the TE Equation 44 with those of the forward and backward FPEs Equations 51 and 53.

########## {caption="Remark 24"}

When using Equations 59 and 60 in practice, taking the $\log$ of the expectations ${\mathbb{E}}_\mathsf{B}^{x_1}$ and ${\mathbb{E}}_\mathsf{F}^{x_0}$ may create difficulties. For example, when Hutchinson's trace estimator is used to compute the divergence of $b_\mathsf{F}$ or $b_\mathsf{B}$, the nonlinearity of the $\log$ introduces a bias. One way to remove this bias is to use Jensen's inequality, which leads to the upper bounds

$ \begin{aligned} \mathsf{H}(\rho_1\:\Vert\:\hat{\rho}_\mathsf{F}(1)) \le \int_0^1 {\mathbb{E}}_1 {\mathbb{E}}_\mathsf{B}^{x_1}\nabla \cdot b_\mathsf{F}(t, Y^\mathsf{B}_t) dt - {\mathbb{E}}_1 {\mathbb{E}}_\mathsf{B}^{x_1} \log \rho_0(Y_{t=0}^\mathsf{B}), \end{aligned}\tag{61} $

and

$ \begin{aligned} \mathsf{H}(\rho_0\:\Vert\:\hat{\rho}_\mathsf{B}(0)) \le -{\mathbb{E}}_0 {\mathbb{E}}_\mathsf{F}^{x_0} \int_0^1\nabla \cdot b_\mathsf{B}(t, Y^\mathsf{F}_t) dt- {\mathbb{E}}_0 {\mathbb{E}}_\mathsf{F}^{x_0} \log \rho_1(Y^\mathsf{F}_{t=1}). \end{aligned}\tag{62} $

However, these bounds are not sharp in general. In fact, using calculations similar to the one presented in the proof of Corollary 23, we can derive exact expressions that capture precisely what is lost when applying Jensen's inequality:

$ \begin{aligned} \mathsf{H}(\rho_1\:\Vert\:\hat{\rho}_\mathsf{F}(1))= \int_0^1 {\mathbb{E}}_1 {\mathbb{E}}_\mathsf{B}^{x_1}\left(\nabla \cdot b_\mathsf{F}(t, Y^\mathsf{B}_t) -\epsilon |\nabla \log \hat{\rho}_\mathsf{F}(t, Y_t^\mathsf{B})|^2\right) dt - {\mathbb{E}}_1 {\mathbb{E}}_\mathsf{B}^{x_1} \log \rho_0(Y_{t=0}^\mathsf{B}), \end{aligned}\tag{63} $

and

$ \begin{aligned} \mathsf{H}(\rho_0\:\Vert\:\hat{\rho}_\mathsf{B}(0)) = -{\mathbb{E}}_0 {\mathbb{E}}_\mathsf{F}^{x_0} \int_0^1\left(\nabla \cdot b_\mathsf{B}(t, Y^\mathsf{F}_t) +\epsilon |\nabla \log \hat{\rho}_\mathsf{B}(t, Y_t^\mathsf{F})|^2\right) dt- {\mathbb{E}}_0 {\mathbb{E}}_\mathsf{F}^{x_0} \log \rho_1(Y^\mathsf{F}_{t=1}). \end{aligned}\tag{64} $

Unfortunately, since $\nabla \log \hat\rho_\mathsf{F} \not= \hat{s}$ and $\nabla \log \hat\rho_\mathsf{B} \not= \hat{s}$ in general due to approximation errors, we do not know how to estimate the extra terms on the right-hand side of Equations 63 and 64. One possibility is to use $\hat{s}$ as a proxy for $\nabla \log \hat{\rho}_\mathsf{F}$ and $\nabla \log \hat\rho_\mathsf{B}$, which may be useful in practice, but this approximation is uncontrolled in general.
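For readers unfamiliar with Hutchinson's trace estimator mentioned in this remark, the sketch below shows one way it could be used to estimate a divergence. Everything here is an assumption for illustration: the name `hutchinson_divergence`, the use of Rademacher probes, the central finite difference standing in for a Jacobian-vector product (automatic differentiation would normally be used instead), and the linear test field $b(x) = Ax$, whose divergence is $\operatorname{tr} A$.

```python
import numpy as np

def hutchinson_divergence(b, x, n_probes=1, fd_step=1e-5, rng=None):
    """Stochastic estimate of div b(x) = tr(J_b(x)) as the average of v^T J_b(x) v.

    The probes v have independent +/-1 entries, and the product J_b(x) v is
    approximated by a central finite difference of b along v.
    """
    rng = np.random.default_rng() if rng is None else rng
    x = np.asarray(x, dtype=float)
    total = 0.0
    for _ in range(n_probes):
        v = rng.choice([-1.0, 1.0], size=x.shape)
        jvp = (b(x + fd_step * v) - b(x - fd_step * v)) / (2.0 * fd_step)
        total += v @ jvp
    return total / n_probes


# Hypothetical linear field b(x) = A x, for which div b = trace(A) = -1.5.
A = np.array([[1.0, 2.0, 0.0],
              [0.5, -3.0, 1.0],
              [0.0, 1.0, 0.5]])
b = lambda x: A @ x
x = np.array([0.2, -0.7, 1.1])
estimate = hutchinson_divergence(b, x, n_probes=20000, rng=np.random.default_rng(0))
print(estimate, np.trace(A))
```

The estimator is unbiased for the divergence itself, but a nonlinear function of it, such as the exponential inside the $\log$ in Equations 59 and 60, is in general not an unbiased estimate of the corresponding function of the true divergence.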

3. Instantiations and extensions

Section Summary: This section explores specific applications of the stochastic interpolant framework by introducing diffusive interpolants, which build generative models similar to those in recent studies on diffusive bridge processes but in a simpler way. These interpolants combine a basic path between data points with random noise from a Brownian bridge, allowing the same probability distributions at each step as the original method while making sampling easier without complex math. It also shows how this approach enables generating samples from any target distribution starting from a single fixed point, using a special equation that keeps the process smooth and non-singular throughout.

In this section, we instantiate the stochastic interpolant framework discussed in Section 2.

3.1 Diffusive interpolants

Recently, there has been a surge of interest in the construction of generative models through diffusive bridge processes ([36, 37, 39]). In this section, we connect these approaches with our own, highlighting that stochastic interpolants allow us to manipulate certain bridge processes in a simpler and more direct manner. We also show that this perspective leads to a generative process that samples any target density $\rho_1$ by pushing a point mass at any $x_0\in {\mathbb{R}}^d$ through an SDE. We begin by introducing a new kind of interpolant:

########## {caption="Definition 25: Diffusive interpolant"}

Given two probability density functions $\rho_0, \rho_1 : {{\mathbb{R}}^d} \rightarrow {\mathbb{R}}_{\geq 0}$, a diffusive interpolant between $\rho_0$ and $\rho_1$ is a stochastic process $x^\mathsf{d}_t$ defined as

$ x^\mathsf{d}_t = I(t, x_0, x_1) + \sqrt{2a(t)} B_t, \qquad t\in [0, 1],\tag{65} $

where:

- $I(t, x_0, x_1)$ is as in Definition 1;

- $(x_0, x_1)\sim \nu$ with $\nu$ satisfying Equation 4 in Definition 1;

- $a(t)\in C^2([0, 1])$ with $a(0) >0$ and $a(t)\ge 0$ for all $t\in(0, 1]$; and

- $B_t$ is a standard Brownian bridge process, independent of $x_0$ and $x_1$.

Pathwise, Equation 65 is different from the stochastic interpolant introduced in Definition 1: in particular, $x^\mathsf{d}_t$ is continuous but not differentiable in time. At the same time, since $B_t$ is a Gaussian process with mean zero and variance ${\mathbb{E}} B^2_t = t(1-t)$, Equation 65 has the same single-time statistics and time-dependent density $\rho(t, x)$ as the stochastic interpolant Equation 2 if we set $\gamma(t) = \sqrt{2a(t)t(1-t)}$, i.e.

$ x_t = I(t, x_0, x_1)+ \sqrt{2a(t)t(1-t)} z \quad \text{with} \quad (x_0, x_1)\sim \nu, \ z \sim {\sf N}(0, \text{\it Id}), \ (x_0, x_1) \perp z.\tag{66} $

As a result, Equations 65 and 66 lead to the same generative models. Technically, it is easier to work with Equation 66 than with Equation 65, because it avoids the use of Itô calculus and enables direct sampling of $x_t$ using samples from $\rho_0$, $\rho_1$, and $\mathsf{N}(0, \text{\it Id})$. However, Equation 65 sheds light on some interesting properties of the generative models based on Equation 66, i.e. stochastic interpolants with $\gamma(t) = \sqrt{2a(t)t(1-t)}$. To see why, we now use Fourier analysis to re-derive the transport equation for the density $\rho(t, x)$ shared by Equations 65 and 66, starting from the relation Equation 65. For simplicity, we focus on the case where $a(t)$ is constant in time, i.e. we set $a(t)=a>0$ in Equation 65.

To begin, recall that the Brownian bridge $B_t$ can be expressed in terms of the Wiener process $W_t$ as $B_t = W_t - tW_{t=1}$. Moreover, it satisfies the SDE obtained by conditioning on $B_{t=1}=0$ via Doob's $h$-transform ([61]):

$ dB_t = - \frac{B_t}{1-t} dt + dW_t, \qquad B_{t=0}=0.\tag{67} $
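Both descriptions of the Brownian bridge, and the equality of single-time statistics between Equations 65 and 66, can be checked numerically. The sketch below is only an illustration: the grid sizes, the value $a = 0.3$, the Gaussian choices for $\rho_0$ and $\rho_1$, and the linear interpolant $I(t, x_0, x_1) = (1-t)x_0 + t x_1$ are all assumptions made for this example. The Wiener increments are reused in the two bridge constructions purely for convenience; the constructions agree in law, not path by path.

```python
import numpy as np

rng = np.random.default_rng(0)
n_paths, n_steps, a = 20000, 100, 0.3
h = 1.0 / n_steps
k = n_steps // 2                       # grid index of t = 0.5
t = k * h

dW = np.sqrt(h) * rng.standard_normal((n_paths, n_steps))

# (i) Bridge built from the Wiener process: B_t = W_t - t * W_1.
W = np.cumsum(dW, axis=1)              # W[:, j] is the Wiener path at time (j + 1) * h
B_direct = W[:, k - 1] - t * W[:, -1]

# (ii) Bridge from Euler-Maruyama applied to Equation 67, stopped at time t.
B_sde = np.zeros(n_paths)
for j in range(k):
    B_sde = B_sde - h * B_sde / (1.0 - j * h) + dW[:, j]

# Diffusive interpolant (Equation 65) versus its single-time equivalent (Equation 66).
x0 = rng.normal(-2.0, 0.5, n_paths)
x1 = rng.normal(2.0, 1.0, n_paths)
z = rng.standard_normal(n_paths)
I_t = (1.0 - t) * x0 + t * x1
x_diffusive = I_t + np.sqrt(2.0 * a) * B_direct
x_equiv = I_t + np.sqrt(2.0 * a * t * (1.0 - t)) * z

print(B_direct.var(), B_sde.var(), t * (1.0 - t))
print(x_diffusive.var(), x_equiv.var())
```

Up to sampling and discretization error, both bridge variances should be close to $t(1-t)$, and the two interpolants should have matching variances at the chosen time.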

A direct application of Itô's formula implies that

$ de^{ik\cdot x_t^\mathsf{d}} = ik \cdot \Big(\partial_t I(t, x_0, x_1) - \frac{\sqrt{2a}B_t}{1-t} \Big) e^{ik\cdot x_t^\mathsf{d}} dt - a|k|^2 e^{ik\cdot x_t^\mathsf{d}} dt + \sqrt{2a}ik\cdot dW_t e^{ik\cdot x_t^\mathsf{d}}.\tag{68} $

Taking the expectation of this expression and using the independence between $(x_0, x_1)$ and $B_t$, we deduce that

$ \partial_t {\mathbb{E}} e^{ik\cdot x_t^\mathsf{d}} = ik \cdot {\mathbb{E}} \Big(\Big(\partial_t I(t, x_0, x_1) - \frac{\sqrt{2a}B_t}{1-t} \Big) e^{ik\cdot x_t^\mathsf{d}} \Big) - a|k|^2 {\mathbb{E}} e^{ik\cdot x_t^\mathsf{d}}.\tag{69} $

Since for all fixed $t\in [0, 1]$ we have $B_t \stackrel{d}{=} \sqrt{t(1-t)}z$ and $x^\mathsf{d}_t \stackrel{d}{=} x_t $ with $x_t$ defined in Equation 66, the time derivative Equation 69 can also be written as

$ \partial_t {\mathbb{E}} e^{ik\cdot x_t} = ik \cdot {\mathbb{E}} \Big(\Big(\partial_t I(t, x_0, x_1) - \frac{\sqrt{2at}\, z}{\sqrt{1-t}}\Big) e^{ik\cdot x_t} \Big) - a|k|^2 {\mathbb{E}} e^{ik\cdot x_t}.\tag{70} $

Moreover, since by definition of their probability density we have ${\mathbb{E}} e^{ik\cdot x_t^\mathsf{d}}= {\mathbb{E}} e^{ik\cdot x_t} = \int_{{\mathbb{R}}^d} e^{ik\cdot x}\rho(t, x)dx$, we can deduce from Equation 70 that $\rho(t)$ satisfies

$ \partial_t \rho+\nabla \cdot(u \rho) = a \Delta \rho,\tag{71} $

where we defined

$ u(t, x) = {\mathbb{E}}\Big(\partial_t I(t, x_0, x_1) - \frac{\sqrt{2at}\, z}{\sqrt{1-t}}\Big| x_t = x\Big).\tag{72} $

For the interpolant $x_t$ in Equation 66, we have from the definitions of $b$ and $s$ in Equation 11 and 15 that

$ \begin{aligned} b(t, x) &= {\mathbb{E}}\Big(\partial_t I(t, x_0, x_1) + \frac{a(1-2t)\, z}{\sqrt{2at(1-t)}}\Big| x_t = x\Big), \\ s(t, x) &= \nabla \log\rho(t, x) = - \frac1{\sqrt{2at(1-t)}}{\mathbb{E}}(z| x_t = x). \end{aligned}\tag{73} $

As a result, $u - a s = b$, and Equation 71 can also be written as the TE Equation 10 using $\Delta \rho = \nabla \cdot(s \rho)$.
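The relation between $u$, $s$, and $b$ can be checked by hand; the following short computation is a sketch for constant $a$, as assumed in the derivation above. Because $a\Delta\rho = a\nabla\cdot(s\rho)$, Equation 71 is a transport equation with velocity $u - a s$. Using Equation 72 for $u$ and the expression for $s$ in Equation 73, the coefficient multiplying ${\mathbb{E}}(z| x_t = x)$ in $u - a s$ is

$ -\frac{\sqrt{2at}}{\sqrt{1-t}} + \frac{a}{\sqrt{2at(1-t)}} = \frac{-2at + a}{\sqrt{2at(1-t)}} = \frac{a(1-2t)}{\sqrt{2at(1-t)}} = \frac{d}{dt}\sqrt{2at(1-t)}, $

that is, the time derivative of $\gamma(t) = \sqrt{2at(1-t)}$, which is the factor multiplying $z$ in the time derivative of the interpolant Equation 66.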

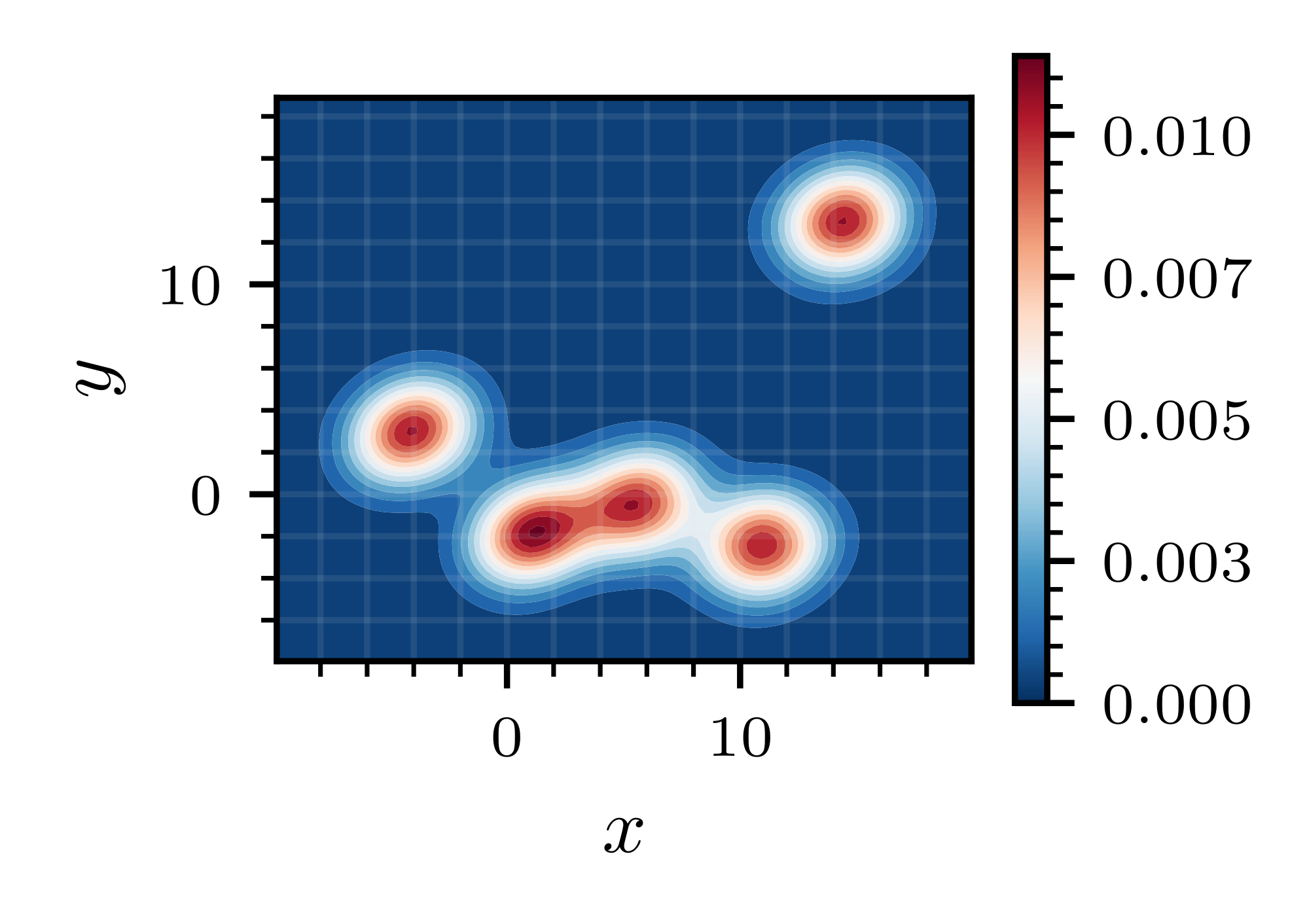

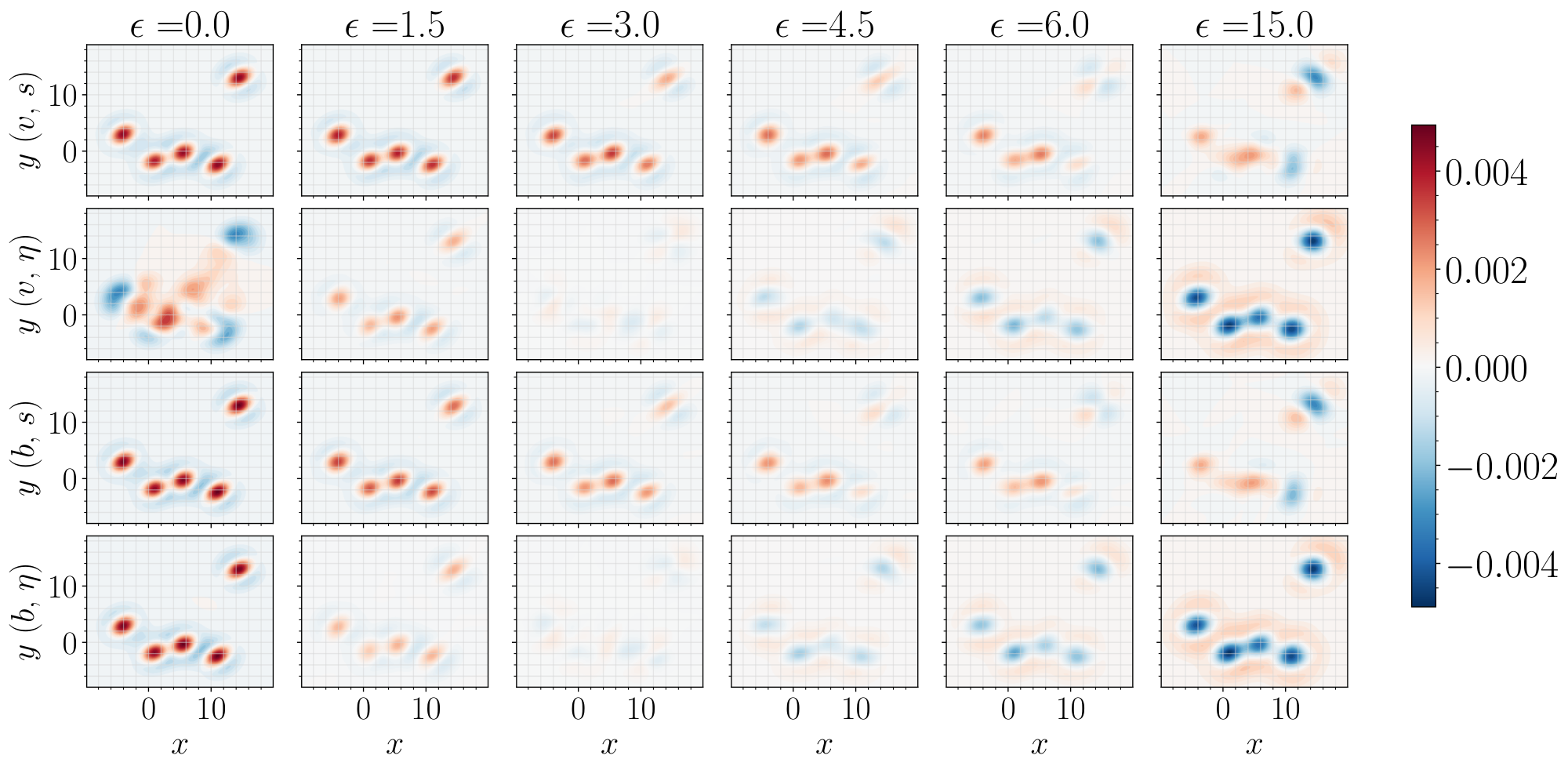

Conditional sampling.