Neural Computers

Mingchen Zhuge$^{1, 2, +}$, Changsheng Zhao$^{1, +}$, Haozhe Liu$^{1, 2, +}$, Zijian Zhou$^{1, +}$, Shuming Liu$^{1, 2, +}$, Wenyi Wang$^{2}$, Ernie Chang$^{1}$, Gael Le Lan$^{1}$, Junjie Fei$^{1, 2}$, Wenxuan Zhang$^{1, 2}$, Yasheng Sun$^{2}$, Zhipeng Cai$^{1}$, Zechun Liu$^{1}$, Yunyang Xiong$^{1}$, Yining Yang$^{1}$, Yuandong Tian$^{1}$, Yangyang Shi$^{1}$, Vikas Chandra$^{1}$, Jürgen Schmidhuber$^{2}$

$^{1}$ Meta AI

$^{2}$ KAUST

$^{+}$ Core Contributors

Abstract

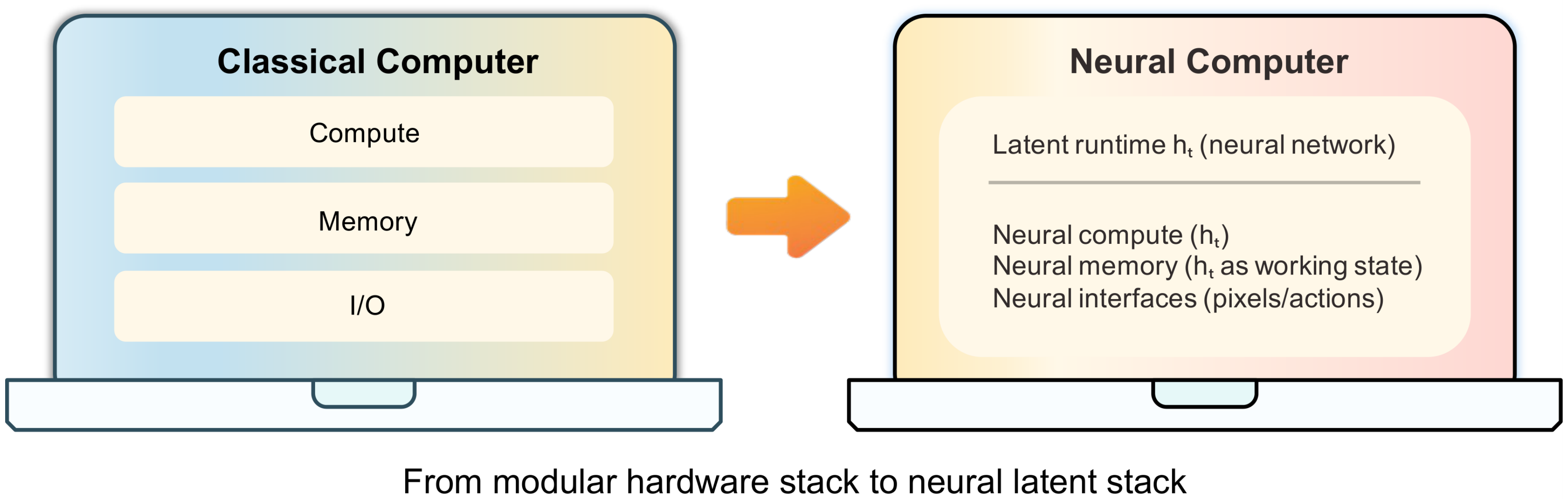

We propose a new frontier: Neural Computers (NCs)—an emerging machine form that unifies computation, memory, and I/O in a learned runtime state. Unlike conventional computers, which execute explicit programs, agents, which act over external execution environments, and world models, which learn environment dynamics, NCs aim to make the model itself the running computer. Our long-term goal is the Completely Neural Computer (CNC): the mature, general-purpose realization of this emerging machine form, with stable execution, explicit reprogramming, and durable capability reuse. As an initial step, we study whether early NC primitives can be learned solely from collected I/O traces, without instrumented program state. Concretely, we instantiate NCs as video models that roll out screen frames from instructions, pixels, and user actions (when available) in CLI and GUI settings. These implementations show that learned runtimes can acquire early interface primitives, especially I/O alignment and short-horizon control, while routine reuse, controlled updates, and symbolic stability remain open. We outline a roadmap toward CNCs around these challenges. If overcome, CNCs could establish a new computing paradigm beyond today’s agents, world models, and conventional computers.

Blogpost: https://metauto.ai/neuralcomputer

Correspondence: [email protected], [email protected]

Executive Summary: The rapid evolution of AI agents and world models has exposed a key limitation in current computing: systems still separate learned intelligence from the actual execution environment, relying on external hardware, operating systems, or simulators to handle computation, memory, and input/output. This fragmentation hinders seamless integration, especially for tasks involving dynamic interfaces like command lines or graphical desktops. As AI capabilities grow, there is an urgent need to explore whether a neural model can internalize these roles into a single learned state, potentially creating a new machine paradigm that unifies AI with computing infrastructure.

This document proposes neural computers (NCs)—neural systems where a single set of learned weights acts as the running computer, blending computation, memory, and interfaces in a dynamic runtime state. It evaluates whether basic NC features, such as aligning inputs/outputs and short-term control, can emerge solely from raw interaction traces like screen frames and user actions, without access to underlying program details.

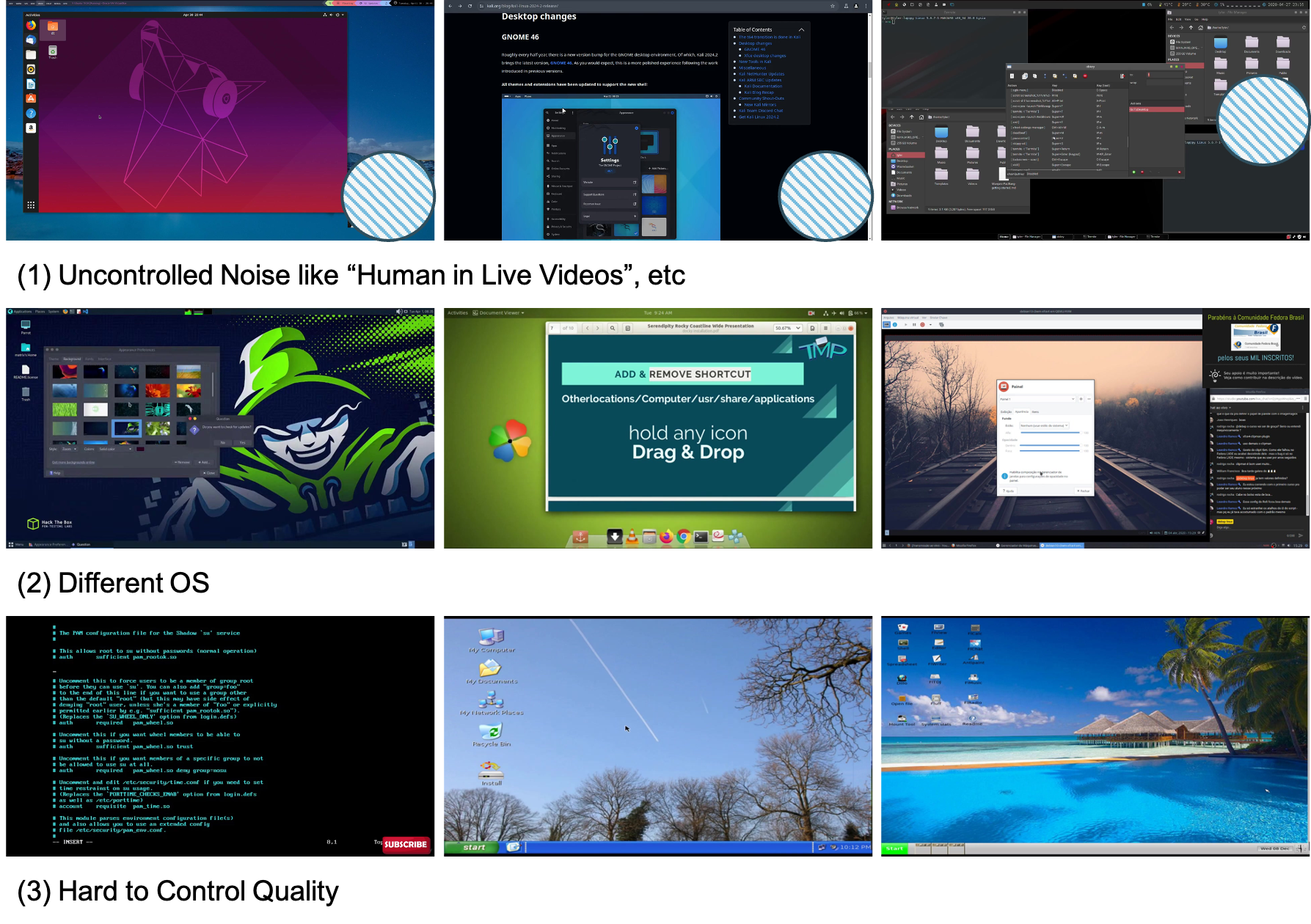

Researchers built NC prototypes as video generation models, trained to predict interface evolution from initial frames, text prompts, or action streams. For command-line interfaces (CLI), they used two datasets: one with 1,100 hours of diverse public terminal recordings and another with 128,000 scripted, clean sessions in controlled environments. For graphical user interfaces (GUI), they collected 1,500 hours of desktop interactions in a standardized Ubuntu setup, including random mouse/keyboard actions and 110 hours of goal-directed traces from AI agents. Training involved fine-tuning a state-of-the-art video model over thousands of GPU hours, focusing on synchronized data to ensure temporal accuracy. Evaluations measured rendering quality, text fidelity via optical character recognition, and control precision through metrics like structural similarity and video distance, without real-time feedback loops.

The most critical finding is that NCs can achieve strong interface fidelity: in CLI setups, generated terminals show readable text at practical font sizes, with character accuracy reaching 54% and exact line matches at 31% after training. In GUI, explicit visual cues for cursor position yield 98.7% accuracy, enabling coherent short responses to actions like clicks or hovers. Second, data quality trumps scale—a small set of purposeful interactions outperformed ten times more random data by improving post-action consistency up to 16% in visual metrics. Third, detailed prompts boost control: CLI arithmetic tasks jumped from 4% to 83% accuracy with better conditioning, though native symbolic reasoning stayed weak at under 5% without aids. Fourth, deeper integration of actions into the model enhances responsiveness, cutting temporal distortion by about 50% compared to surface-level methods. Finally, prototypes handle short workflows well but falter on longer chains, with limited reuse of learned routines.

These results suggest NCs mark an early shift toward self-contained neural runtimes, capable of basic interface simulation without external execution layers. This could reduce costs and risks in AI-driven systems by embedding control directly in models, improving robustness for uncertain tasks like user interactions—areas where traditional computers struggle with noise or adaptation. Unlike prior world models, which predict but do not execute, or agents that rely on separate tools, NCs begin to close this gap, though weaker-than-expected symbolic performance underscores that scaling alone won't suffice; holistic numerical processing favors perceptual tasks over precise math.

To advance, prioritize engineering for stable reuse—such as installing routines that persist across sessions without retraining—and explicit updates to govern changes, avoiding unintended drifts. Test deeper architectures for long-term reasoning, perhaps combining video models with symbolic modules, and run pilots on real-world tasks like error recovery in desktops. Options include heavy reliance on high-quality curated data (lower risk, slower scaling) versus automated collection with filters (faster but higher noise). Further work needs richer datasets for edge cases and closed-loop evaluations to validate interactivity before committing resources.

Confidence in early primitives like rendering and short control is high, backed by consistent metrics across prototypes. However, uncertainties remain in symbolic stability and long-horizon behavior due to video substrates' bias toward visuals over logic, and assumptions of clean data may not hold in diverse real environments—stakeholders should approach full deployment cautiously, focusing first on controlled proofs of concept.

1. Introduction

Section Summary: The introduction proposes the concept of a Neural Computer, a neural network system that combines computing, memory, and input-output functions within a single learned state, aiming to make the AI itself function like a running computer rather than relying on separate external tools. To test this, the authors create practical prototypes using video models that simulate interactions with command-line terminals and graphical desktop interfaces, drawing on advances in world modeling and video generation. Early experiments show promise in rendering basic workflows and handling short actions, but highlight ongoing challenges toward a fully mature version called a Completely Neural Computer, with contributions including new data tools and a roadmap for future development.

Can a single set of weights act as a "computer"? We term this abstraction a Neural Computer (NC): a neural system that unifies computation, memory, and I/O in a learned runtime state. This usage is distinct from the Neural Turing Machine / Differentiable Neural Computer line ([1, 2]): our concern is not differentiable external memory, but whether a learning machine can begin to assume the role of the running computer itself.

To implement this idea, we instantiate NCs as video models. At this stage, video models are the most practical substrate for this prototype, though we expect the long-term solution to require a fundamentally new neural architecture (Section 4). This implementation draws on several technical lines. World models ([3]) show that neural networks can internalize environment dynamics and support predictive imagination, while high-capacity video generators such as Veo 3.1 ([4]) and Sora 2 ([5]) show that such learned dynamics can be rendered into coherent frame sequences. Frontier interactive video models such as Genie 3 ([6]) further extend this trajectory toward action-controllable generative environments. These lines provide practical machinery for current NC prototypes, but do not by themselves define the NC abstraction. In parallel, LLM-driven UI systems such as Imagine with Claude^1 map natural-language inputs to structured interface updates. Yet these capabilities remain split across different systems objects: conventional computers execute explicit programs, agents act through external execution environments, and world models render or predict environment dynamics, while executable state still resides outside the model. NCs are motivated by this gap: they are not a smarter layer on top of the existing stack, but a proposal to make the model itself the running computer. The immediate question in this paper is whether early runtime primitives can be learned directly from raw interface I/O without privileged access to program state.

Throughout this paper, NC denotes this proposed machine form, while CNC denotes its mature, general-purpose realization. We study two interface-specific prototypes of this NC formulation (see Section 2). NC $\text{CLIGen}$ models terminal interaction from text (natural language or command lines) and an initial frame, while NC $\text{GUIWorld}$ models desktop interaction from recent pixels and synchronized mouse/keyboard actions (Section 3.1 and Section 3.2).

**Neural Computer (NC) abstraction (Teaser).**

A neural system $(F, G)$ parameterized by $\theta$ that models an interactive computer interface through a single latent runtime state $h_t$ that carries executable interface state and also acts as working memory (see Equation 1).

In the NC $_\text{CLIGen}$ experiments, the NC can render and execute basic command-line workflows. It often stays aligned with the terminal buffer and captures common "physics" of everyday CLI use (e.g., fast scrollback, prompt wrapping, window resizing). On carefully scripted data, rollouts can be visually and structurally close to real sessions, and the model can execute short command chains and render their outputs. Arithmetic-probe scores improve substantially with stronger system-level conditioning, though symbolic stability remains limited.

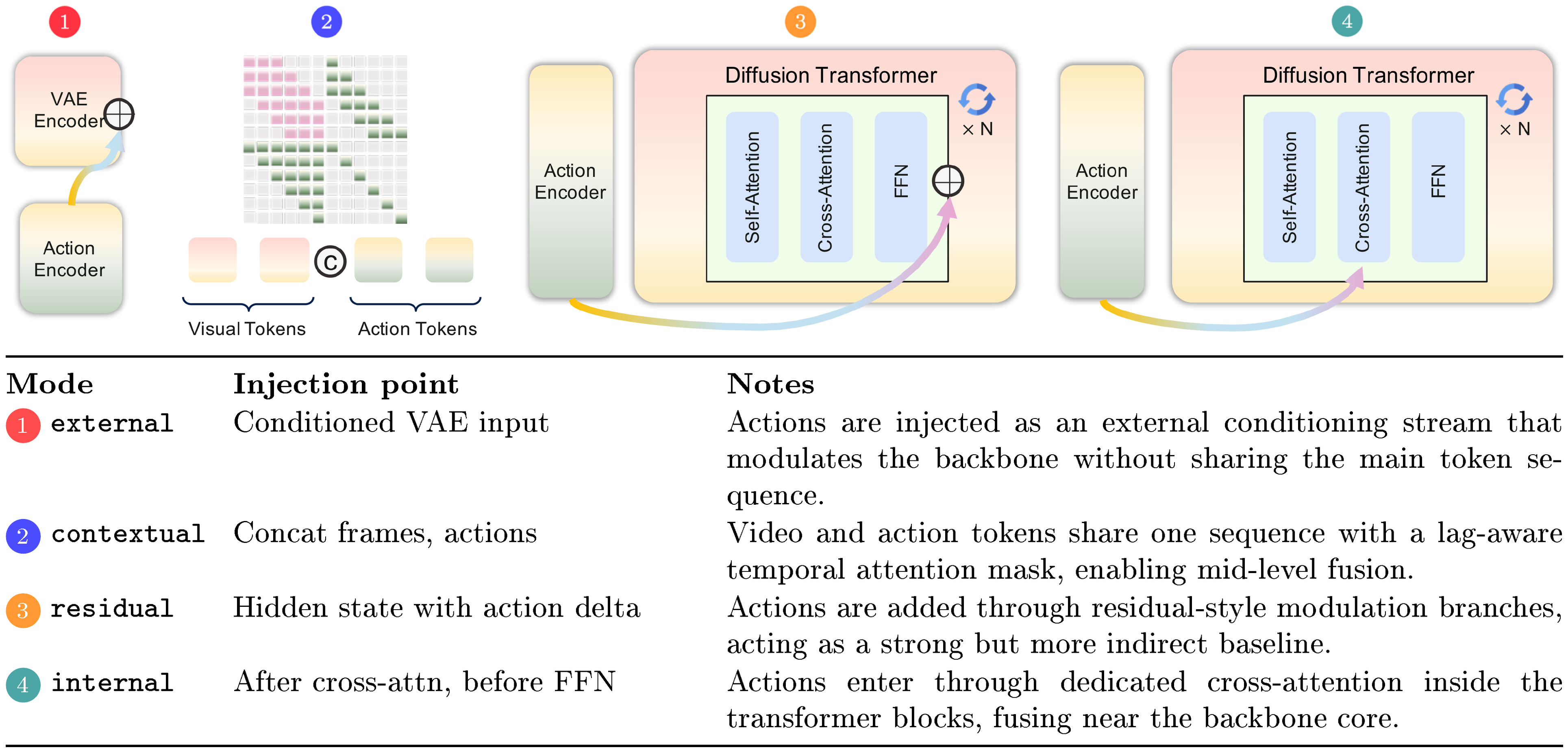

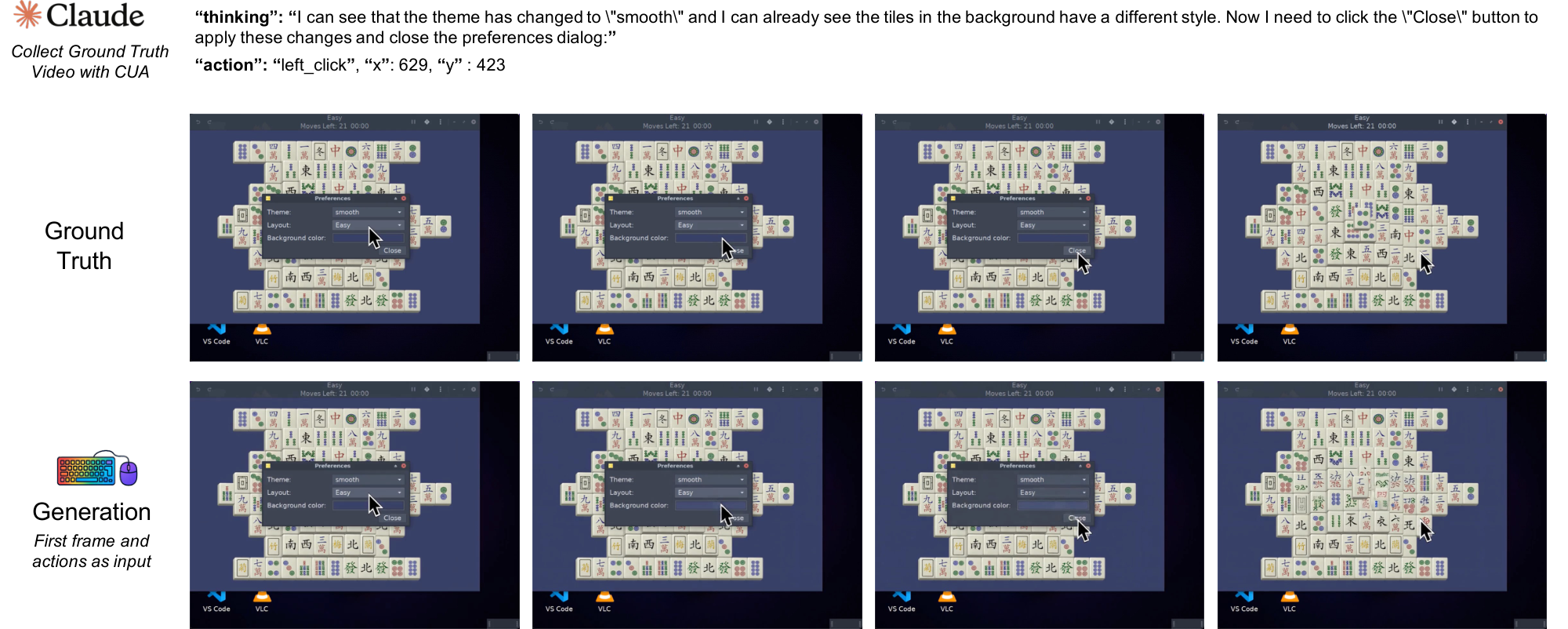

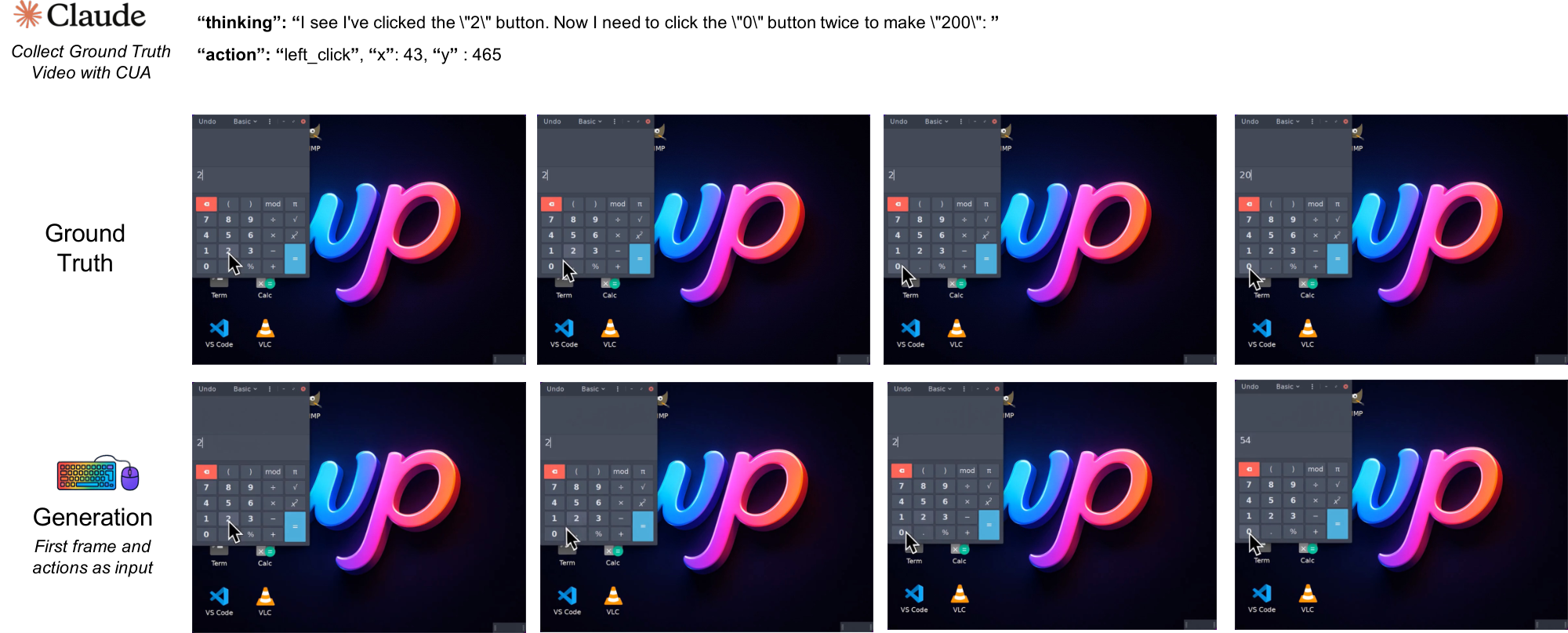

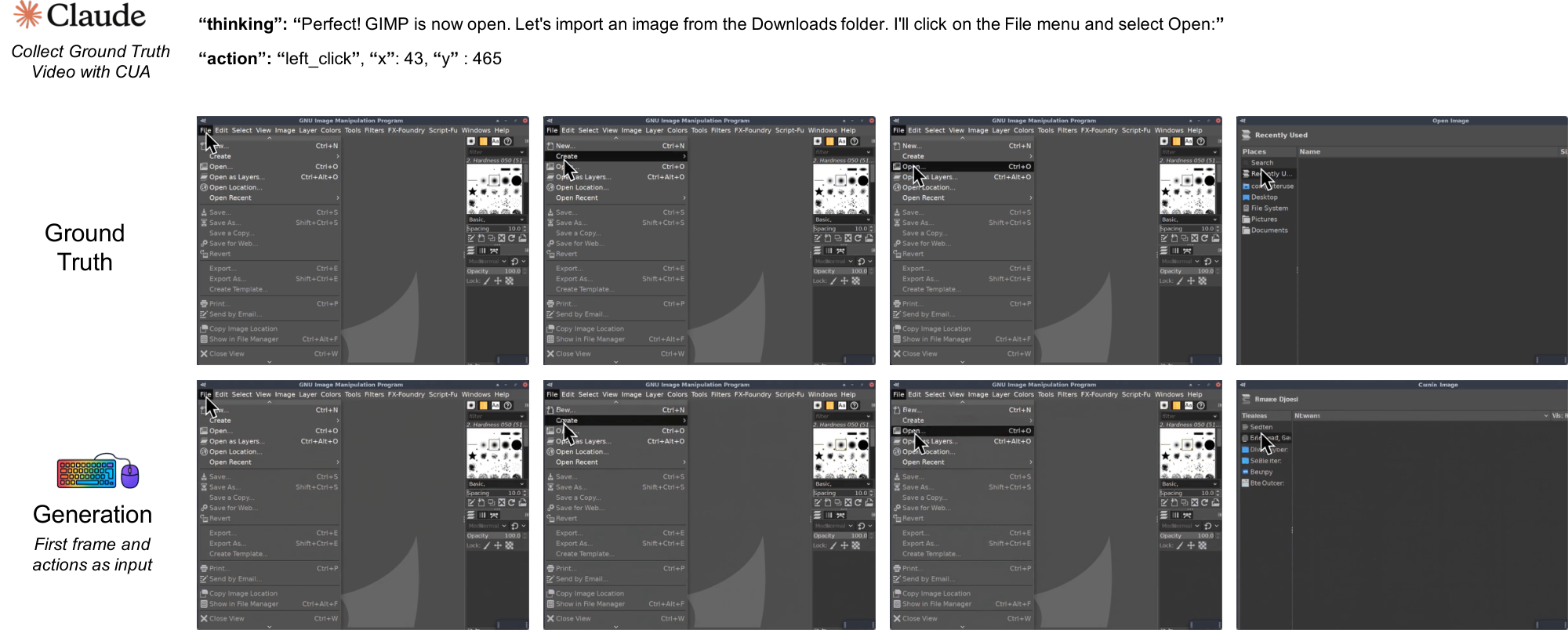

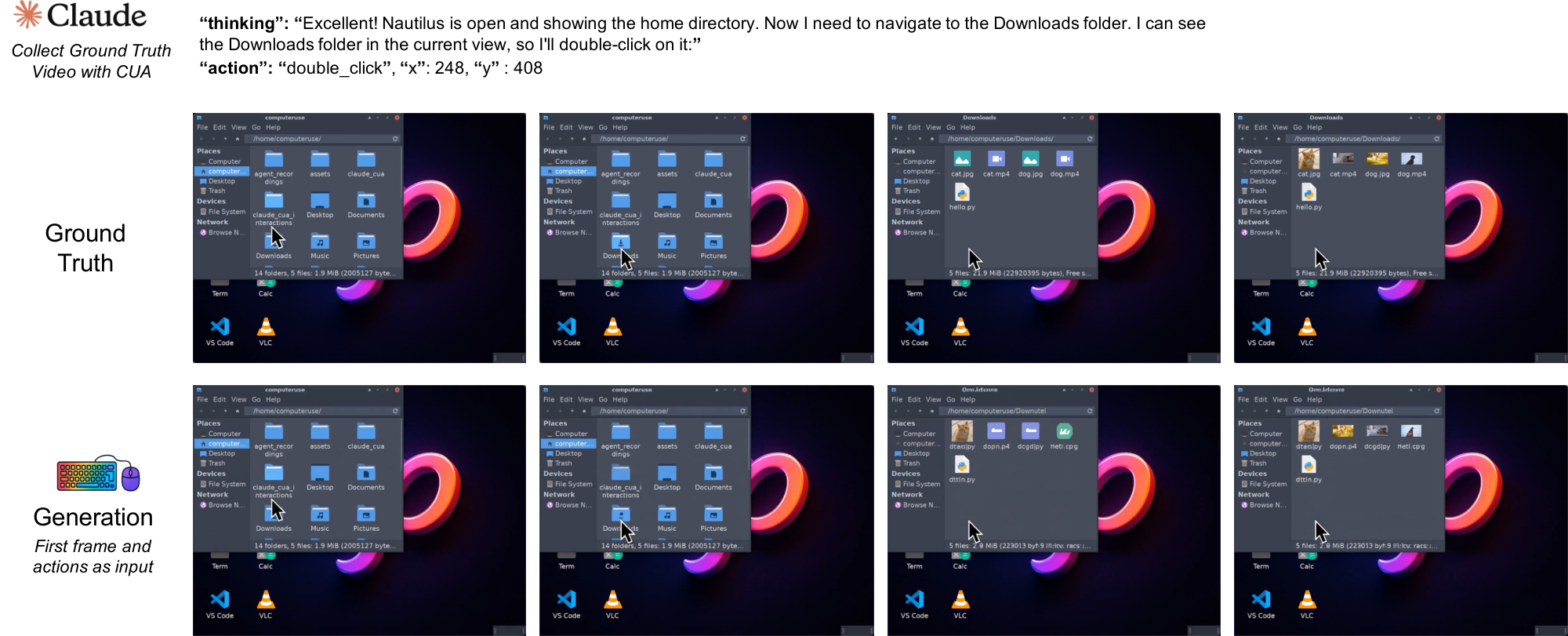

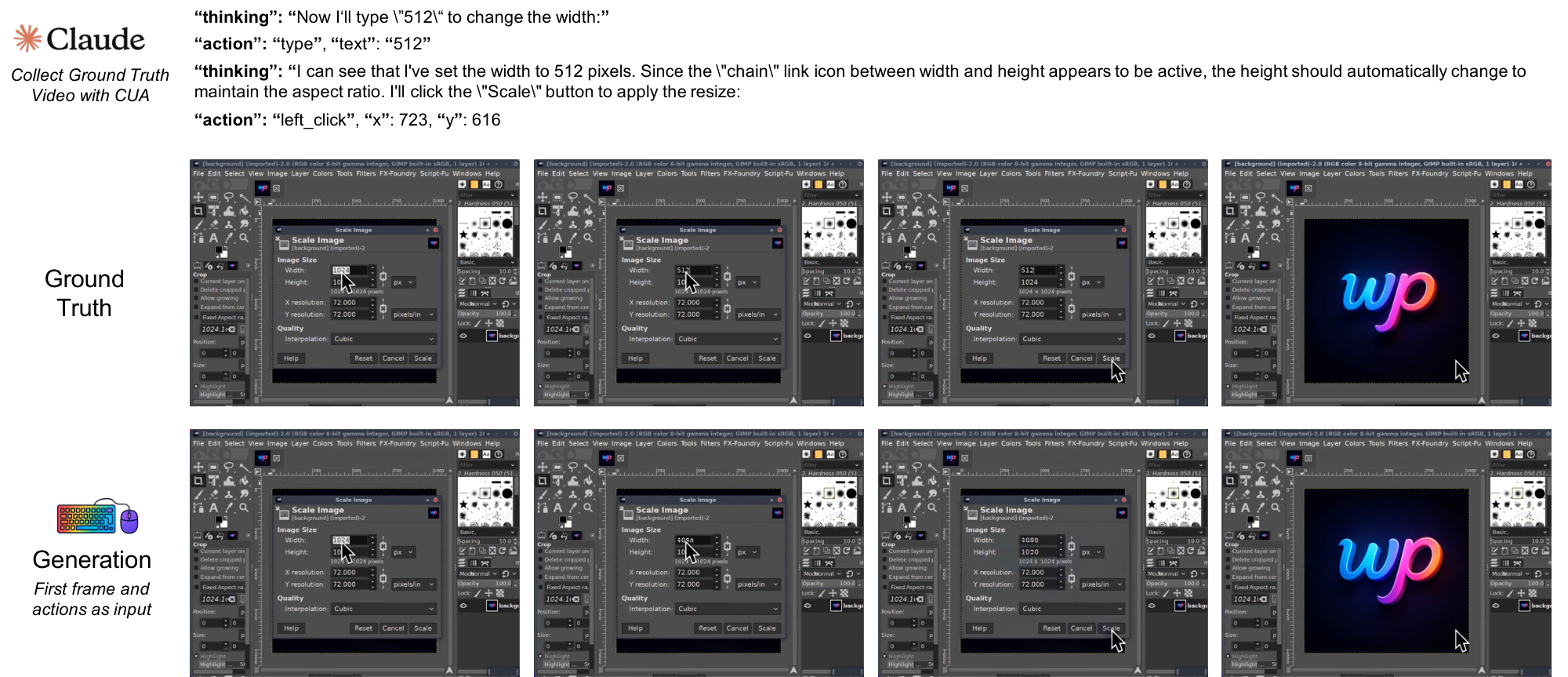

In the NC $_\text{GUIWorld}$ experiments, we evaluate standard world-model designs across action injection, action encoding, and data quality. Figure 1 summarizes this template across two interface-specific NCs trained separately without shared parameters. Qualitatively, the model learns coherent pointer dynamics and short-horizon action responses (e.g., hover/click feedback and window/menu transitions), suggesting that local GUI control primitives are learnable in controlled settings.

Our experimental insights indicate that current NCs already realize early runtime primitives, most notably I/O alignment and short-horizon control. The long-term target is a Completely Neural Computer (CNC), the mature, general-purpose realization of this machine form: a fully learned computer whose compute, memory, and interfaces are unified in a single learned runtime substrate rather than engineered as separate modules. These prototypes are an early step toward that CNC vision. Substantial challenges remain in robust long-horizon reasoning, reliable symbolic processing, stable capability reuse, and explicit runtime governance. Section 4 outlines these open challenges and a roadmap toward CNCs.

**Completely Neural Computer (CNC) abstraction (Section 4.2).**

A Neural Computer instance is *complete* (i.e., a CNC) if it is

(i) *Turing complete*,

(ii) *universally programmable*,

(iii) *behavior-consistent* unless explicitly reprogrammed, and

(iv) realizes the architectural and programming-language advantages of NCs relative to conventional computers.

Concretely, this work makes the following contributions:

- Define neural computers (NCs) and build video-based prototypes for both CLI and GUI interfaces.

- Provide a data engine and alignment recipe that synchronize text, actions, and frames for the CLI and GUI environments used in this paper.

- Identify practical design choices for NCs through extensive ablation studies.

- Outline an engineering roadmap toward completely neural computers (CNCs), centered on acceptance challenges such as reuse, consistency, and runtime governance.

2. Preliminaries

Section Summary: This section introduces conventional digital computers as traditional machines that separate processing, memory, and input/output functions, often relying on operating systems to manage them. It poses a key question: can a single neural network's internal state handle all these roles without external support, using a "neural computer" prototype that simulates video interfaces through an update-and-render process? The neural computer updates a hidden internal state based on current screen views and user actions to predict the next frame, drawing from related research in neural memory systems, world models, and advanced video generators to enable interactive simulations like command-line or desktop environments.

Throughout this paper, we use conventional digital computers as an umbrella term for stored-program machines (e.g., von Neumann-style architectures): at the theory level they are commonly abstracted as random-access machines with an instruction set architecture, and at the systems level they are typically realized through layered operating-system/application stacks. Such systems separate computation, memory, and I/O. Our motivating question is whether a single set of weights can internalize these roles inside one latent runtime state, rather than relying on an external execution environment (e.g., OS/simulator) to carry executable state. We model a video-based neural computer (NC) prototype as a learned latent-state system that folds these roles into an update-and-render loop.

Specifically, an NC updates a latent runtime state from the current observation and conditioning input, and then predicts (or samples) the next observation. In this paper, we treat screen frames as observables and define actions as time-indexed conditions. More broadly, the NC framework can accommodate various other modalities and structural representations for both observables and actions. Given an initial screen frame $x_0$ and conditioned on user action $u_t$ at iteration $t$, an NC updates its runtime state and samples the next frame $x_{t+1}$. Formally, an NC defined by an initial runtime state $h_0$, an update function $F_\theta$, and a decoder $G_\theta$ operates as follows, where $G_\theta$ parameterizes a distribution over next frames:

$ \begin{align} h_t &= F_\theta(h_{t-1}, x_t, u_t), \qquad x_{t+1} \sim G_\theta(h_t). \end{align}\tag{1} $

In this formulation, $h_t$ provides the persistent runtime memory, $F_\theta$ carries the state-update computation, and $(x_t, u_t, G_\theta)$ define the I/O pathway from observations and actions to the next observable state.

Notation. We use $h_t$ for the NC latent runtime state and reserve $z$ for VAE/video latents used in diffusion-style video models (e.g., Section 3.2).

This update-and-render loop can be described using world-model terminology, where $x_t$ are observations and $u_t$ provides conditioning. In that terminology, the input sequence ${u_t}$ is referred to as a conditioning stream. This view supplies practical machinery for the current prototype, but an NC is not merely a predictor of interface dynamics: it is a learned runtime mechanism in which the latent state $h_t$ carries executable context, $F_\theta$ integrates new observations and inputs, and $G_\theta$ renders the next frame. Auxiliary heads can encode and decode prompts, buffers, or action traces, shifting functionality that would traditionally live in OS queues, device drivers, and UI toolkits into latent-state dynamics.

2.1 Related Work

Early neuromorphic designs ([7]) explored neural computation as a physical substrate. Differentiable memory and program-execution architectures, including fast weight programmers ([8, 9, 10]), Neural Turing Machines ([1]), Differentiable Neural Computers ([2]), and Neural Programmer-Interpreters ([11]), showed that neural controllers with memory can execute structured procedures. Differentiable world models ([12, 13]) learn neural representations of environment dynamics, and inspire our update-and-render formulation. Latent video and world models ([3, 14, 15, 6]) apply these ideas to embodied control and interactive environments. Genie 3 ([6]), in particular, frames such models as agent-training substrates with improved physical consistency. More recently, high-capacity generators such as Veo 3 ([4]) and Sora 2 ([5]) emphasize open-ended, photorealistic simulation. In parallel, systems such as NeuralOS ([16]) and Imagine with Claude ([17]) bring model-based conditioning to desktop and DOM-style interfaces. Building on this trajectory, we study two NC instantiations for CLI and GUI with interface-specific conditioning, supported by a shared data engine and a staged roadmap toward the CNC vision.

3. Implementation of Neural Computers

Section Summary: Researchers have developed neural computers, or NCs, by enhancing an advanced video generation model called Wan2.1 to simulate computer interfaces like command-line terminals and graphical user interfaces. These NCs use prompts or recorded action sequences to predict and generate future frames of the interface in an open-loop setup, meaning they don't interact live with real software but replay logged behaviors. For the command-line version, called CLIGen, they created two datasets: one from diverse real-world terminal recordings using public tools like asciinema, and another from controlled, scripted sessions in isolated environments to ensure clean and repeatable data for training.

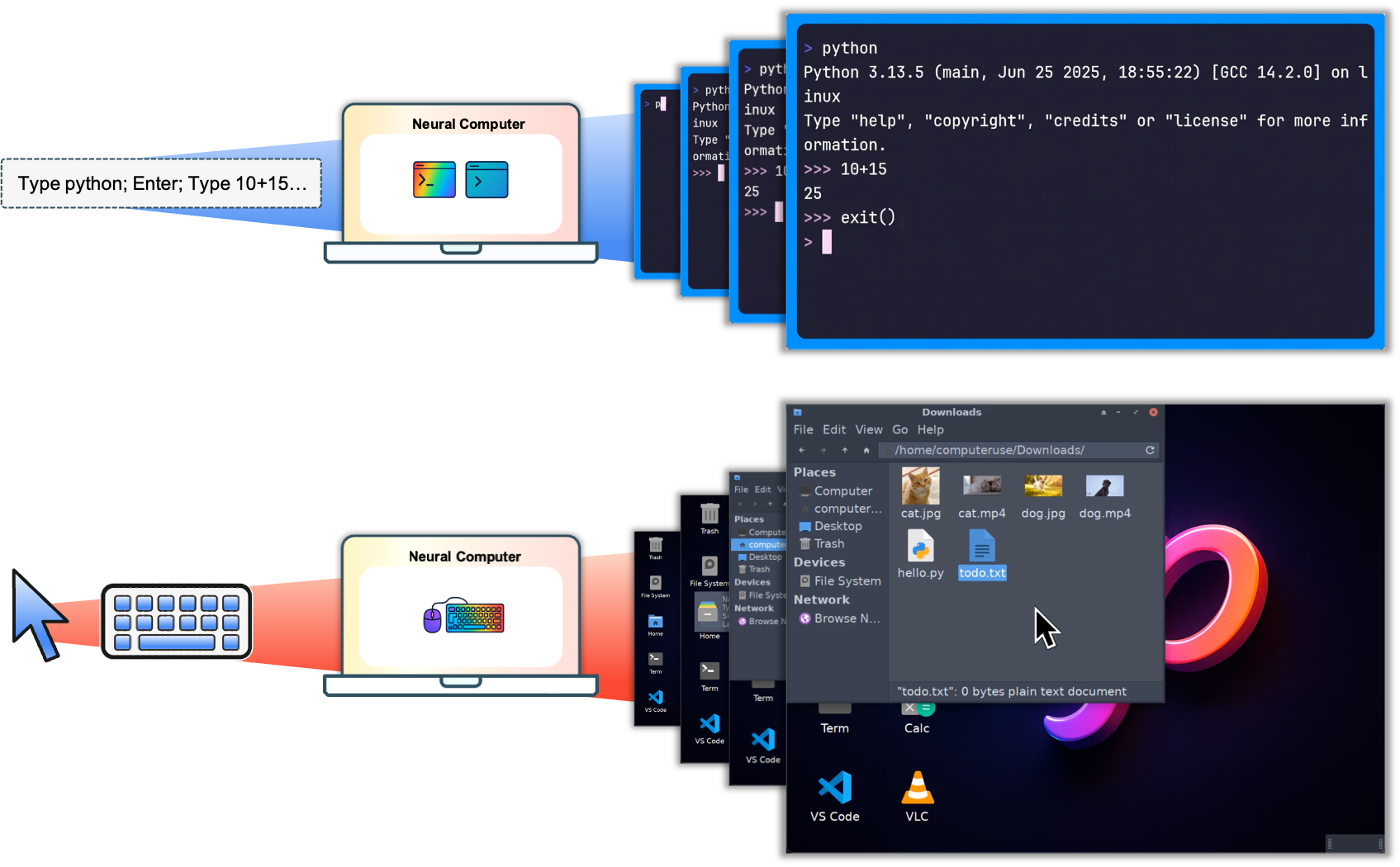

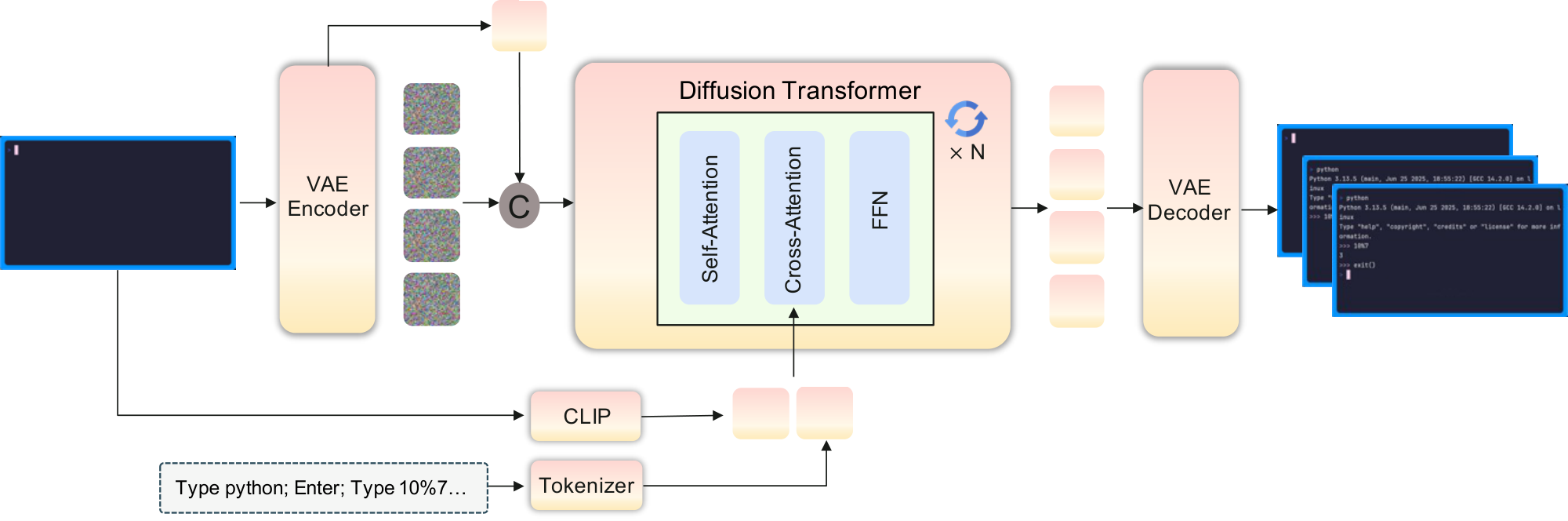

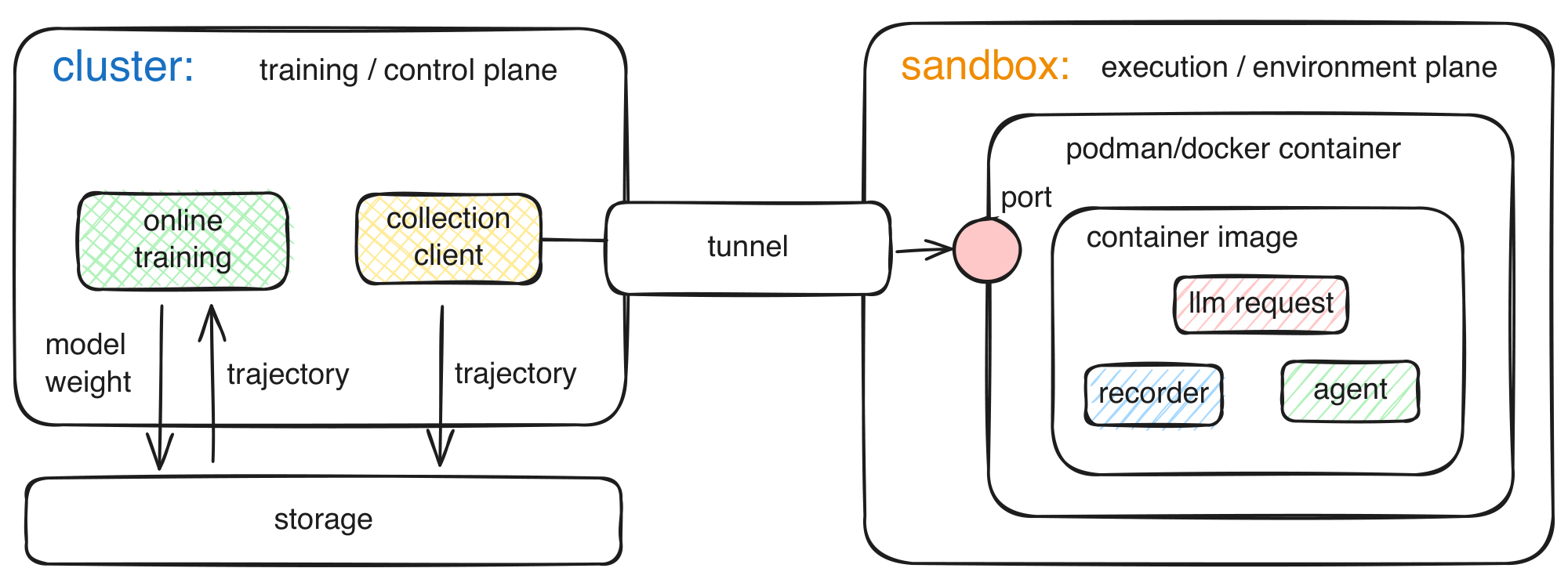

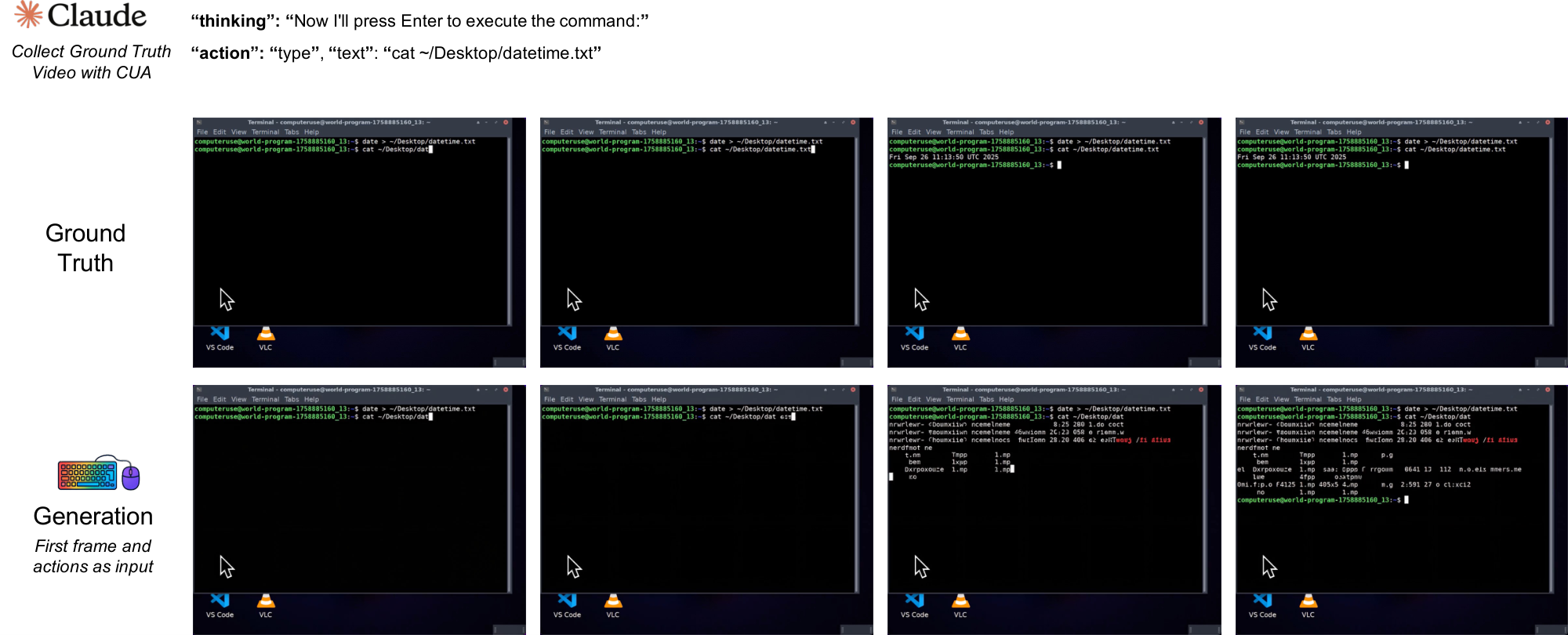

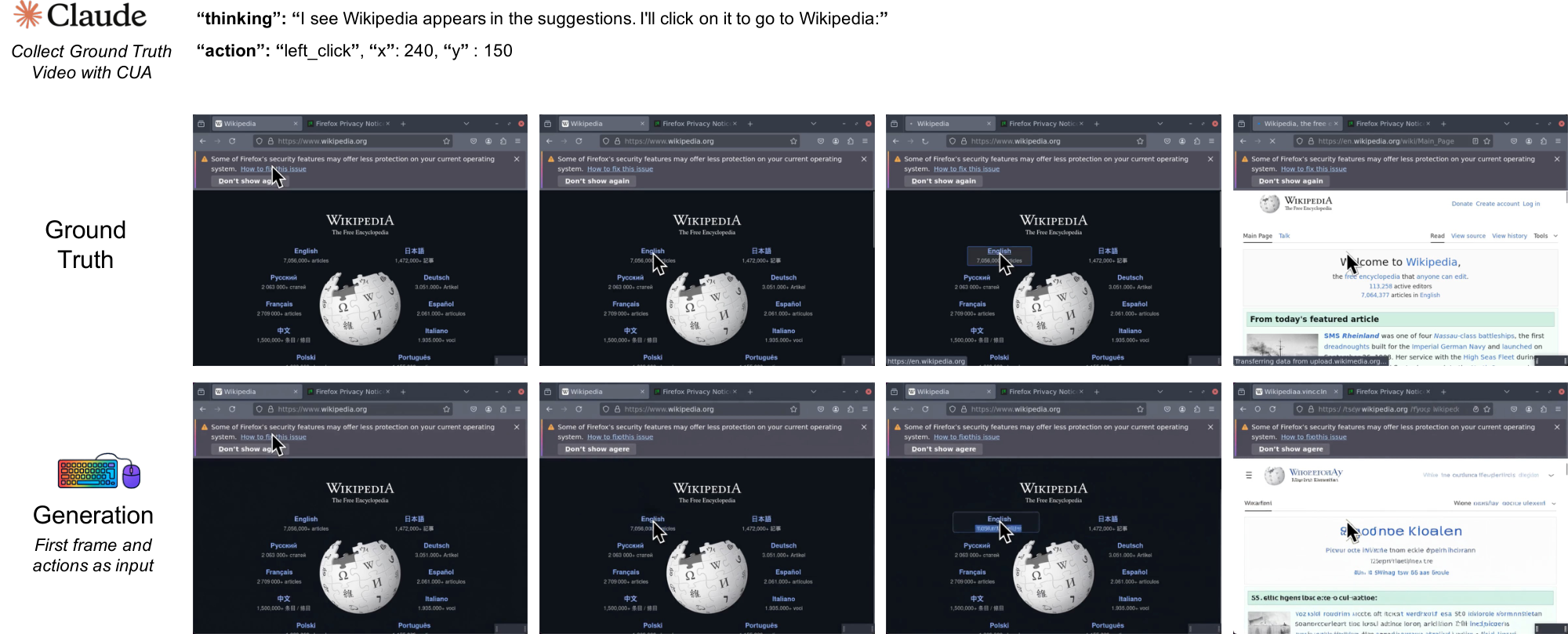

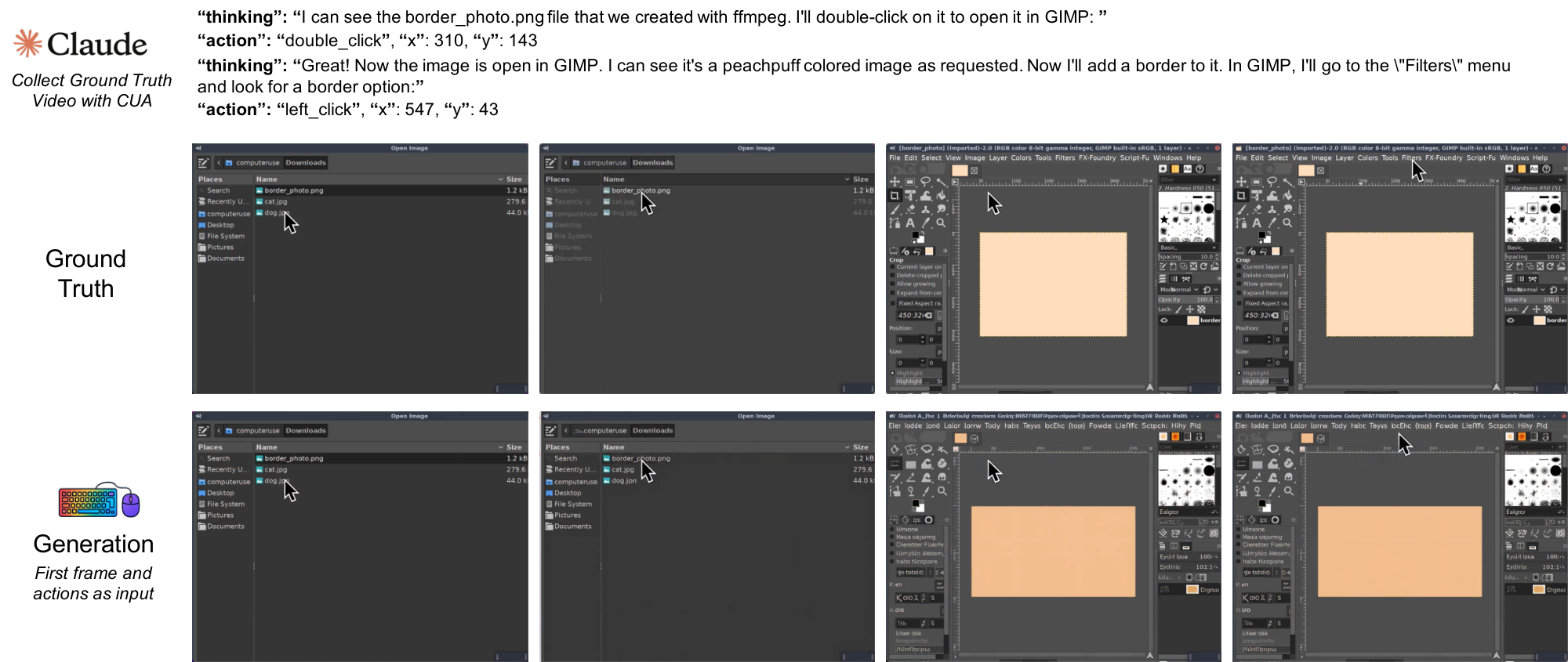

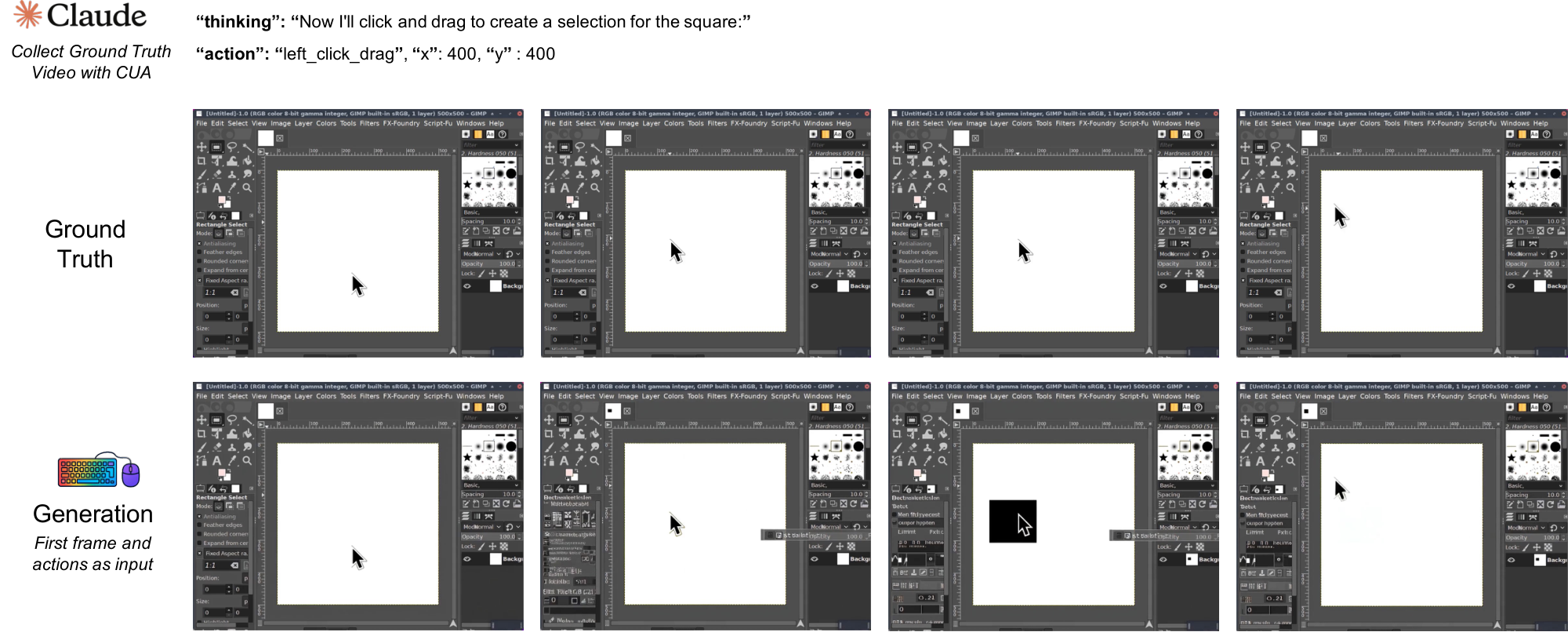

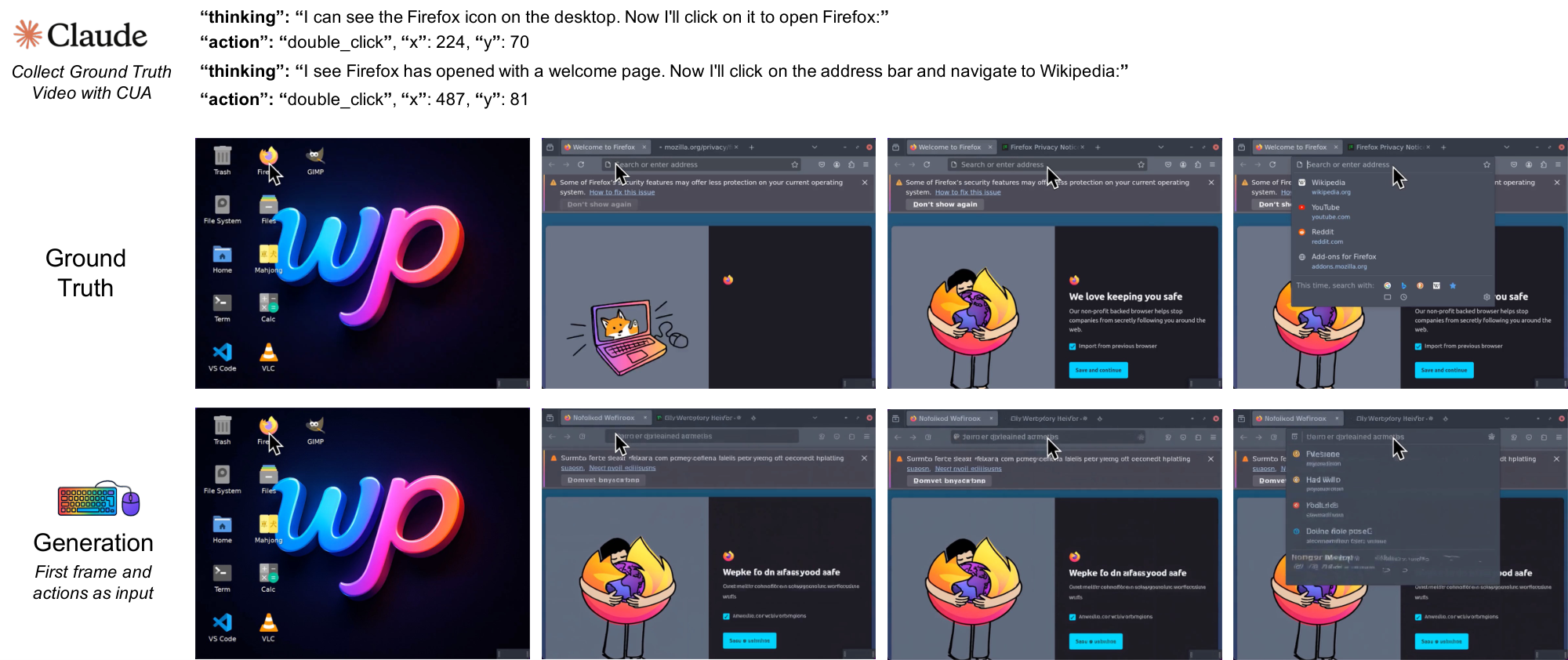

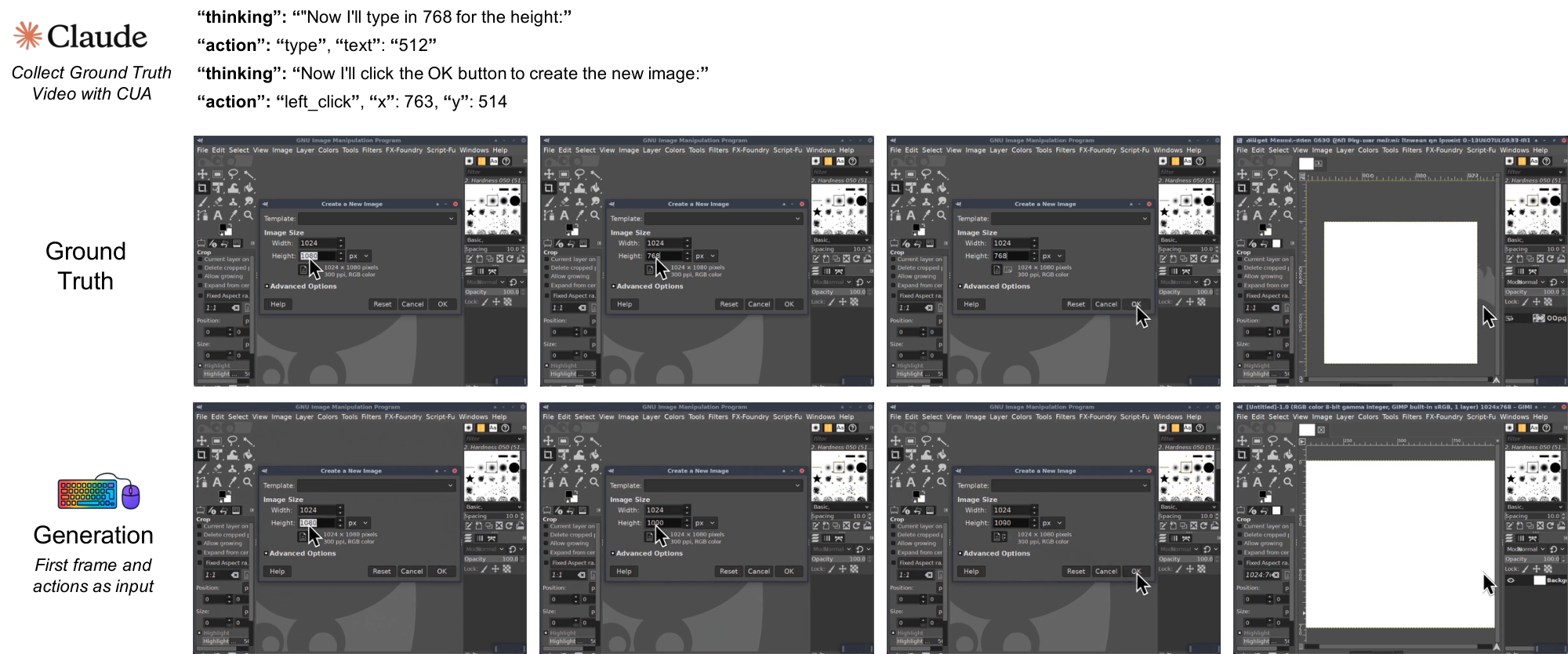

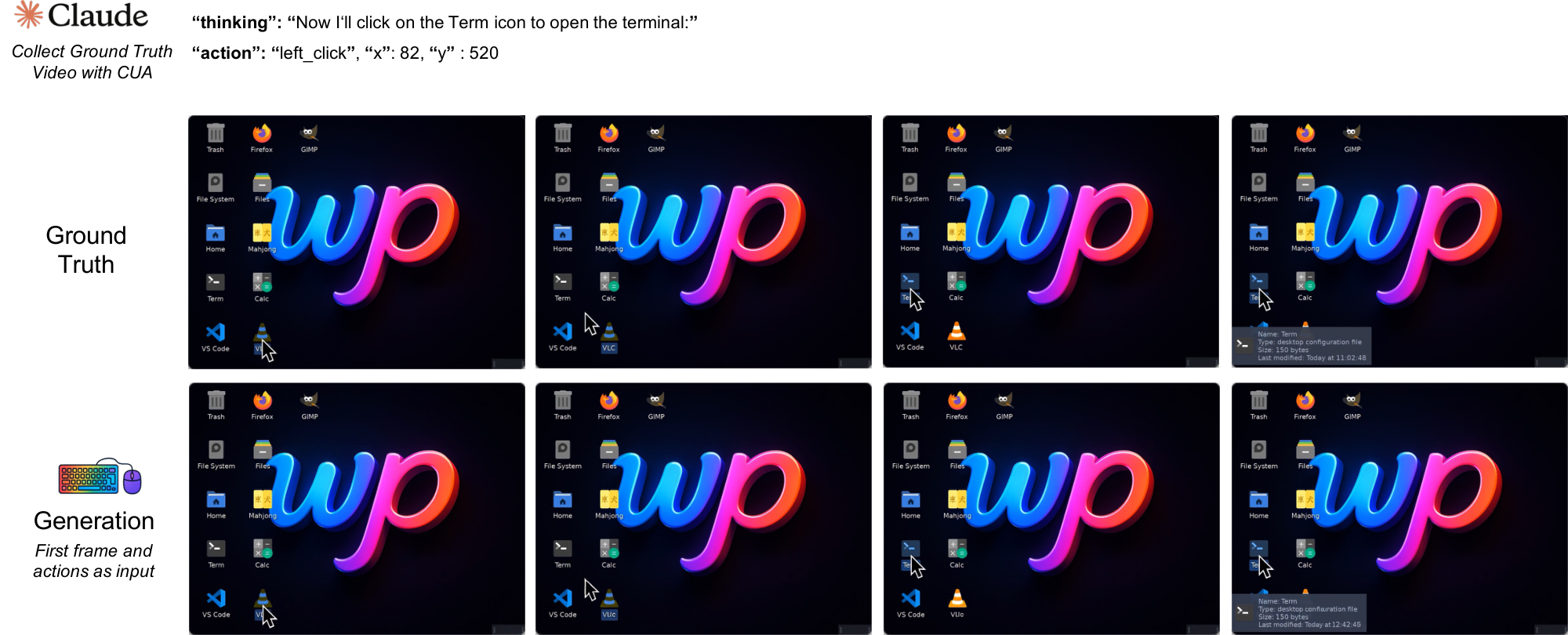

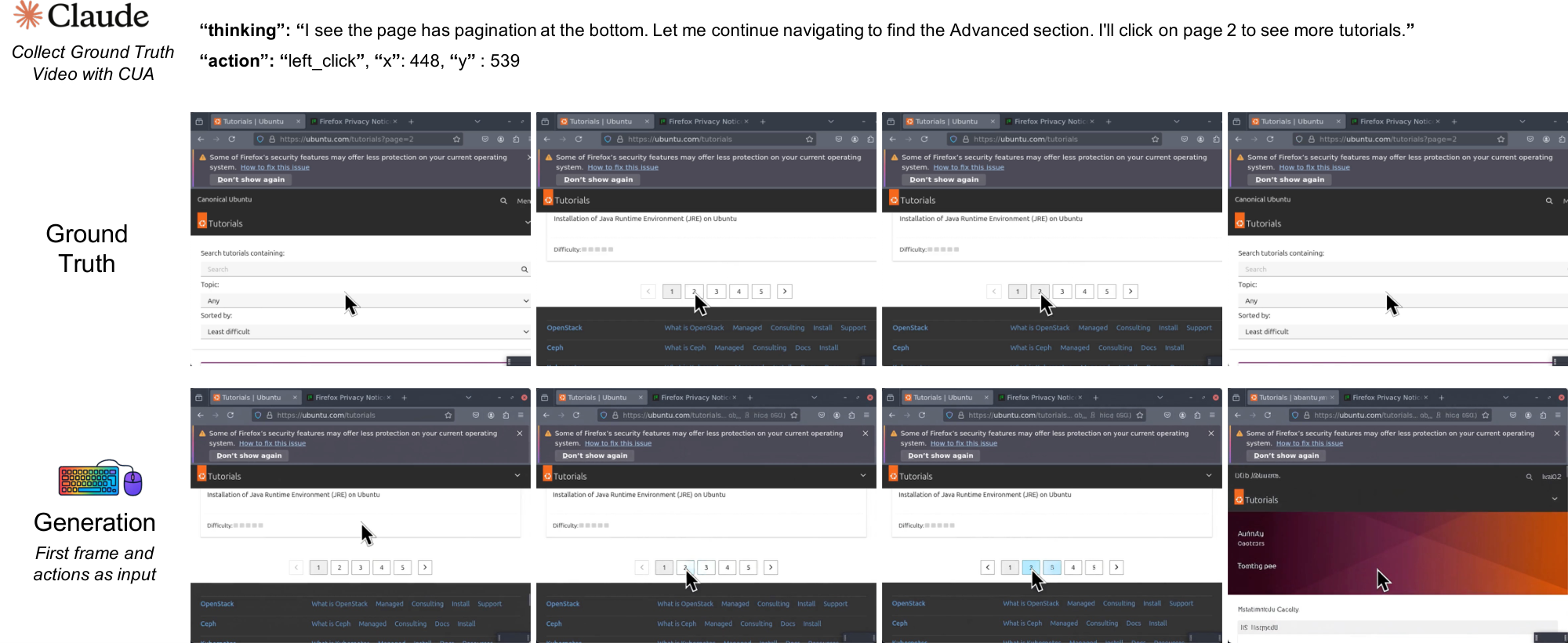

We build on the Wan2.1 model ([18]), which was a state-of-the-art video generation model at the time of our experiments. We add NC-specific conditioning and action modules, together with interface-specific training recipes. Figure 1 illustrates this setup: NCs take a prompt or action stream as input and generate future interface frames in both CLI and GUI settings. In the present prototypes, these prompts and actions are logged conditioning streams, so evaluation remains open-loop rather than closed-loop interaction with a live environment. We refer to these two instantiations as CLIGen (CLI; Section 3.1) and GUIWorld (GUI; Section 3.2).

In this video-based instantiation, the NC latent runtime state $h_t$ is realized by the model’s time-indexed video latents $z_t$. Under this abstraction, the diffusion transformer acts as the state-update map: it consumes prior latents together with the current observation and conditioning inputs, and produces the updated state $h_t$ (realized as $z_t$). The decoder $G_\theta$ parameterizes a distribution over the next frame $x_{t+1}$. Auxiliary heads encode and decode conditioning streams $u_t$, including text prompts and action traces. Structured logs such as terminal buffers are used for alignment and evaluation where available, not as privileged model-state inputs.

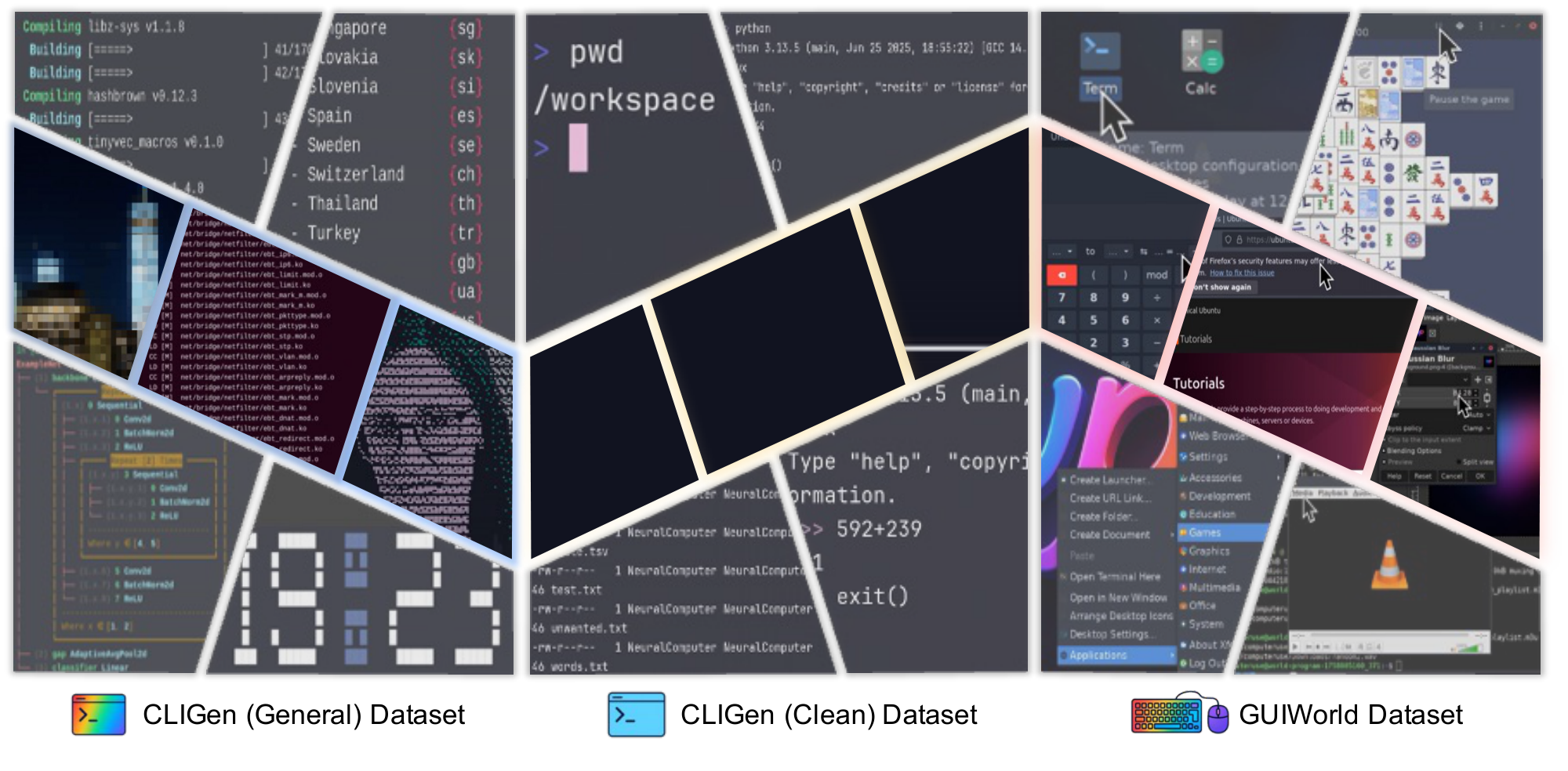

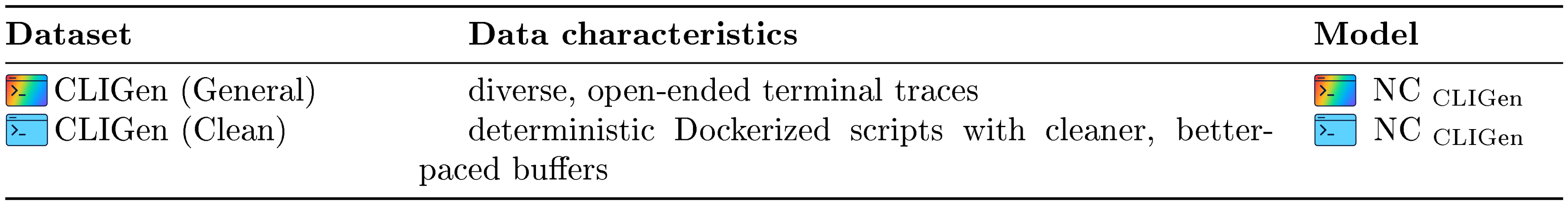

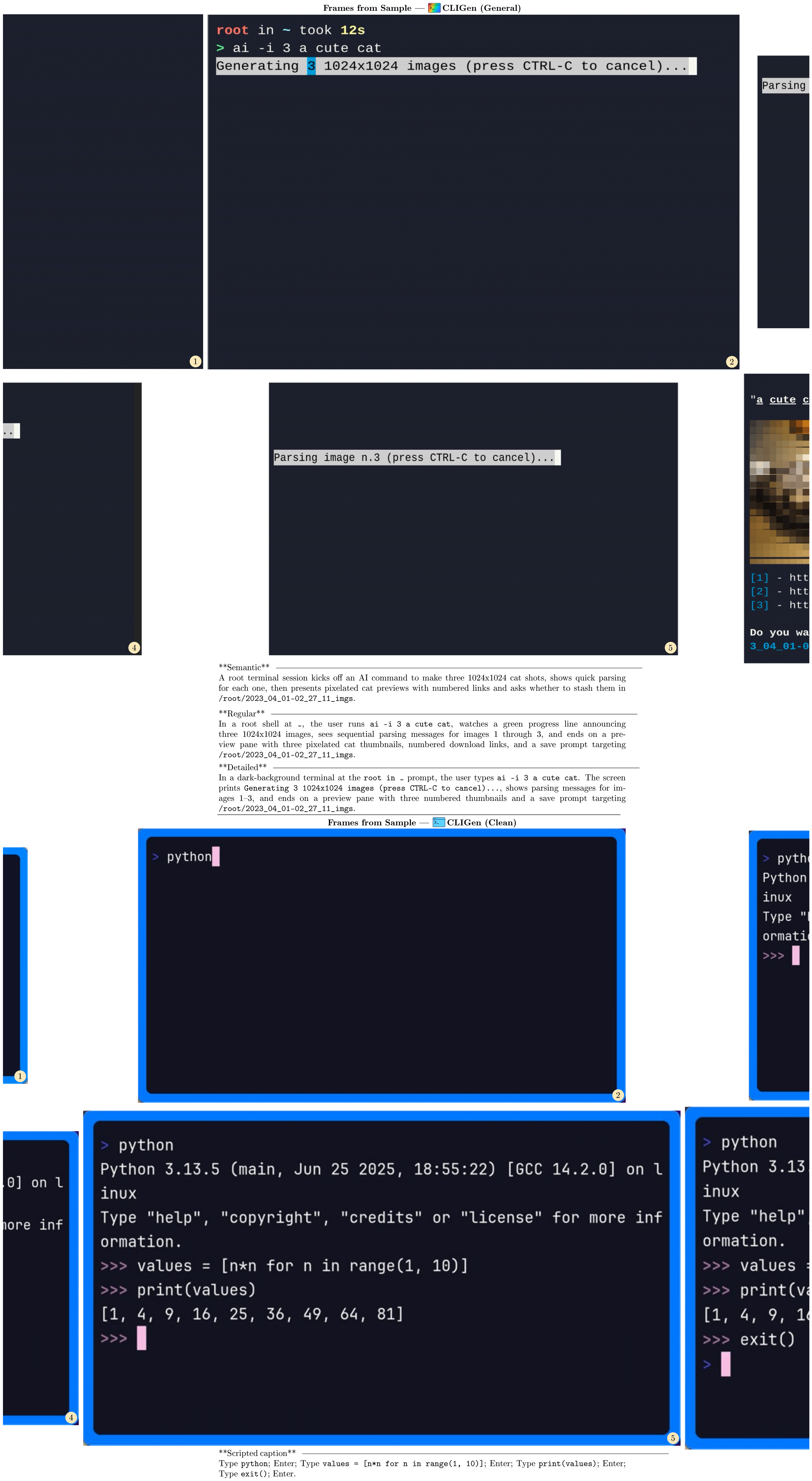

3.1 cligenGeneralLogo / cligenCleanLogo The CLI Video Generators

CLIGen instantiates the NC abstraction in command-line interfaces. Observations $x_t$ are terminal frames rendered from the underlying text buffer. The conditioning stream $u_t$ carries a user prompt and optional metadata, and the video latent state $z_t$ implements the latent runtime state $h_t$ by tracking CLI context across frames. At inference time, the model rolls out from the prompt and first frame, updates $z_t$, and predicts future terminal frames (Figure 3). We use two CLI datasets: cligenGeneralLogo CLIGen (General), which contains diverse, open-ended terminal traces, and cligenCleanLogo CLIGen (Clean), which contains deterministic Dockerized traces. We train one NC $_\text{CLIGen}$ model per dataset under the same architecture.

::: {caption="Table 1"}

:::

% caption, label

::: {caption="Table 2: Data samples for cligenGeneralLogo CLIGen (General) and cligenCleanLogo CLIGen (Clean)."}

:::

3.1.1 Data pipeline

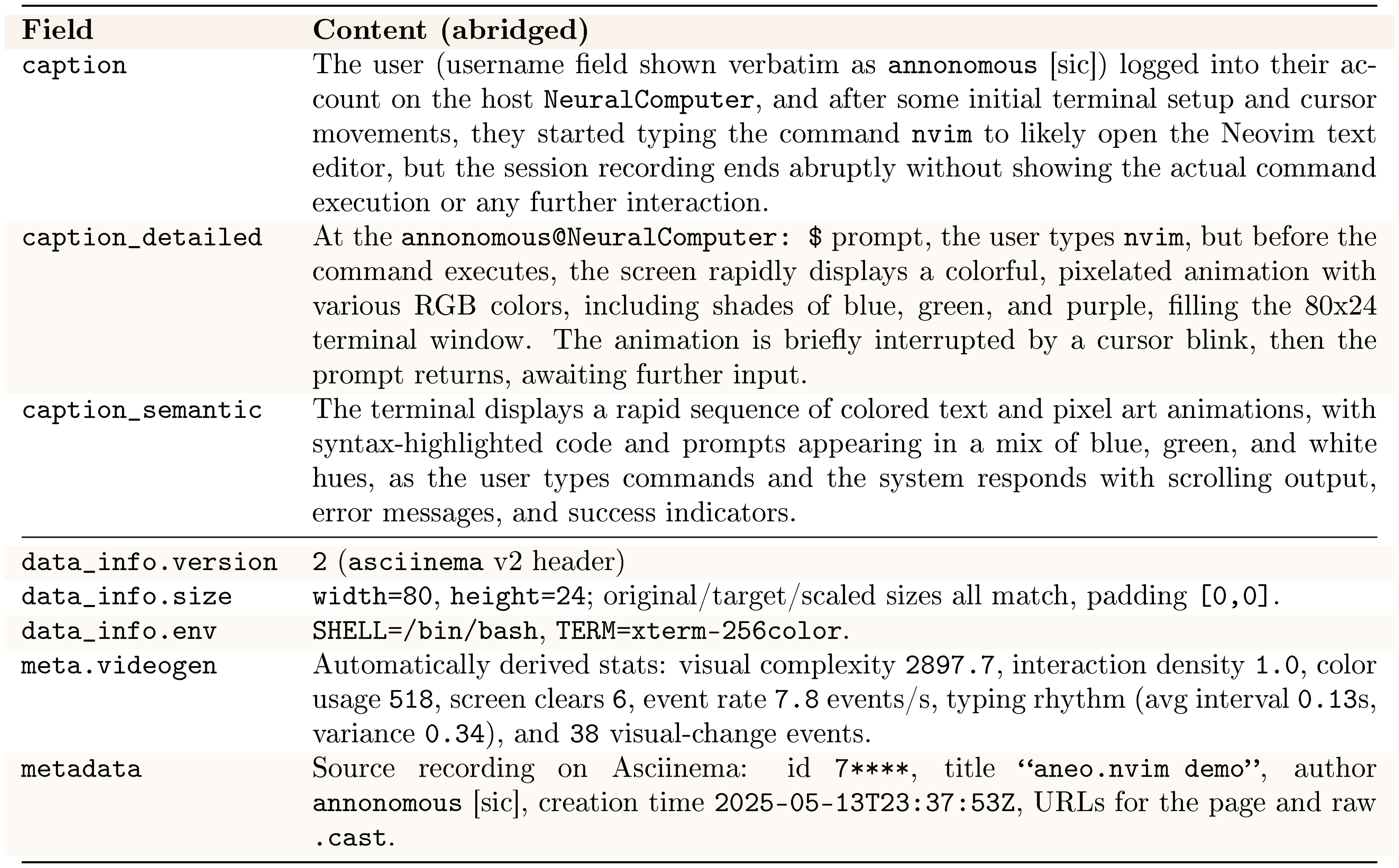

The cligenGeneralLogo CLIGen (General) dataset is built from publicly available asciinema .cast trajectories^2. The asciinema stack records and replays terminal sessions with synchronized timing and ANSI-faithful decoding. We replay each session with the official tools and render it into terminal frames, preserving palette transitions, cursor state, and terminal geometry. Frames, text buffers, and keyboard-event logs share a single monotonic clock. At render time, we normalize resolution and aspect ratio and apply a filter to remove sensitive strings. We render sessions to GIF using and convert them to video with .

We segment each recording into roughly five-second clips using content-aware splits. We temporally normalize each clip to a fixed length: shorter clips repeat the final frame, and longer clips are uniformly subsampled. The resulting 823,989 video streams (approximately 1,100 hours) are resampled to 15 FPS. Underlying buffers and logs are used to generate aligned textual descriptions with Llama 3.1 70B ([19]) in three styles (semantic, regular, and detailed), which serve as prompts. As shown in Figure 2 (left), this split spans diverse real-world terminal use cases[^3].

[^3]: Additional preprocessing details and a .cast example are in Appendix B and Appendix C.1, with a sample overview in Table 2.

The cligenCleanLogo CLIGen (Clean) dataset is collected using the open-source toolkit. It enables repeatable terminal demonstrations and integration tests through scripted execution. Deterministic scripts drive Dockerized environments to capture cleaner, better-paced traces. We authored roughly $250$ k scripts. After filtering (51.21% retained), we keep two subsets. The first contains approximately $78$ k regular traces (package installation, log filtering, interactive REPL usage, etc.). The second contains approximately $50$ k Python math validation traces. Captions are derived directly from the raw vhs scripts for clarity. We standardize frame rendering by fixing one monospace font/size, using a consistent palette for success and error highlights, and locking resolution and theme to remove typography-related confounds. Each episode records its caption type and font settings for later slicing. Clips longer than five seconds are uniformly subsampled for training, while shorter clips repeat the final frame to normalize length[^4].

[^4]: Additional details are provided in Appendix B and Appendix C.2, with a representative data sample in Table 2.

3.1.2 Model architecture

We treat CLI generation as text-and-image-to-video: a caption and the first terminal frame condition the rollout. The first frame is encoded by a VAE into a conditioning latent. In parallel, a CLIP image encoder ([20]) extracts visual features from the same frame, and a text encoder (e.g., T5 ([21])) embeds the caption. Following the Wan2.1 image-to-video (I2V) design, these conditioning features are concatenated with diffusion noise, projected through a zero-initialized linear layer, and processed by a DiT stack. Decoupled cross-attention injects the joint caption and first-frame context derived from the CLIP and text features. The VAE encodes and decodes terminal frames. During generation, the diffusion transformer advances the latent state $z_t$ under the original Wan2.1 I2V sampling schedule, without additional binary masks or periodic reseeding.

3.1.3 Implementation Details

Training uses gradient checkpointing and applies dropout 0.1 to the prompt encoder, CLIP, and VAE modules. Optimization uses AdamW (learning rate $5\times10^{-5}$, weight decay $10^{-2}$), bfloat16 precision, and gradient clipping at 1.0. Training NC $_\text{CLIGen}$ on CLIGen (General) requires $\sim$ 15,000 H100 GPU hours at batch size 1. Training on CLIGen (Clean) across both subsets requires $\sim$ 7,000 H100 GPU hours.

3.1.4 Evaluations

Unless otherwise noted, NC in this section refers to the current video-based CLI prototype. We report six practical takeaways:

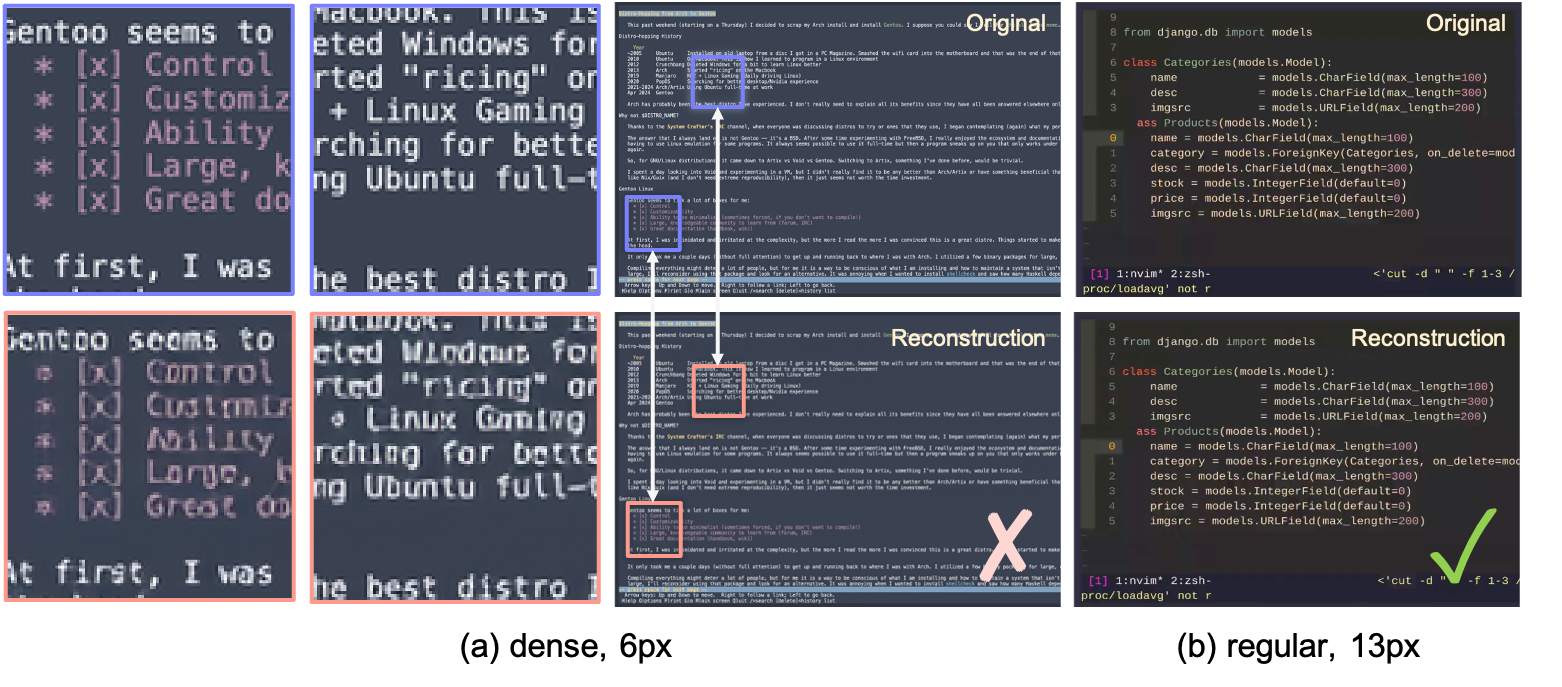

1. The NC maintains high-fidelity terminal rendering at practical font sizes (e.g., 13 px), preserving readable interface state.

2. Prompt specificity is an effective control channel: detailed, literal captions improve text-to-pixel alignment.

3. On clean but domain-specific data, global PSNR/ $\textsc{SSIM}$ plateau around 25k steps (Figure 5), indicating early saturation in reconstruction metrics rather than a complete halt in learning.

4. The NC reproduces complex terminal appearances while sustaining coherent short-horizon command rollouts under fixed conditioning.

5. Symbolic computation remains the main bottleneck: structured arithmetic reveals reliability limits, motivating stronger symbolic or system-level conditioning.

6. In our setting, without changing the NC backbone or adding RL, reprompting improves symbolic probes (4% $\rightarrow$ 83%; Figure 6), reinforcing the view that current models are strong renderers and conditionable interfaces rather than native reasoners (Table 7).

cligenGeneralLogo Experiment 1: The NC stays readable at practical font sizes

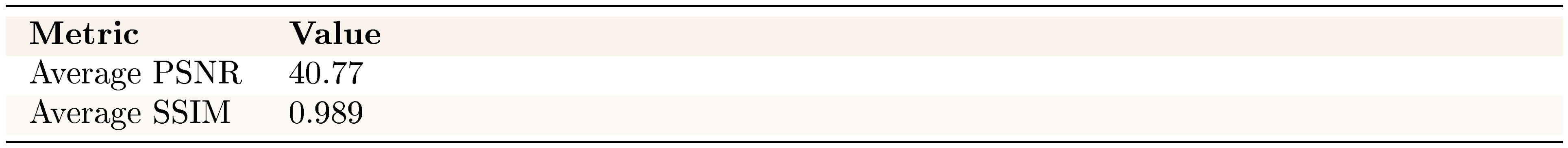

::: {caption="Table 3: Reconstruction quality."}

:::

Concurrent work ([16]) argues that generic natural-image VAEs can perform poorly on structured computer screenshots. We test this claim directly by applying the Wan2.1 VAE ([18]) to terminal content. In our setting, reconstruction quality is primarily governed by font size. At 13 px, it is high (40.77 dB $\textsc{PSNR}$, 0.989 $\textsc{SSIM}$). At 6 px, text exhibits noticeable blurring even when global $\textsc{PSNR}$ / $\textsc{SSIM}$ remain strong, because background regions dominate these metrics.

However, a sweep over CLIGen (General) frames shows that this effect is confined to extreme cases (Figure 4). Very small 6 px fonts and ultra-dense text exhibit localized blurring despite high global $\textsc{PSNR}$. In contrast, the 13 px terminal font used in CLIGen remains visually sharp across panes and commands. These results indicate that the VAE is adequate for regular CLIGen usage and highlight that sensible font choices help ensure stable NC training.

cligenCleanLogo Experiment 2: Performance plateaus early and can degrade with prolonged training

On clean but domain-specific structured interfaces, global reconstruction metrics improve rapidly early and then show limited additional gains under the current training objective. In CLIGen (Clean), $\textsc{PSNR}$ / $\textsc{SSIM}$ plateau quickly, suggesting that further optimization becomes bottlenecked less by model capacity than by the quality and pacing of the available supervision. After the early gains, the remaining errors are often tied to artifact-prone signals (e.g., rendering glitches or rapid screen changes that disrupt temporal alignment), so additional training on the same objective can yield diminishing or even slightly unstable returns in these perceptual metrics.

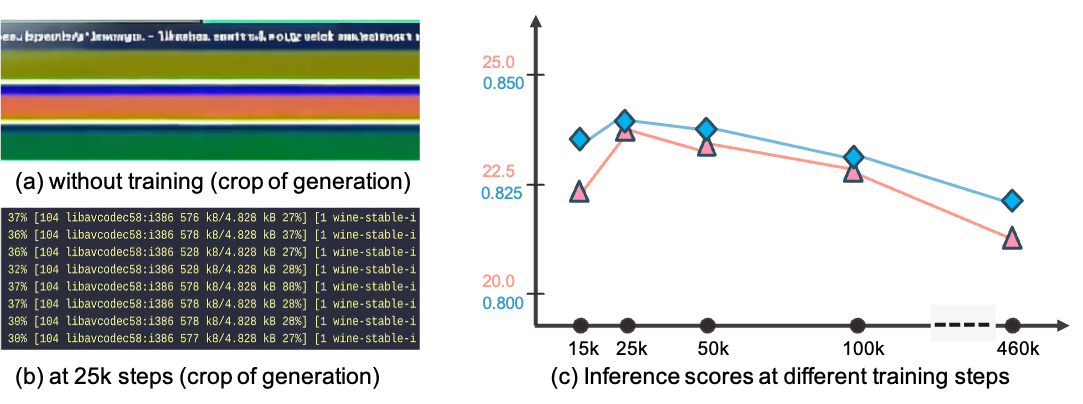

Panels (a–b) illustrate the effect of training on CLIGen data. Without CLIGen fine-tuning, Wan2.1 produces garbled terminal outputs (a). After 25k steps, the model generates readable text with consistent formatting and color cues (b).

Figure 5 plots the corresponding $\textsc{PSNR}$ / $\textsc{SSIM}$ curves and shows that these global perceptual metrics flatten around 25k steps. They improve little with further training up to 460k steps, and extended optimization can even slightly reduce them. One plausible explanation is that most learnable structured patterns are acquired early, and further gains require higher-quality, better-paced, or more informative supervision.

cligenGeneralLogo Experiment 3: Literal captions drive rendering accuracy

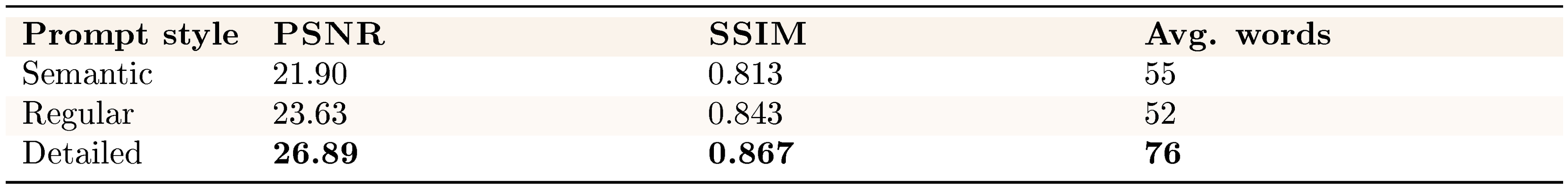

Caption specificity has a strong effect on terminal rendering quality. As shown in Table 4, detailed, literal descriptions improve reconstruction fidelity. $\textsc{PSNR}$ increases from 21.90 dB (semantic) to 26.89 dB (detailed), a gain of nearly 5 dB, compared to less specific, high-level semantic descriptions.

The three caption tiers correspond to the same underlying terminal sequence but differ in length and granularity. Semantic captions (average 55 words) provide high-level summaries (e.g., "a terminal session generates three cat images"). Regular captions (average 52 words) include key commands and outputs (e.g., ai -i 3 a cute cat, status messages). Detailed captions (average 76 words) transcribe screen content more exhaustively, including exact text, colors, and formatting.

::: {caption="Table 4: Caption styles versus TI2V fidelity."}

:::

This progression helps explain why literal descriptions are particularly effective for terminal rendering. Unlike natural images, which are dominated by global style patterns, terminal frames are governed primarily by text placement. Detailed captions act as scaffolding—explicitly specifying which tokens appear where—thereby enabling precise text-to-pixel alignment.

cligenCleanLogo Experiment 4: Neural computers achieve accurate character-level text generation

::: {caption="Table 5: OCR accuracy versus training."}

:::

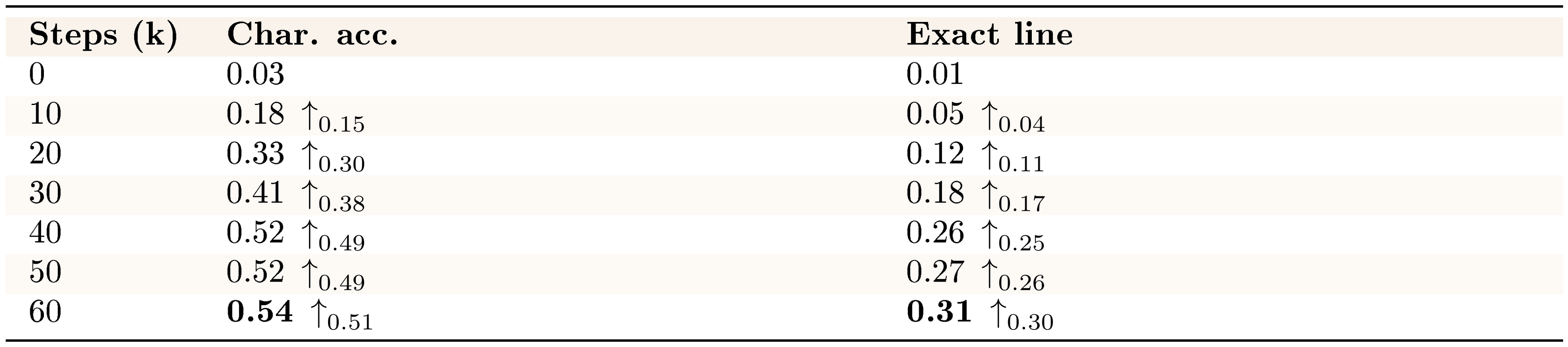

Beyond PSNR and SSIM, character-level accuracy is a more direct metric for terminal rendering. Character-level accuracy requires explicit pixel-to-text correspondence. For CLIGen (Clean), we apply Tesseract to five uniformly sampled (ground-truth, generated) frame pairs per video and normalize whitespace. We then compute two metrics (full protocol in Appendix B). Character accuracy uses the Levenshtein distance between concatenated ground-truth and generated texts. Exact-line accuracy measures the fraction of ground-truth lines whose normalized content exactly matches the prediction at the same line index.

Table 5 shows that our models achieve substantial text rendering accuracy under this protocol. Character accuracy increases from 0.03 at initialization to 0.54 at 60k steps, with exact-line matches reaching 0.31 (0.26 by 40k). Most gains occur within the first 40k steps, followed by smaller refinements thereafter. These OCR-based metrics capture properties beyond perceptual similarity. Accurately generating terminal characters requires modeling text structure, font rendering, and spatial relationships. These are core competencies for interactive neural computer systems. This level of character-level precision is a step toward usable, not just plausible, terminal interfaces. At the same time, we interpret this result primarily as evidence of interface fidelity, while routine reuse and native symbolic computation remain separate questions.

cligenCleanLogo Experiment 5: Does this NC instantiation show native CLI reasoning?

::: {caption="Table 6: Arithmetic probe accuracy (100 problems sampled from a 1,000-problem held-out pool)."}

:::

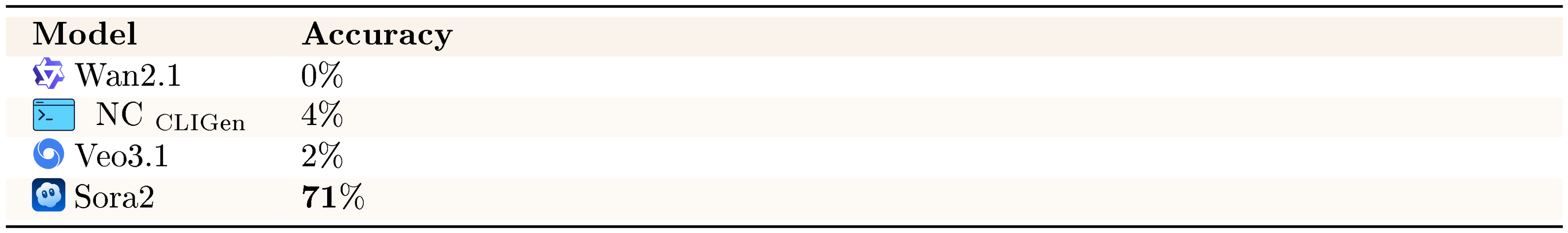

We also probe symbolic computation with CLI arithmetic tasks. These tasks are a sharp stress test for symbolic reliability: humans answer them instantly, yet current NC instantiations often fail on seemingly simple symbolic operations.

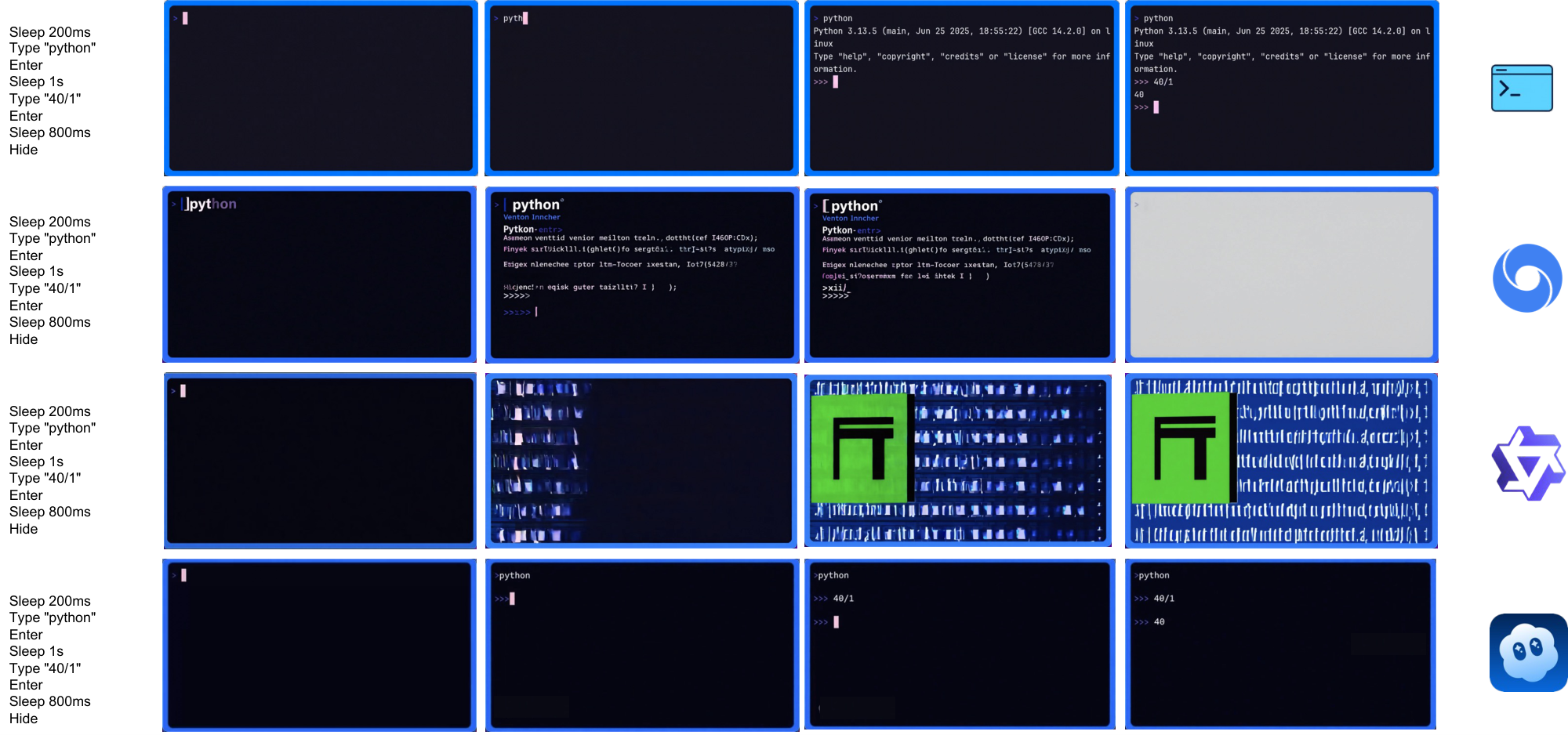

Our arithmetic probe presents basic mathematical operations through terminal interactions. We reserve a held-out pool of 1,000 math problems and randomly sample 100 problems as the final evaluation set. Table 6 shows that current video models, including this NC instantiation, struggle on these symbolic tasks. Wan2.1 achieves 0% accuracy, our NC $_\text{CLIGen}$ model reaches 4%, and Veo3.1 manages 2%—all far below human-level performance on these fundamental tasks. These results contrast with common claims of strong symbolic reasoning in current video models. Sora2's 71% accuracy is a notable outlier and may reflect system-level advantages or additional training beyond our current setup. Overall, native symbolic reasoning remains an open challenge for current video-based NC instantiations. Accordingly, arithmetic probes in this paper serve as a targeted test of symbolic stability under the current prototype substrate.

The poor arithmetic-probe performance in Table 6 raises a key question. Does this prototype require specialized reinforcement learning to achieve reliable symbolic computation, or can stronger conditioning substantially narrow this gap?

cligenCleanLogo Experiment 6: Does this NC instantiation require RL for symbolic probes?

As shown in Figure 6, NC $_\text{CLIGen}$ accuracy on CLIGen (Clean) arithmetic tasks rises from 4% to 83% under reprompting. This suggests that system-level conditioning can be an effective first lever for improving performance on symbolic probes. It is complementary to (rather than strictly requiring) RL-based training pipelines. More generally, the success of reprompting highlights how sensitive symbolic-probe outcomes are to the conditioning interface. Much of the apparent "reasoning" gain can come from better specification and instruction-following rather than new native computation. For the arithmetic subset, we include the correct answer explicitly in roughly half of the training captions to encourage reliable rendering of the output string. Because reprompting can similarly provide stronger hints (or even outsource computation to an external text system), we interpret the gain primarily as evidence of steerability. It also shows faithful rendering of conditioned symbolic content. We do not treat it as a clean demonstration that the NC backbone performs arithmetic internally.

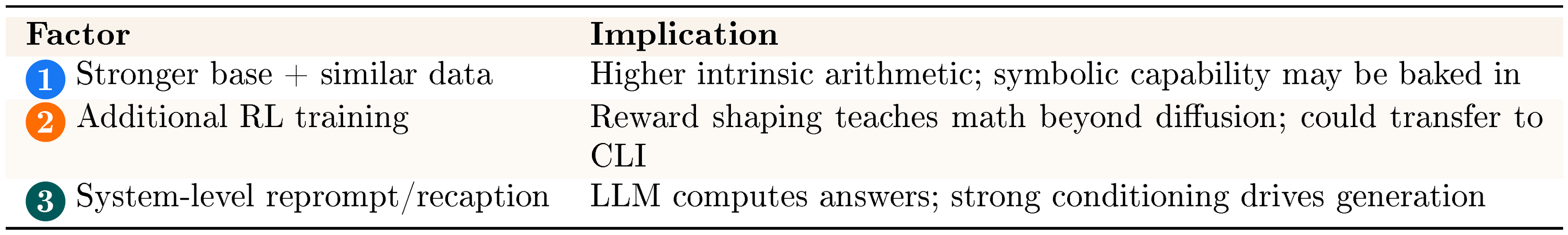

::: {caption="Table 7: Hypotheses for Sora2's advantage."}

:::

The evidence supports system-level conditioning as a practical path forward for this NC instantiation. Among the three hypotheses for improving arithmetic-probe performance—stronger base models, reinforcement learning, or enhanced conditioning—our results most strongly favor the third approach. The gain from reprompting (4% $\rightarrow$ 83%), achieved without modifying the underlying NC backbone, is substantial. It shows that measured "reasoning" on these probes is highly sensitive to specification and conditioning. We therefore do not treat it as direct evidence of native arithmetic inside the NC backbone.

In our setting, strategic conditioning yields larger symbolic-probe gains than the RL pipeline we tested. Evaluations should therefore distinguish native computation from conditioning-assisted performance when assessing reasoning capabilities in current video-based NC instantiations.

3.1.5 Visualizations

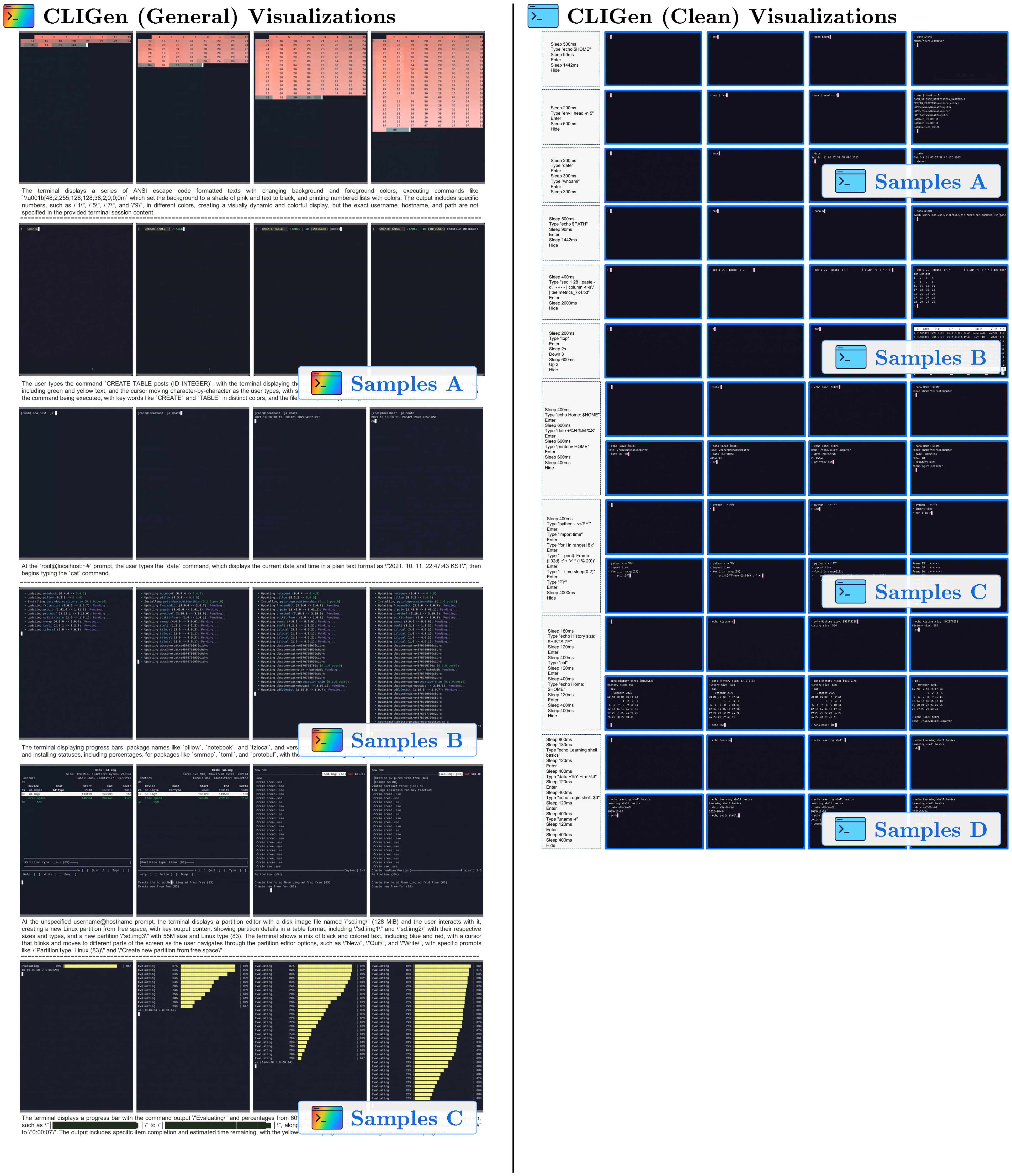

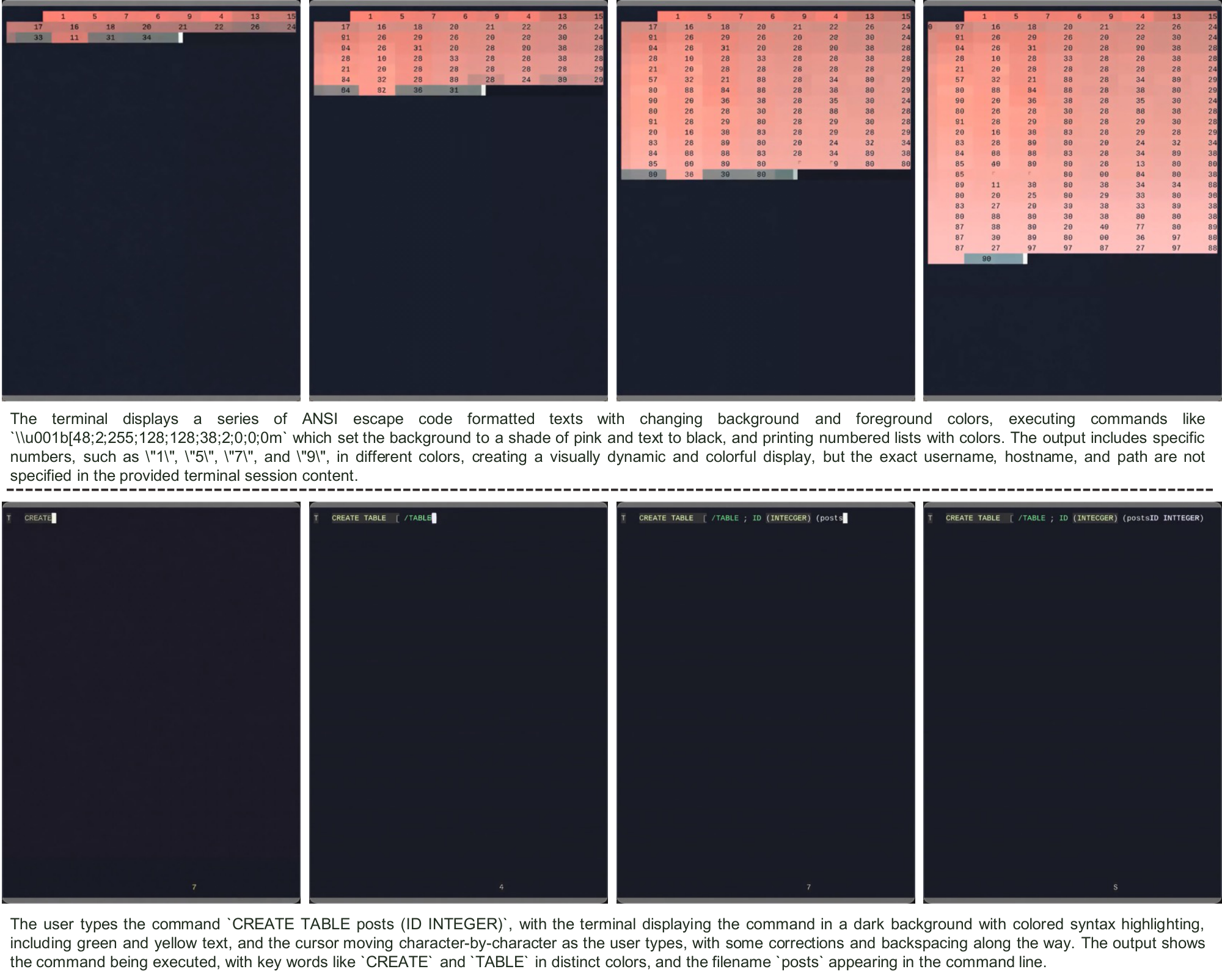

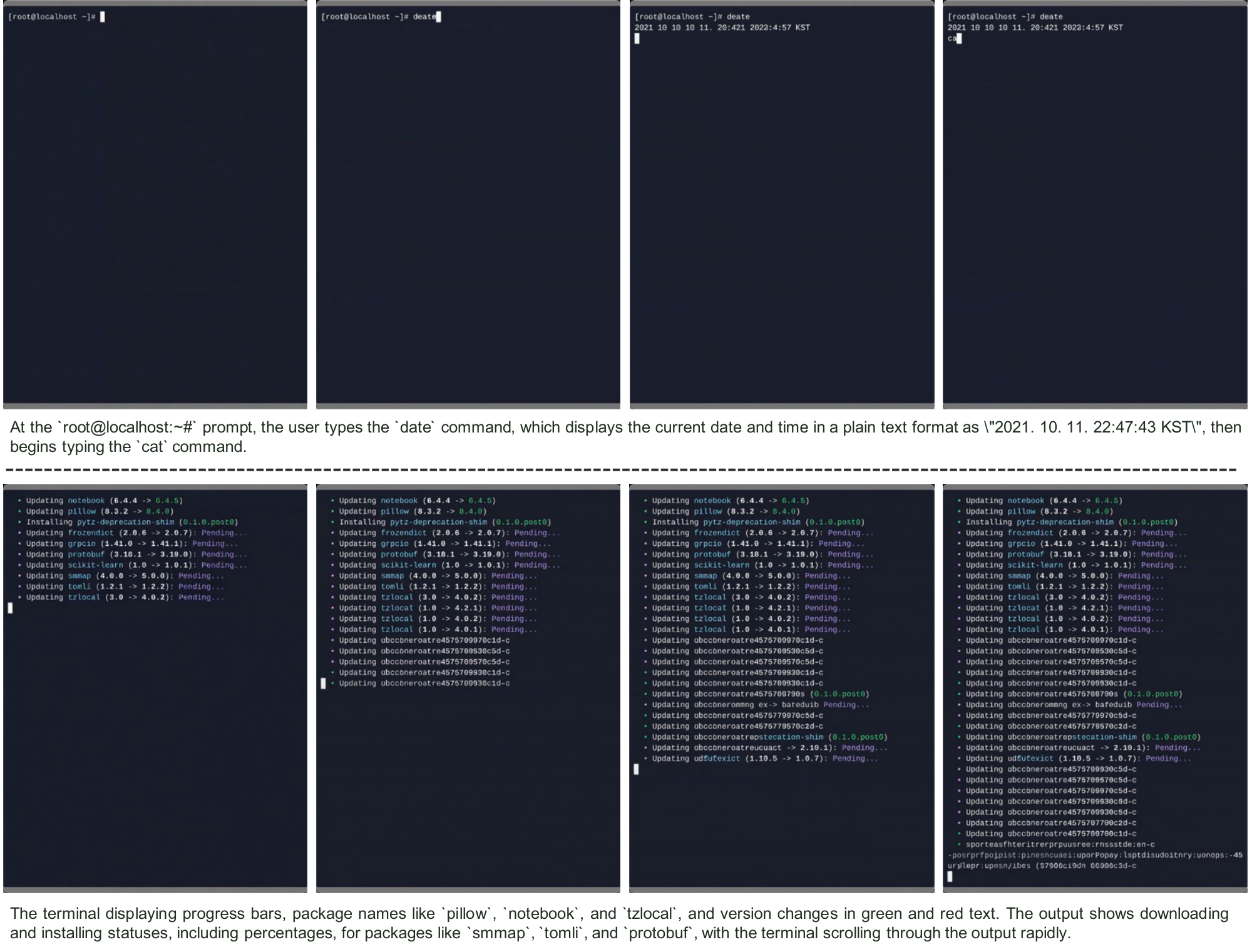

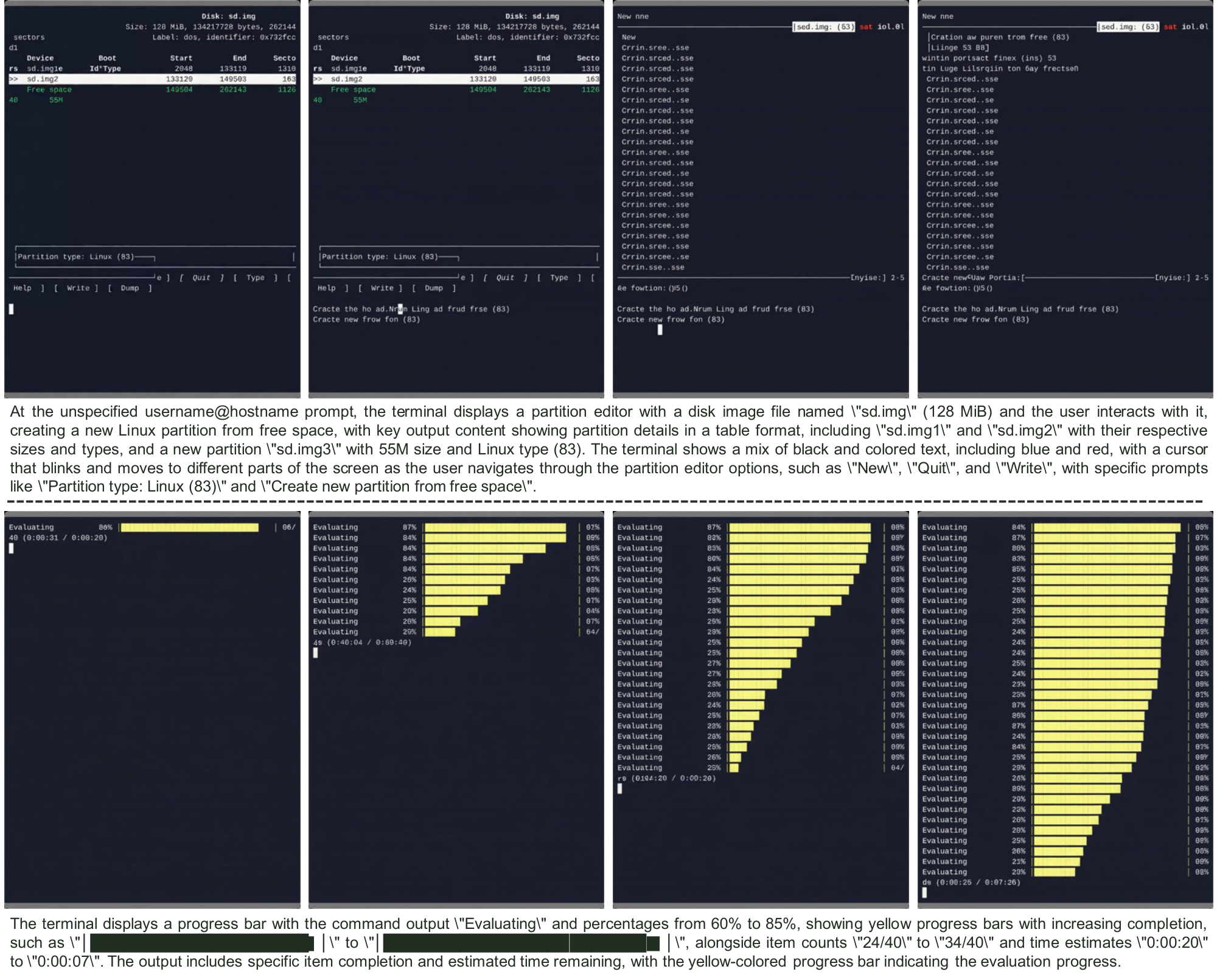

(1) CLIGen (General) visualizations. Qualitative samples highlight the breadth of real-world terminal dynamics captured in CLIGen (General): ANSI escape sequences that repaint regions with changing foreground/background colors, incremental command entry with syntax highlighting and cursor edits, classic shell prompts and system outputs, long-running jobs with rapidly scrolling and color-coded package logs, full-screen TUIs such as partition editors, and progress dashboards with updating bars, counts, and ETAs. These traces emphasize that "looking correct" requires maintaining terminal geometry, palette transitions, and cursor state frame-by-frame.

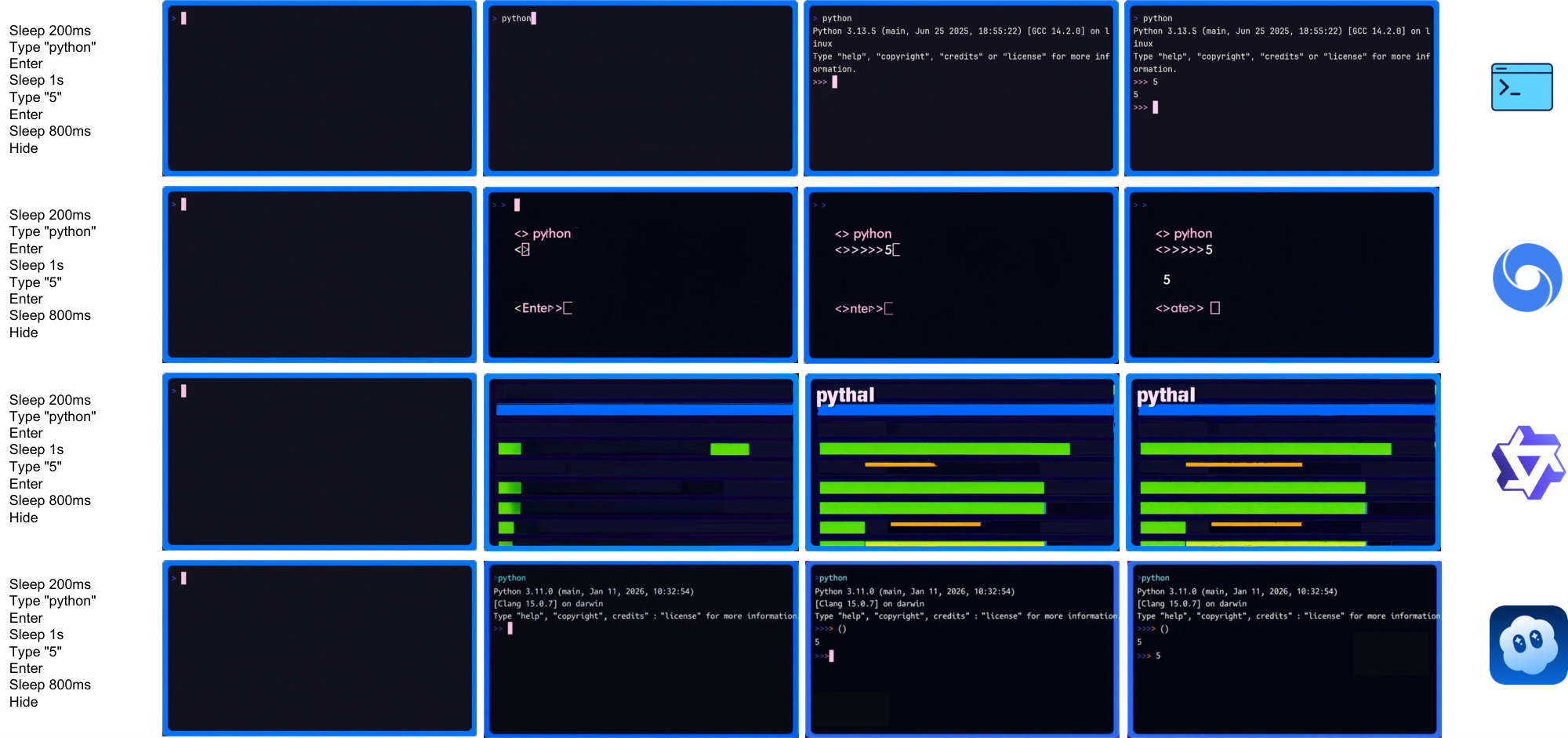

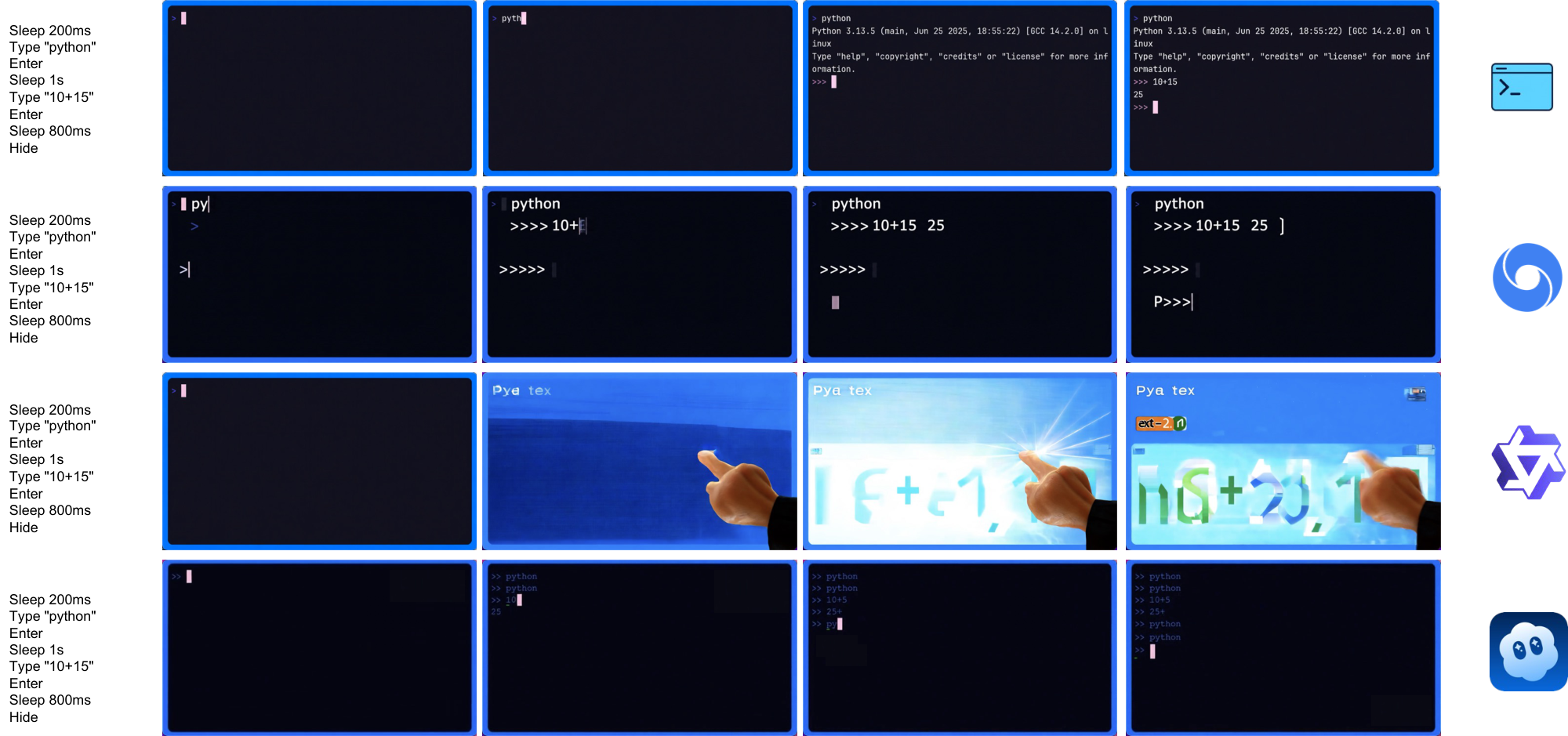

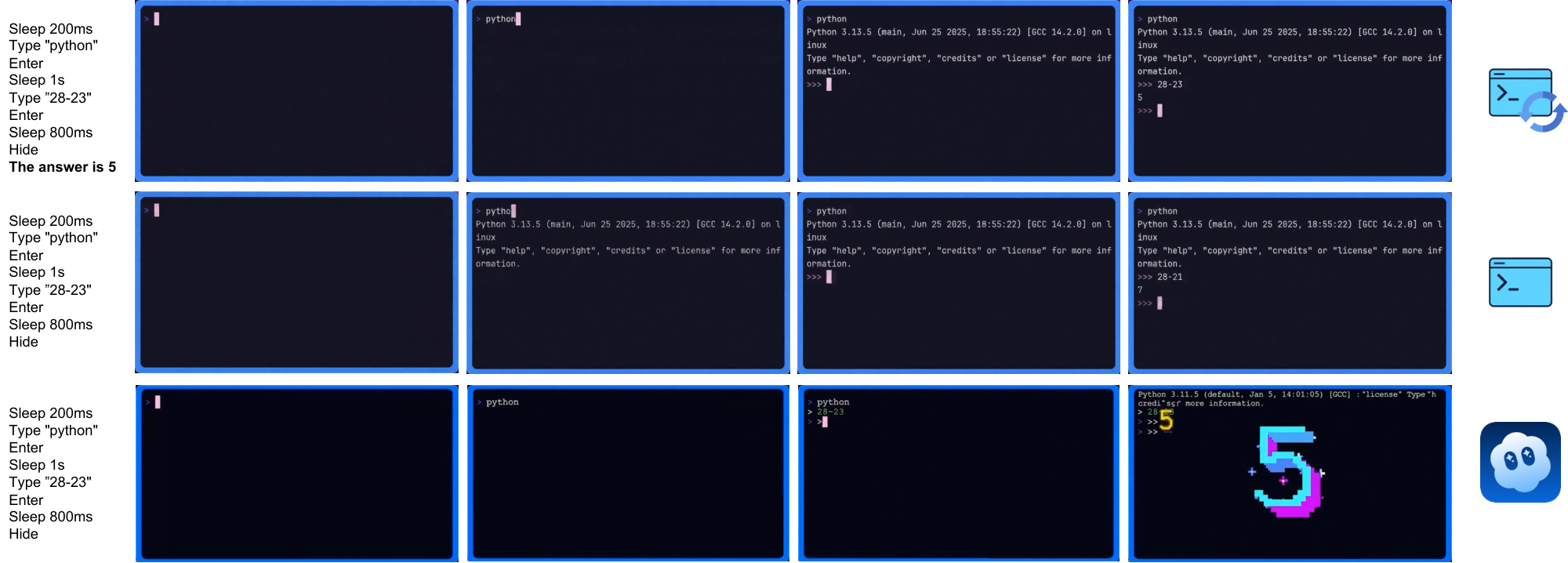

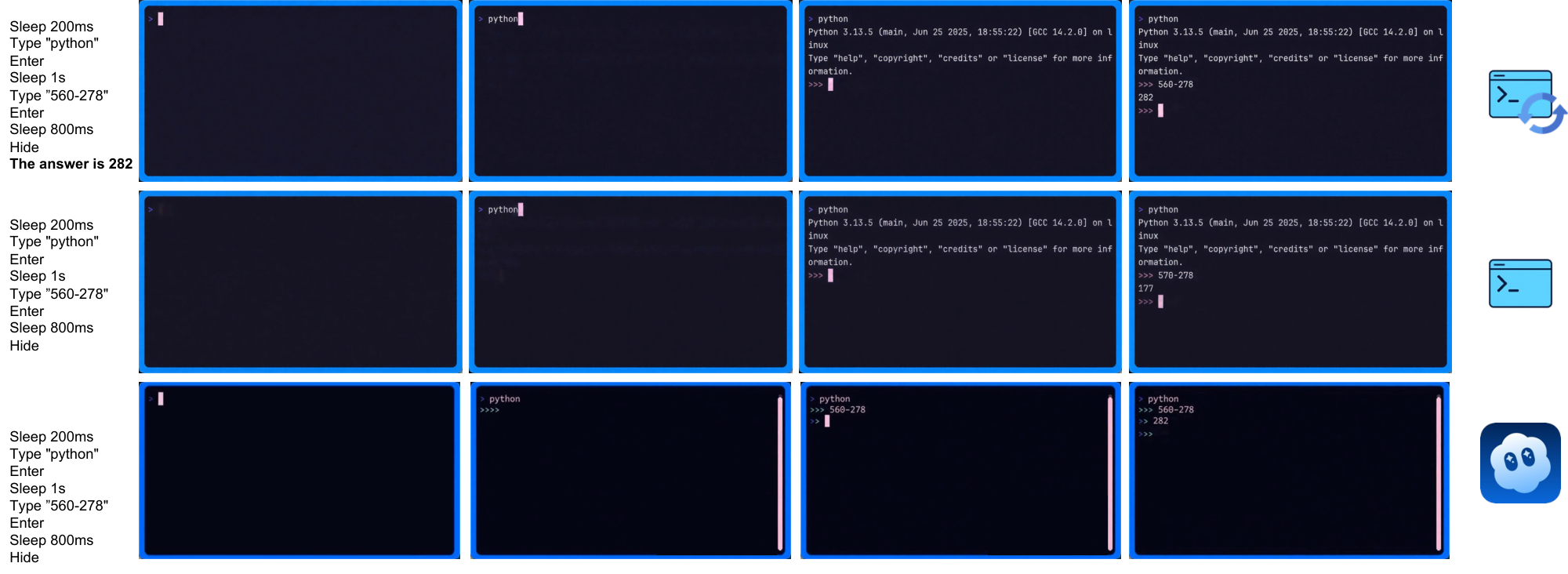

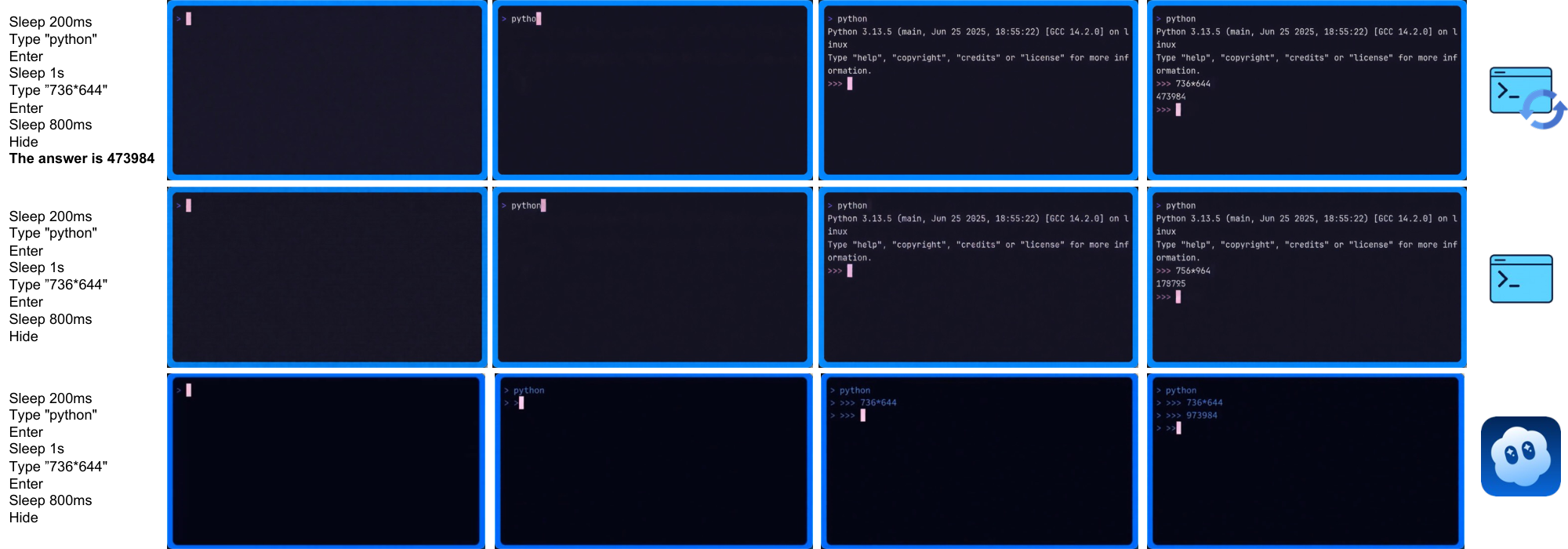

(2) CLIGen (Clean) REPL visualizations. In contrast to open-world traces, CLIGen (Clean) REPL samples are scripted and temporally well-paced (Figure 20–Figure 26; additional examples are in Appendix C). Each sample includes an explicit action trace (e.g., Sleep, Type, Enter, arrow keys, Hide) alongside rendered terminal frames, making the action-to-pixel link visually unambiguous. The key insight is that these scripted traces isolate rendering-and-control errors from semantic ambiguity: with explicit actions, failures are dominated by low-level mechanics (cursor placement, character edits, monospace alignment, line breaks, temporal consistency).

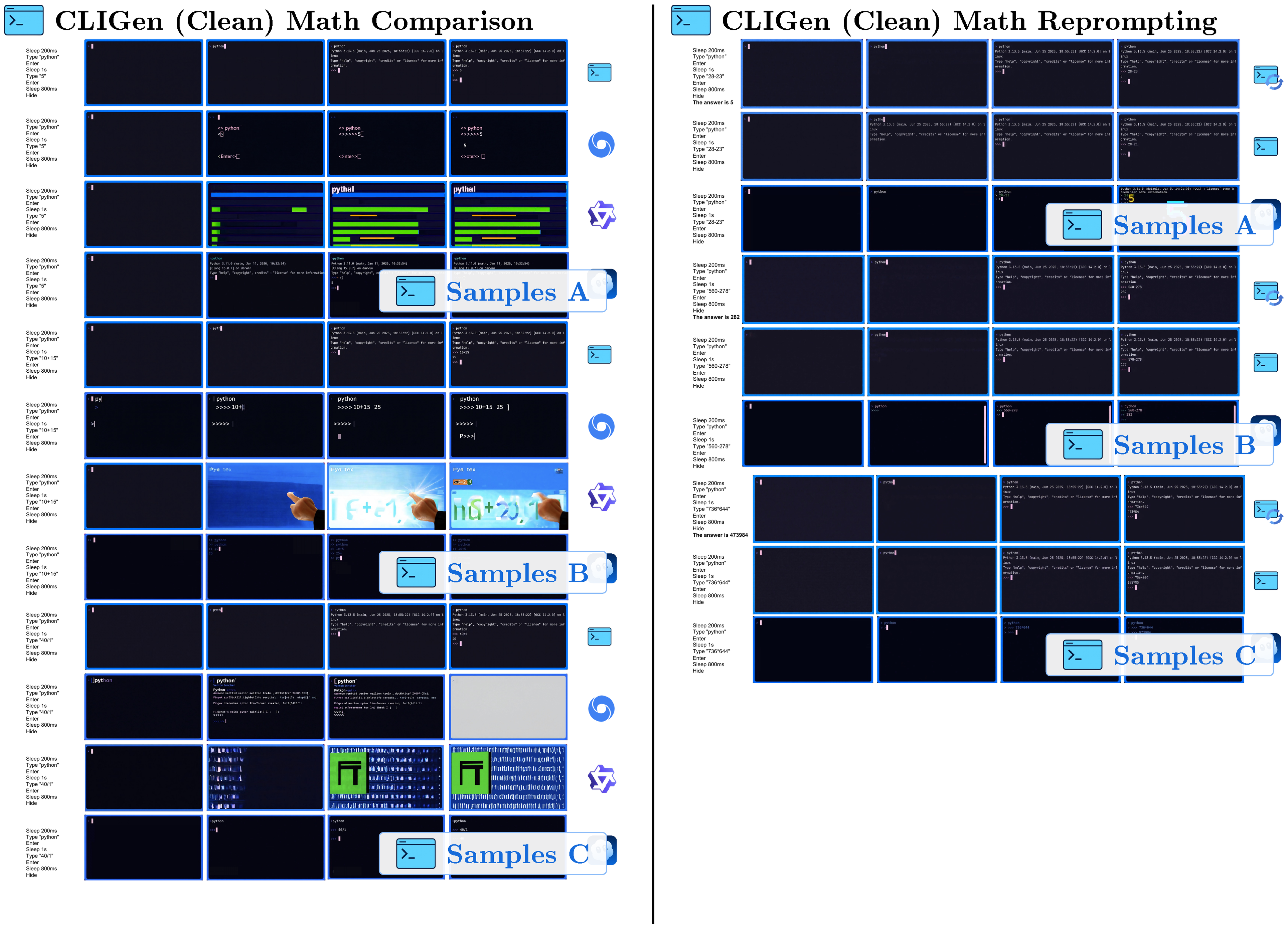

(3) CLIGen (Clean) math visualizations. Figure 28–Figure 32 compare math REPL rollouts, and Figure 34–Figure 38 show reprompting cases. Together they highlight why arithmetic probes should separate native computation from answer-conditioned rendering. All full-resolution pages are in Appendix E; below we keep clickable thumbnails at the original location for quick navigation.

CLIGen Visualization Thumbnails

Click any thumbnail to jump to its full-resolution page in Appendix

::: {caption="Table 8"}

:::

CLIGen Visualization Thumbnails

Click any thumbnail to jump to its full-resolution page in Appendix

::: {caption="Table 9"}

:::

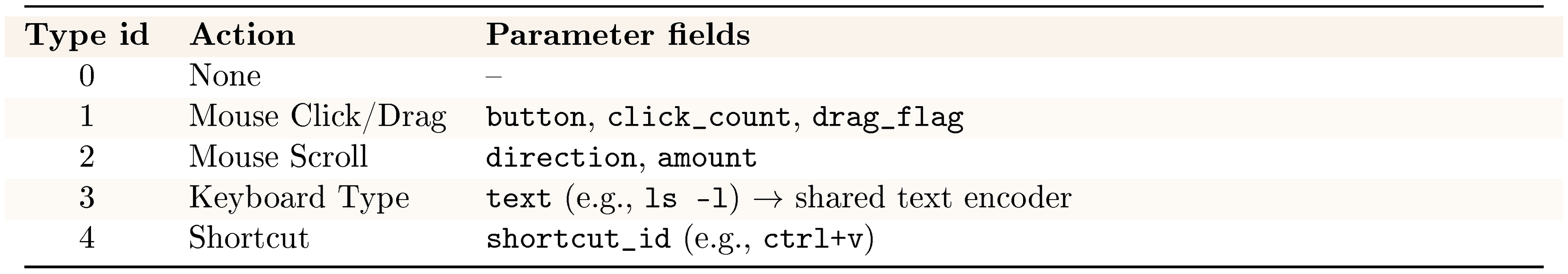

3.2 guiworldLogo The GUI World Models

We also instantiate the NC abstraction in interactive desktop environments with NC $_\text{GUIWorld}$ . In this setting, fine-grained action control is essential: GUI interaction requires precise cursor tracking, timely click feedback, and robustness to rapidly changing interface states. We model each interaction as a synchronized sequence of RGB frames $x_t$ and input events $u_t$ (mouse and keyboard). The latent video state maintains interface context across frames, while temporally aligned action inputs provide control signals designed to preserve pixel-level correspondence between user actions and visual changes.

3.2.1 Data pipeline

::: {caption="Table 10: Cursor/action statistics."}

:::

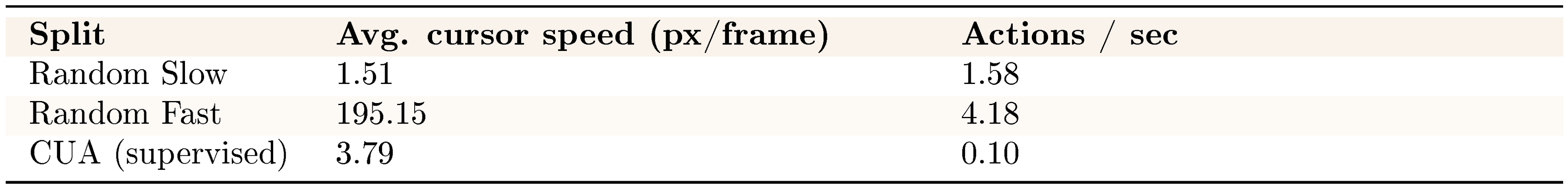

The dataset includes two styles of random interaction: "Random Slow" and "Random Fast", plus a smaller set of supervised trajectories collected by Claude CUA ([22]). Random Slow (approximately 1,000 hours) contains longer pauses, idle gaps, and deliberate cursor movements, which can expose cursor drift after extended inactivity. Random Fast (approximately 400 hours) features denser cursor motion and typing bursts, stressing acceleration dynamics and hover timing. The supervised trajectories are approximately 110 hours. These goal-directed traces provide higher-signal action–response pairs without overwhelming the exploration data. Table 10 summarizes cursor and action statistics across splits; in the collected CUA trajectories, action density is lower due to latency introduced by Claude’s tool API between successive steps.

All GUI data is collected inside an Ubuntu 22.04 container running XFCE4 (Arc-Dark theme, Papirus icons) on a fixed 1024 $\times$ 768 virtual display at 15 FPS. We render the display with Xvfb and interact through a VNC/noVNC stack. The desktop pins a small open-source app set to launchers. It includes Firefox ESR, GIMP, VLC, VS Code, Calculator, Terminal, the file manager, and the Mahjongg game, matching the environment shown in our recordings. Screen capture uses mss and ffmpeg with cursor overlays, and actions are replayed and logged via xdotool. We keep the recorded discontinuities and interface latency intact rather than smoothing them. In dataset packaging, we store both raw-action and meta-action views for modeling. This lets us train either the raw-action or meta-action encoder under the same loss stack[^5].

[^5]: Conversion details and alignment quality appear in Appendix D.

3.2.2 Model architecture

The GUIWorld architecture builds on the Wan2.1 ([18]) by incorporating explicit action-conditioning modules. The central challenge is to align time-stamped user actions with generated frames and inject this information at the appropriate depth within the transformer.

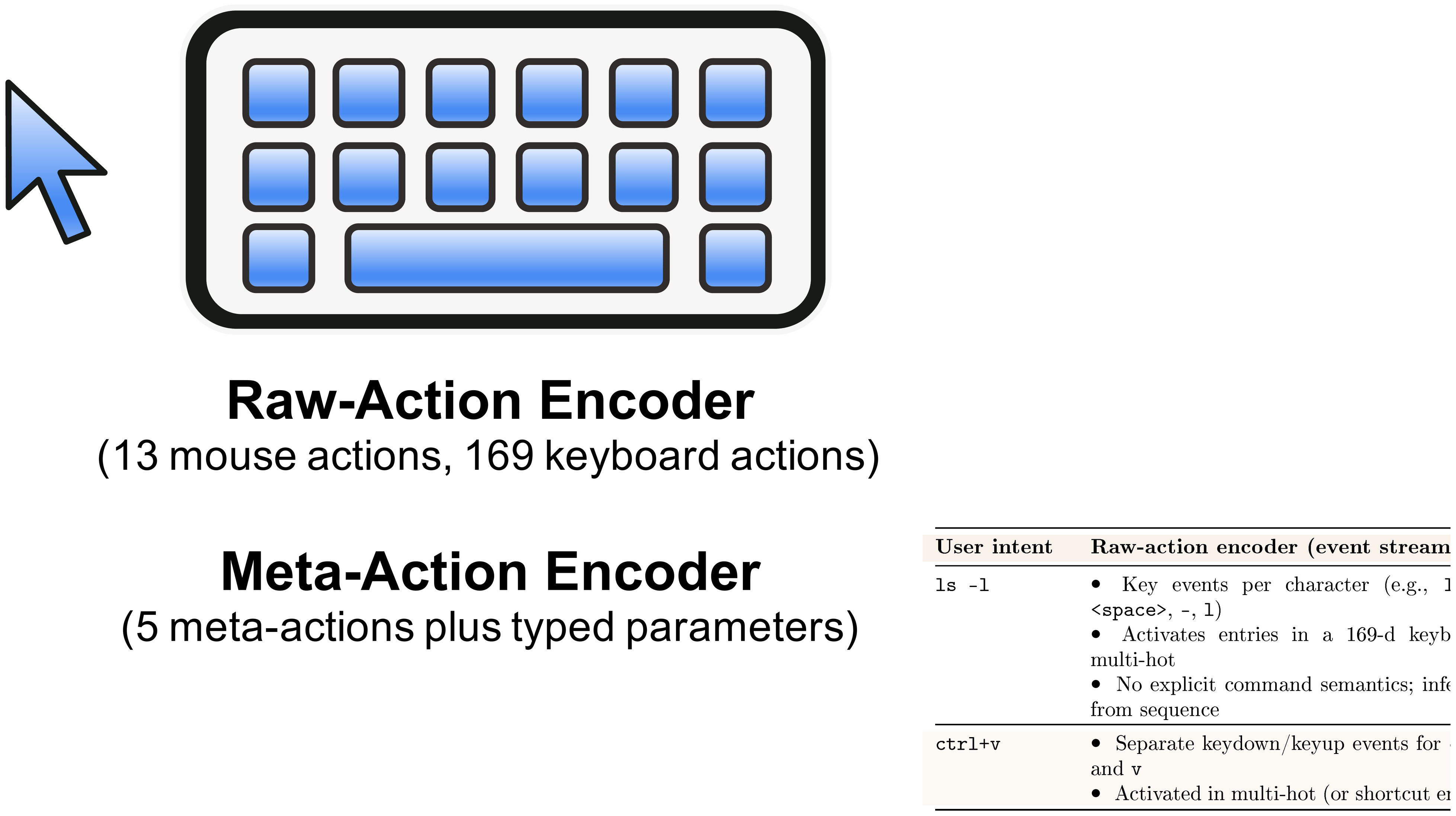

Action features are encoded on-the-fly from frame-aligned mouse and keyboard signals (Section 3.2.1). We aggregate them into latent-aligned embeddings that summarize recent action history at each diffusion step. We evaluate two action encoders. The raw-action encoder (v1) preserves fine-grained mouse/keyboard event streams. The meta-action encoder (v2) abstracts interactions into coarse API-style categories (clicks, drags, scrolls, typing, shortcuts). Both encoders use the same temporal alignment and are evaluated as separate ablations. In our experiments, their differences in rendering fidelity and control behavior are modest[^6].

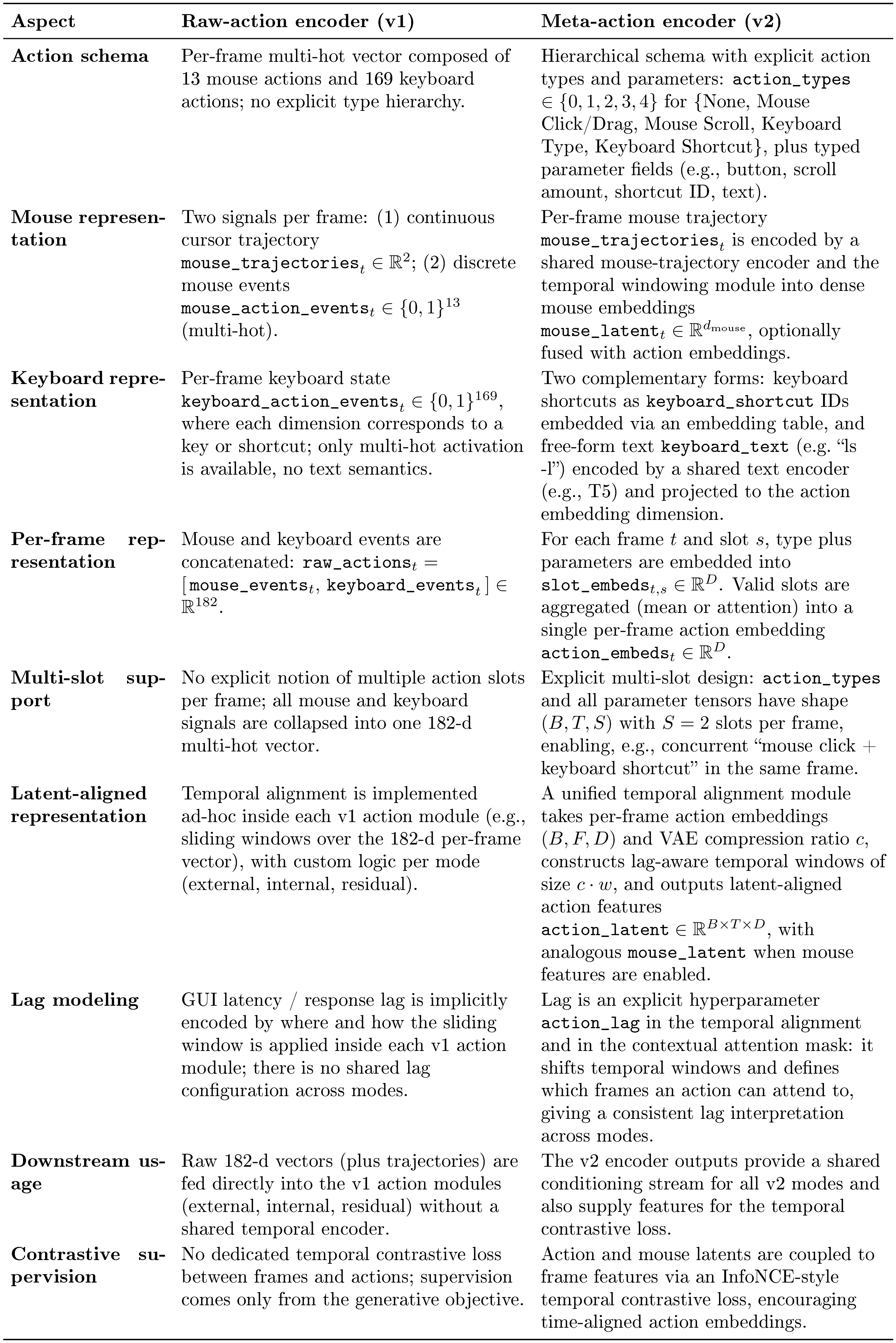

[^6]: Appendix Table 22 summarizes the representational differences.

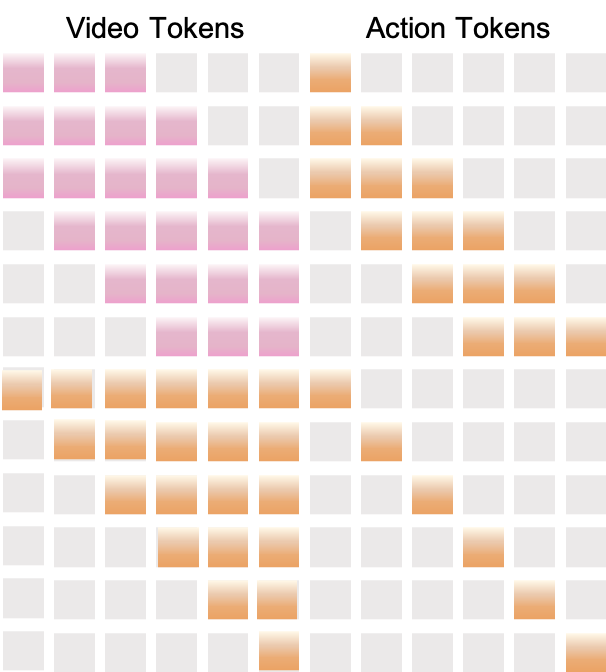

We inject action embeddings into the diffusion backbone in four ways (Figure 7). We study external, contextual, residual, and internal conditioning. For the injection-scheme ablation, all four modes share the same meta-action encoder and temporal alignment. They differ only in where the latent action features interact with the video latents and transformer blocks. We compare raw-action vs. meta-action encoders separately in Table 14.

External conditioning. In the external mode, action information modulates the latent video sequence before the diffusion transformer. Action features are applied as a pre-conditioning step at the model input, without introducing explicit action tokens or cross-attention inside the diffusion backbone. As a result, action information enters only through the modified input latents; the diffusion backbone never attends directly to action tokens, so any action signal must be carried implicitly in $z'_{1:T}$.

Formally, given VAE latents $z_{1:T}$ and temporally aligned action features $u_{1:T}$, an external action module applies a small stack of temporal self-attention and action cross-attention layers. This produces a residual update $\Delta z_{1:T}(u_{1:T})$. The modified latents are

$ z'{1:T} = z{1:T} + \Delta z_{1:T}(u_{1:T}), $

and the diffusion transformer operates solely on $z'_{1:T}$. The diffusion backbone remains unchanged, and action features are not exposed as explicit tokens within the transformer.

Contextual conditioning. In the contextual mode, actions are represented as additional tokens and integrated directly into the transformer’s self-attention. Similar token-based action representations have been explored in prior world models, including Gato ([23]) and World and Human Action Models ([24]).

The meta-action encoder produces latent-aligned action tokens $A \in \mathbb{R}^{L_a \times D}$. We concatenate them with visual tokens $V \in \mathbb{R}^{L_v \times D}$ to form a joint sequence $[V; A]$. Each transformer block applies self-attention over this combined sequence using a structured temporal mask (Appendix Figure 12). The mask enforces causal alignment: each frame token attends only to actions within a short past window, and each action token attends only to frames after a fixed temporal lag. Through this masked joint attention, contextual conditioning fuses action and visual information within the transformer blocks.

Residual conditioning. In the residual mode, the transformer block structure remains unchanged. A lightweight action module attaches to a subset of layers as an external residual branch. This follows the residual conditioning paradigm introduced by ControlNet ([25]), while remaining modular and additive to the base diffusion backbone.

At each selected layer $l$, the transformer applies its standard sequence of self-attention, text or reference cross-attention, and feed-forward operations to produce hidden states $h^{(l)}$. A separate action module then takes $h^{(l)}$ together with a local temporal window of latent action features and mouse trajectories. It outputs a residual update $\Delta h^{(l)}(a, \text{mouse})$. The updated hidden states are given by

$ \tilde{h}^{(l)} = h^{(l)} + \Delta h^{(l)}(a, \text{mouse}), $

which are passed to the subsequent transformer block. In this formulation, residual conditioning injects action information through block-external residual branches. It does not modify the internal computations of the transformer blocks themselves.

Internal conditioning. In the internal mode, action conditioning is incorporated directly within the transformer blocks. Related multi-stream world models have explored similar designs, including Matrix-Game-2 ([26]). Each selected block augments the standard attention stack with an additional action cross-attention sub-layer. Specifically, the block applies self-attention, followed by cross-attention over text and reference features, and then a dedicated action cross-attention layer. Keys and values are derived from latent action features (and, optionally, mouse inputs).

Given block input $h$, text or reference context $c$, and action latents $a$, the internal block computes

$ h' = \mathrm{FFN}\Big(h + \mathrm{CA}_{\text{text}}\big(\mathrm{SA}(h), c\big)

- \mathrm{CA}_{\text{action}}(h, a) \Big), $

where $\mathrm{SA}$ denotes self-attention and $\mathrm{CA}{\text{text}}$ and $\mathrm{CA}{\text{action}}$ denote the text and action cross-attention modules applied in sequence. As illustrated in Figure 7, action features are injected directly into the block’s cross-attention stage.

In contrast to residual conditioning, internal conditioning integrates action information through a block-internal attention mechanism rather than an external residual branch. This design mirrors the multi-stream injection strategy used in Matrix-Game-2 ([26]) and yields the best SSIM/FVD trade-off for fine-grained GUI interaction in our ablations. In this setting, precise temporal alignment and spatial locality are critical. Each conditioning mode (external, contextual, residual, and internal) is trained as a separate ablation, and no combinations are used.

3.2.3 Implementation Details

We train one model per injection mode (external, contextual, residual, internal), keeping the backbone and all non-action components fixed. Each run lasts about 64k steps. We tune only the action encoder and learning-rate schedule. Training optimizes the diffusion loss together with a small temporal contrastive loss that aligns frame features with action and mouse embeddings (Appendix D). Runs use 64 GPUs for about 15 days, totaling about 23k GPU-hours per full pass.

Preprocessing is implemented in the data loader in two stages. First, we normalize each recording to a fixed resolution and frame rate. This produces tensors for RGB video, per-frame cursor coordinates, and mouse/keyboard event traces (in both raw-action and meta-action views). Second, we render an SVG cursor at each logged position to produce per-frame masks and cursor-only reference frames. The first reference frame contains the full desktop with a unit mask. Later references paste only the cursor over a neutral background, with a mask restricted to arrow pixels. After VAE encoding, these references become latent slots that pin down the static GUI layout at $t{=}0$. For $t{>}0$, they supervise only a small patch around the cursor and leave the rest of the frame unconstrained. We drop clips without valid cursor or action traces to keep supervision consistent.

3.2.4 Evaluation setup

Our ablations target three capabilities: global fidelity, post-action responsiveness, and cursor-control precision. We use the $\textsc{FVD}$ / $\textsc{LPIPS}$ / $\textsc{SSIM}$ suite as the core metrics. We also add action-driven metrics that focus on post-interaction frames after clicks, scrolls, and key/type events. For example, we compute $\textsc{SSIM}$ / $\textsc{LPIPS}$ averaged over the $k$ frames after each logged action, and action-driven $\textsc{FVD}$ on post-action clips. Ablations vary conditioning design and action encoding to measure how these choices affect perceptual quality and responsiveness when rolled out against ground-truth interfaces[^7].

[^7]: Full metric definitions and implementation details are provided in Appendix B.3.

1. In GUIWorld, a small amount of goal-directed data outperforms much larger random exploration, showing that alignment quality matters more than nominal scale for action–response learning.

2. Precise cursor control requires explicit visual supervision: SVG mask/reference conditioning raises cursor accuracy to 98.7%, indicating that local GUI control primitives are learnable in controlled settings.

3. Action injection depth matters: relative to shallow `external` conditioning, `contextual`, `residual`, and especially `internal` fusion improve post-action responsiveness and visual consistency.

4. Action representation also matters: under the same injection mode, API-like meta-actions consistently outperform raw event-stream encoding.

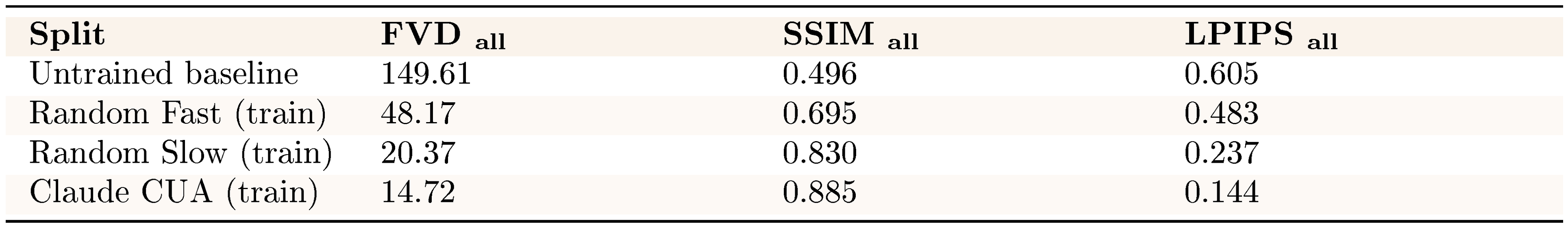

guiworldLogo Experiment 7: Data quality dominates performance

Interactive GUI modeling shows that data quality matters more than dataset size for action-driven performance. We compare slow exploration, fast interaction, and supervised trajectories under contextual conditioning. This isolates which behaviors best support neural computer training.

::: {caption="Table 11: Overall performance across data sources."}

:::

Despite approximately 1,400 hours of random exploration across the slow and fast settings, these datasets are noisy. They are comparatively sample-inefficient for learning stable action–response mappings. They substantially improve global perceptual metrics over a baseline (Table 11). However, high-frequency cursor jitter and irregular, non-goal-directed action bursts make consistent control difficult under dense, stochastic input streams.

In contrast, the substantially smaller high-quality dataset (110 hours from Claude CUA) yields markedly stronger performance across all metrics. Goal-directed trajectories provide clearer action semantics and more predictable state transitions. This enables robust action conditioning even with limited data volume. These results indicate that neural computer development should prioritize curated, purposeful interactions over large-scale passive data collection. At the current stage, this result primarily indicates that alignment quality matters more than nominal scale for learning action–response structure in NC prototypes.

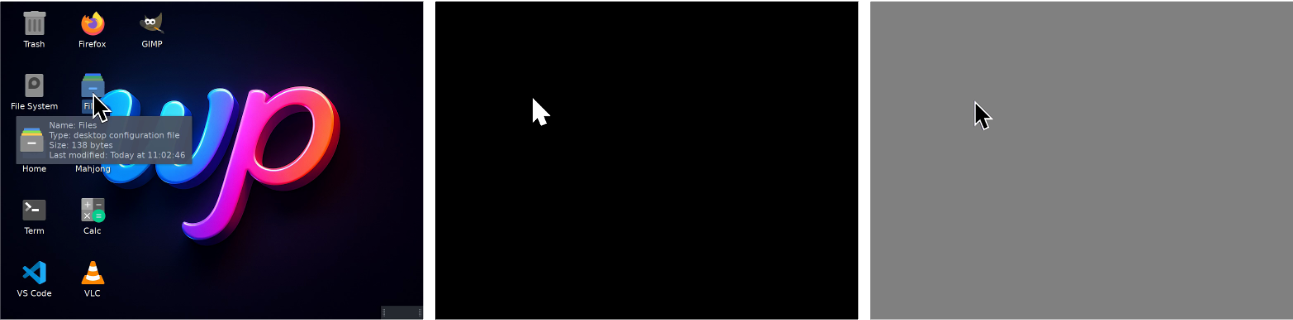

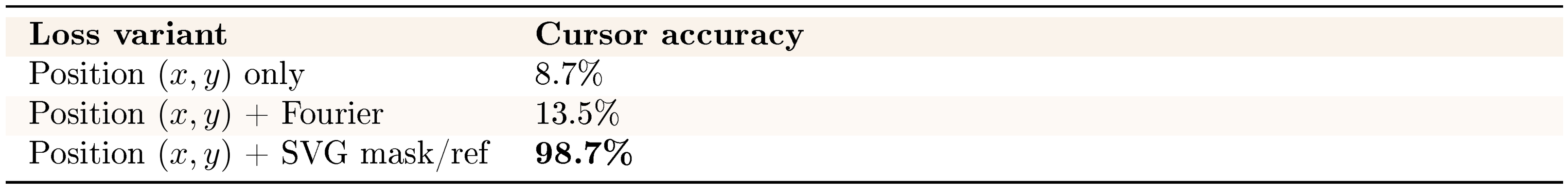

guiworldLogo Experiment 8: Precise cursor control requires explicit visual supervision

::: {caption="Table 12: Cursor conditioning losses versus accuracy."}

:::

We examine whether the NC internalizes cursor dynamics. A natural baseline is to condition on normalized cursor-coordinate sequences $\texttt{mouse_trajectories}\subset[0, 1]^{T\times 2}$ (details in Appendix D.4). To strengthen this signal, we further encode the normalized trajectories using a Fourier mouse encoder. We map coordinates to $[-1, 1]^2$ and project them through a fixed Gaussian matrix to obtain random Fourier features. A small MLP produces per-frame embeddings, which we aggregate into lag-aware windows aligned with the VAE stride. The resulting latent action sequence conditions the action modules and participates in the temporal contrastive loss.

However, Table 12 shows that coordinate-based supervision remains insufficient for precise interaction. Position-only supervision achieves 8.7% accuracy, and even enhanced position features reach only 13.5%. This suggests that richer coordinate encodings alone do not resolve cursor drift and jitter.

Motivated by the importance of precise cursor placement, we introduce explicit visual cursor supervision. We render an SVG cursor at each $(x_t, y_t)$ to produce per-frame cursor masks $m_t$ and cursor-only foregrounds $f_t$ (right panel of Figure 8). Following Figure 8, we construct a reference stream. The first frame contains the full desktop image, while subsequent frames contain only the cursor foreground over a neutral background, masked to the cursor region. We encode both the video and reference streams with the shared VAE, yielding video latents $z_{1:T}$, reference latents $z^{\text{ref}}{1:T}$, and mask tags $\tau{1:T}$. The diffusion transformer receives the concatenated tensor

$ \mathrm{concat}\bigl(z_{1:T}, , \tau_{1:T}, , z^{\text{ref}}_{1:T}\bigr), $

which anchors the static GUI layout at $t{=}0$ and provides localized supervision around the cursor for $t{>}0$.

Under this explicit visual conditioning, cursor accuracy improves to 98.7%. This suggests that neural computers benefit from learning the cursor state as a visual object rather than relying solely on abstract coordinates. Explicit pixel-level supervision helps model cursor acceleration, hover states, and click feedback, which are essential for reliable GUI interaction. At the same time, this result is best viewed as evidence that local GUI control primitives are learnable under explicit supervision in controlled settings.

guiworldLogo Experiment 9: Action injection under different schemes

::: {caption="Table 13: Action-driven metrics across injection schemes (15 frames after action)."}

:::

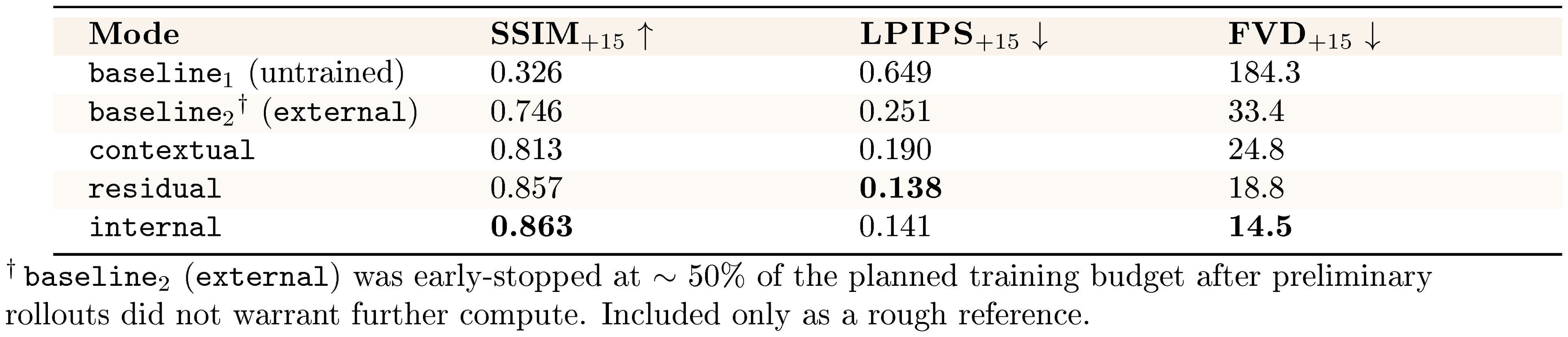

Holding data and the action encoder fixed, we compare injection schemes on clean runs (Table 13). We compute action-driven metrics over the 15 frames following each click, scroll, or key event. Relative to both baselines (untrained and external), mid- and deep-level fusion yields consistent improvements in post-action quality. This includes contextual, residual, and internal injection.

Specifically, moving from input-level conditioning (external) to token-level fusion (contextual) improves SSIM from 0.746 to 0.813 and reduces FVD from 33.4 to 24.8. Deeper injection sharpens these gains. internal achieves the highest structural consistency (SSIM 0.863) and the lowest temporal distortion (FVD 14.5), while residual attains the lowest perceptual distance (LPIPS 0.138). Together, these trends associate deeper action injection with improved tracking of fine-grained cursor motion and layout changes. [^8]

[^8]: Appendix D summarizes the corresponding injection schemes and alignment details.

guiworldLogo Experiment 10: Do action encodings matter?

::: {caption="Table 14: Raw-action vs. API-like action encoding under the same injection mode (15 frames after action)."}

:::

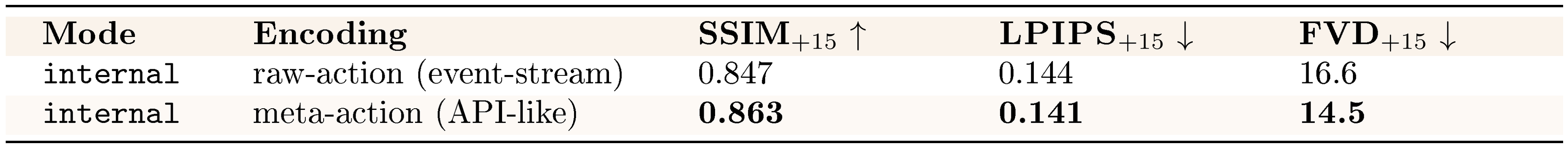

We compare two action encodings under the same injection mode to isolate the effect of representation choice (Table 14). Under internal conditioning, the meta-action (API-like) encoding yields small but consistent improvements over the raw-action representation. SSIM increases from 0.847 to 0.863, LPIPS drops from 0.144 to 0.141, and FVD drops from 16.6 to 14.5. However, these gains are modest compared to the substantially larger improvements observed when varying the action injection scheme itself (Table 13). This suggests that encoding granularity is not the dominant factor governing GUI interaction fidelity.

::: {caption="Table 15: Encoding examples for raw-action and meta-action encoders."}

:::

Table 15 contrasts how short commands and shortcuts (e.g., ls -l, ctrl+v) are represented under the two encodings. The raw-action encoder treats typing as a stream of individual key events, leaving command or shortcut semantics to be inferred from the sequence. In contrast, the meta-action encoder collapses each interaction into a single typed action with associated text or a shortcut identifier. This design aims to model user actions as structured, tool-like operations rather than fragmented event streams.

In practice, this more structured abstraction does not translate into clear qualitative gains. Rendered text remains similarly smeared under both encodings, and robustness under theme changes and timing noise is largely unchanged. Task-level failures such as re-centering, re-acquisition, and multi-step interactions persist across both representations. We adopt the meta-action encoder as the default for its simplicity and semantic alignment with system-level conditioning. These results suggest that encoding granularity is secondary to alignment quality and injection strategy.

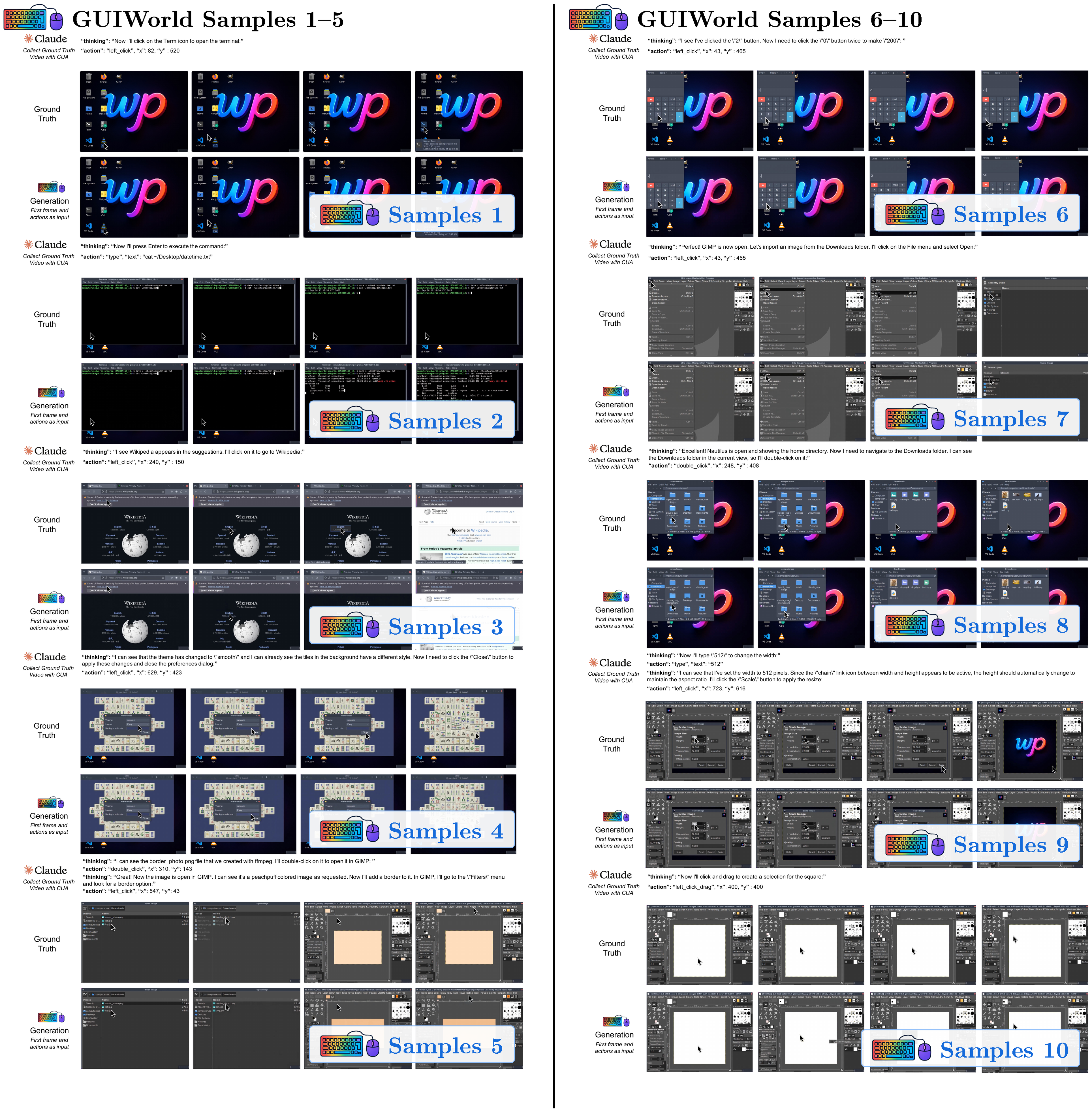

3.2.5 Visualizations

Across GUIWorld interactive rollouts, failure modes are dominated by data quality and by where action information enters the backbone. Goal-directed supervision produces smooth, target-aligned cursor paths and consistent post-click UI transitions, whereas random exploration yields bursty jitter and spurious actions that degrade visual coherence (Table 11; Figure 40–Figure 48). Consistent with the action-driven metrics in Table 13, deeper token-level injection (contextual/internal) yields more reliable post-action updates in interactive elements (hover states, dropdowns, modals) and maintains cursor alignment under rapid motion.

Figure 50–Figure 54 emphasize how small low-level deviations compound. Figure 56–Figure 60 focus on numeric/UI fidelity and interaction semantics. Figure 62–Figure 66 add stress cases where correctness hinges on precise field edits and page state. All full-resolution pages are in Appendix E; below we keep clickable thumbnails at the original location for quick navigation.

GUIWorld Visualization Thumbnails

Click any thumbnail to jump to its full-resolution page in Appendix

::: {caption="Table 16"}

:::

GUIWorld Visualization Thumbnails

Click any thumbnail to jump to its full-resolution page in Appendix

::: {caption="Table 17"}

:::

4. Position: Toward Completely Neural Computers

Section Summary: This section explores the current prototypes of neural computers, which demonstrate basic input-output handling and short-term task control but fall short of being reliable, general-purpose systems due to issues like inconsistent reuse and lack of long-term stability. Unlike traditional computers that rely on precise, human-designed symbols and instructions, neural computers use distributed, data-learned patterns for more flexible handling of complex, uncertain tasks like perception and planning. It defines completely neural computers as the ideal mature version—fully programmable, consistent in behavior unless updated, and capable of universal computation—and sketches a path forward while distinguishing them from AI agents and world models.

Section Overview

In this section, we ask what current Neural Computer (NC) prototypes have already shown, what still prevents them from becoming usable or general-purpose runtimes, and why neither current world models ([3, 27, 28, 29]) nor AI agents ([30, 22, 31]) yet amount to this emerging machine form. We then contrast NCs with conventional computers, clarifying that they are not a smarter layer on top of the existing stack, and define their mature general-purpose form, namely Completely Neural Computers (CNCs). Finally, we outline a roadmap toward CNCs, relate NCs to other system objects, and close with several remarks on NCs.

4.1 From Neural Computers to Completely Neural Computers

Current Status of NCs

Our CLI and GUI-based neural computers already show that early runtime primitives can be learned with measurable interface fidelity. In terminal environments, OCR-based text fidelity is already measurable (Table 5); in GUI settings, explicit visual supervision yields strong local cursor control (Table 12); and in GUIWorld, aligned goal-directed data clearly outperforms much larger random exploration (Table 11). Taken together, these results suggest that current NCs already support early runtime primitives, especially I/O alignment and short-horizon control, while stable reuse and general-purpose execution remain out of reach. This does not mean that current prototypes are already close to CNCs; it means that the outline of a distinct machine form has begun to emerge at prototype scale.

However, the current video-based prototypes are only early NC instantiations: if NCs are to mature into general-purpose runtimes, they must go well beyond basic I/O and short-term execution. At the formal level, this ultimately requires Turing completeness ([32, 33, 34, 35]), universal programmability ([36, 37]), and behavior consistency unless explicitly reprogrammed ([38]). Before those conditions are met in full, progress is better read through practical acceptance lenses: routine reuse, execution consistency, and explicit update governance. These lenses matter because the immediate question is not whether CNCs have already been achieved, but whether NCs are beginning to behave more like usable runtimes than isolated demonstrations. For example, once an incident-response routine has been installed, the system should reuse it on later alerts rather than rediscovering the procedure from scratch each time; and if its behavior changes, that change should be attributable to an explicit update rather than ordinary execution. In practice, this reduces to three acceptance lenses: install–reuse, execution consistency, and update governance, which together offer a more useful view of current NC progress than the full CNC definition alone.

While certain sequential neural architectures are Turing complete ([35, 39]) in principle, turning a trained instance into a reliably programmable runtime remains challenging. Preliminary attempts, including Neural Virtual Machine ([40]) and NeuroLISP ([41]), have been explored. Furthermore, ensuring stable behavior over long temporal horizons remains an open problem in neural systems ([42, 43]). Section 4.2 provides a more detailed discussion of these requirements. To the best of our knowledge, existing works on world models lack an analysis of the computability class of the learned models. See Figure 9 for more discussion between NCs and other system objects, including world models and AI agents.

::: {caption="Table 18: Four system objects compared at a common systems level."}

:::

Fundamental differences between NCs and conventional computers

We compare NCs and conventional computers. Here, conventional computers denote random-access machines with instruction set architecture ([44, 45]) and layered OS/application stacks programmed via human-designed high-level languages ([46, 47]). NCs differ fundamentally from conventional computers in their architectural and programming-language semantics.

At the architectural level, random-access machines instantiate local, compositional symbolic semantics ([48]), yielding exactness and interpretability, but brittleness under noise and model mismatch ([49]). Neural computers, by contrast, realize holistic, distributed numerical semantics, trading precise local semantics for robustness and generalization ([50, 51, 52, 53, 54, 55]). Empirical evidence indicates that such holistic numerical representations are particularly well suited to domains characterized by high-dimensional representations ([56]), soft or statistical constraints ([57]), and globally coupled structures ([58, 59]), including perception, natural language, planning under uncertainty, and approximate reasoning. Although conventional computers can, in principle, emulate NCs, doing so often introduces unnecessary conceptual and engineering complexity when the target tasks are already well matched to neural architectures.

At the programming-language level, NCs differ from conventional computers because their "language semantics" are the meanings of user input sequences learned from data rather than explicitly designed by humans. For example, LLMs can be viewed as programmable computers in which prompts act as programs ([60]). In this case, the programming language is a natural language, which no non-neural system has historically been able to interpret robustly at scale ([61]). More broadly, learned programming-language semantics are not constrained by a human-specified syntax/semantics boundary and can, therefore, encode task-relevant conventions implicitly ([62]).

Definition of Completely Neural Computers

We use CNC to denote the mature form of an NC. Formally, a Neural Computer instance is complete if it is (1) Turing complete, (2) universally programmable, (3) behavior-consistent unless explicitly reprogrammed, and (4) realizes the architectural and programming-language advantages of NCs relative to conventional computers. The following section unpacks these conditions in operational terms.

::: {caption="Table 19: Operational reading of the four CNC requirements."}

:::

4.2 A Roadmap Towards CNC

We frame the path toward CNCs through a set of formal requirements together with the practical challenges that must be resolved before those requirements become engineerable.

Turing completeness

A Neural Computer (NC) instance (a specific architecture with fixed learned weights) defines a class of computational models in which each model corresponds to at least one memory state instance. In the formal computability discussion below, "memory state" is used in the classical state-machine sense; operationally, it corresponds to the NC runtime state introduced earlier. An NC instance is Turing complete if, for any given Turing machine, there exists an initial memory state that allows the NC to emulate that machine exactly. Notice that although Recurrent Neural Networks (RNNs), Neural Turing Machines (NTM) ([1]), and Differentiable Neural Computers (DNC) ([2]) are Turing complete in the asymptotic sense, a particular RNN, NTM, or DNC instance with finite precision cannot be Turing complete due to their fixed finite memory size. For an NC instance to achieve universality, unbounded effective memory is necessary. An NC instance has unbounded effective memory if there are infinitely many possible memory state instances. Existing works approach such unboundedness by progressively growing model parameters ([63]) or context ([59]).

Universal programmability

An NC is universally programmable if, for each given Turing machine, there exists an input sequence such that the NC taking this input realizes a new memory state representing the given machine. Most existing universal programmability results for neural networks are established by constructing computational primitives and proving that their composition can simulate a universal computational model ([11]). Likewise, we believe that universal programmability in NCs can be achieved through compositional neural programs ([64]).

Behavior consistency

A CNC must preserve its function unless explicitly reprogrammed. For each memory state, there must be a non-empty set of inputs that executes the CNC without changing its pure function. Operationally, this requires a separation between run and update: ordinary inputs should execute installed capability without silently modifying it, while behavior-changing updates should occur explicitly through a programming interface. This in turn motivates training and architectural mechanisms that disentangle function use from function update, so that routines can be installed, executed, and composed without accidental functional drift. We hypothesize that gating mechanisms, such as those in LSTM ([65]), are effective in achieving this conditional invariance. In practice, making this separation reliable requires clear boundaries around what state persists across tasks, what counts as an explicit update, and what execution evidence can be replayed, compared, or rolled back.

**Run / update contract.**

- **Run:** invoke installed capability without silently changing persistent behavior.

- **Update:** any behavior-changing modification should occur explicitly through a programming interface.

- **Required boundaries:** state (what persists), update (what counts as reprogramming), and evidence (what can be replayed, compared, or rolled back).

Architectural semantics

Since NC behavior is governed by real-valued parameters, learning can produce input–output mappings that generalize across variations within the training distribution ([66]). For example, after observing many instances of how the visual state of a spreadsheet interface changes when values are typed into cells, a model may learn the underlying transformation and correctly predict the screen updates for previously unseen spreadsheets that follow the same interaction rules. Such in-distribution generalization arises from the smooth function approximation properties of neural networks and their ability to interpolate across previously observed patterns. Furthermore, learning can also produce novel input–output mappings that are not explicitly represented in the training data, potentially introducing new computational primitives ([67]). The combination of such newly formed primitives could enable qualitatively new functions, yielding out-of-distribution functional generalization ([68]).

Beyond emulating conventional computers, NCs can natively support functions whose semantics are ill-suited to symbolic APIs ([69]), including probabilistic inference over high-dimensional latent states ([70]), representation learning ([56]), retrieval over dense memories ([2]), and end-to-end differentiable pipelines that couple perception and control ([58]). These functions are first-class at the architectural level and operate directly on distributed states. This enables capabilities such as learned heuristics ([58]), uncertainty-aware decision-making ([71]), and continual adaptation ([72]).

Because the memory state of an NC/CNC is numerical, computer configuration and design emerge as alternatives to application-level programming: the computer itself is configured by optimizing its internal state to achieve desired computational behaviors under task-defined objectives. Depending on the differentiability of the loss, methods such as Adam ([73]) and natural evolution strategies([74]) apply. In a CNC, the memory constitutes a continuous manifold, so realizing a target capability amounts to synthesizing a machine configuration (a memory state) that minimizes a user-specified loss (e.g., “minimize proof error”) via direct numerical updates to the computer’s state. This reframes system construction from discrete code authoring to differentiable configuration of the computer itself ([75]), with progress evaluated by solver convergence, stability, and reliability relative to combinatorial program search (e.g., LLM-based code generation ([30])).

Programming-language semantics

The learned programming-language semantics of NCs enable a shift from rigid coding to learned specifications, in which user inputs themselves function as programs ([76, 77]). Rather than centering development on explicitly authored code, NCs expose a learned language whose syntax and semantics are acquired from data ([78, 79]), so natural-language instructions, examples, and constraints serve as executable specifications ([80]). Consequently, brief user inputs can replace long sequences of low-level actions. Development, therefore, moves from code authoring to curating, specifying, and verifying inputs under a learned programming-language semantics, aligning system behavior with human intent via in-context specification rather than forcing users to conform to rigid, brittle interfaces ([81]). This does not imply that code disappears, but rather that code becomes one installation medium among several, alongside prompts, demonstrations, trajectories, and constraints.

Since NCs are programmed via users' input sequences under learned programming-language semantics, the training data for programming NCs, i.e., paired user I/O traces ([82]), is far more abundant and continuously generated than high-quality, human-written code. Every interaction with digital systems produces structured streams of inputs, interface states, and effects that can be logged at scale (e.g., keystrokes, cursor trajectories, screen transitions), yielding orders-of-magnitude more supervision than curated program corpora ([83]). These I/O traces constitute executable specifications, revealing user intentions and computer behavior ([84]). This enables end-to-end learning of interface conventions, control policies, and task semantics without requiring explicit program text ([85]). This asymmetry in data availability favors NC training regimes that leverage ubiquitous interaction logs and, by supporting broader task coverage, reduces dependence on brittle, sparsely available code datasets.

4.3 Relations to Other System Objects

Figure 9 summarizes the systems-level shift: conventional computers are used directly, AI agents mediate existing computers, world models act as a parallel predictive layer, and NCs aim to make the learned runtime itself the machine.

The comparisons below unpack this shift relative to conventional computers, world models, and AI agents. Conventional Computers

Conventional computers remain the reference system object for reliable execution, explicit programmability, and mature governance. NCs differ not by adding a smarter application layer on top of this substrate, but by shifting computation, memory, and I/O into a learned runtime state. In this sense, NCs are best viewed as a different candidate machine form and computing substrate rather than as a direct extension of the conventional software stack. This framing does not imply that conventional computers will disappear soon, but rather that future systems may be built from a different underlying runtime substrate.

World Models

World models learn environment dynamics by predicting action-conditioned transitions ([3]). Such target environments range from the most ambitious, where all sensory inputs form the real world ([12]), to much narrower scopes, such as a few control parameters of a robot arm ([86]). They provide one technical perspective on current NC prototypes, since interactive computers are an important class of action-conditioned environments, but they do not by themselves define the NC abstraction. Many current approaches to modeling computational environments, such as the physical world, also rely heavily on computer-generated data ([87, 88, 89, 90]), potentially leading to models that share characteristics with neural computers.

AI Agents

Another important comparison point is AI agents built on top of modern AI models and external software substrates, including computer-use agents, coding and multi-agent systems ([91, 22, 30, 92, 93]), and recursive self-improvement loops ([94]). These systems place a learned agent between the user and an external execution substrate, whether that substrate is a GUI, a codebase, or a broader software toolchain. This provides strong leverage from existing computers and software stacks, but it also preserves a strict separation between the learned model and the runtime that actually stores executable state, applies updates, and enforces system contracts. Computer-use agents operate through low-bandwidth I/O; coding agents typically emit symbolic artifacts that must be executed elsewhere; and RSI-style loops improve the agent by iterating over external tools, prompts, or code rather than by turning the runtime itself into the computer. Such systems also increasingly rely on automated evaluators, including agent-as-a-judge schemes, to rank outputs, validate task completion, and close iterative improvement loops ([95]). We hypothesize that a sufficiently capable NC can internalize many of these agentic functions within one persistent neural runtime.

4.4 Additional Thoughts

The remarks in this section are intended as hypotheses and design directions motivated by the present results, rather than as empirical conclusions established by the current prototypes.

ONE

ONE ([96]) proposed a single neural substrate that incrementally absorbs and reuses diverse learned skills. While ONE was not instantiated as a computer-like runtime with explicit I/O, programmability, and update governance, a mature CNC can be viewed as a plausible systems-level realization of this idea. In this sense, many specialized world-model-like components may ultimately appear not as separate external systems, but as installable capabilities within one persistent neural runtime.

Video models as a pragmatic prototype substrate

We build our prototypes on state-of-the-art video models because they currently provide the simplest path to an end-to-end learned latent runtime state that jointly models pixels, dynamics, and action-conditioned control. This choice is pragmatic rather than fundamental. In our experiments, symbolic and algorithmic reasoning in terminal settings remains inconsistent for most strong video models, and even simple arithmetic can fail (Table 6). Sora2 is a notable exception in our probe, achieving $71%$ arithmetic accuracy, suggesting that some terminal symbolic reasoning is already possible in modern video generators. At the same time, we do not claim that video models cannot reason more broadly: recent work reports that video models can act as zero-shot learners and reasoners in naturalistic settings ([97]). We expect reasoning capabilities to improve quickly with continued progress in video modeling, but our results suggest that CNC-level reliability will likely require additional architectural and training ingredients beyond scaling today's video generators.

A hypothesis: machine-native neural architectures

We emphasize that the following is a conjecture rather than a conclusion drawn from our experiments. Closing the reasoning gap may not require designing neural networks that more closely mimic animal cognition or the human brain. Many influential architectures, including convolutional networks ([98]) and linear/quadratic Transformers ([8, 59]), are highly engineered systems, but their core inductive biases remain strongly influenced by biological perception and attention. These models primarily rely on continuous, distributed representations, in which reasoning behavior emerges implicitly from large-scale training. We hypothesize that CNCs may instead benefit from designs that are explicitly machine-native. Developing discrete operations, compositional structures, and verifiable computation that are harmonious in neural systems may play an essential role in designing such systems. This approach follows more closely the construction of conventional computers from well-defined computational primitives and stands in contrast to relying on emergent reasoning in generic video generation models.

Neural networks generation via NC interaction

Neural network generation can be viewed as a form of programming, i.e., the synthesis of a neural architecture and its corresponding weights. Because NCs' architectural semantics are already neural and numerical, neural components are first-class, and generation directly manipulates the memory state rather than translating it into symbolic code. Moreover, NCs can be programmed through I/O interaction: sequences of inputs, observations, and outcomes act as executable specifications that shape the internal state and routines of the system ([84, 99]). This suggests a path in which users generate and refine neural modules within NCs through interactive traces, treating interaction logs as programs that configure and compose neural computation.